In the first stage of the SMB and ROBO HCI benchmark, we obtained 6.7 million IOPS, 51% of the theoretical 13.2 million IOPS, on a production-ready HCA cluster of 12 Supermicro SuperServers featuring Intel® Xeon® Platinum 8268 processors, Intel® Optane™ SSD DC P4800X Series drives, and Mellanox ConnectX-5 100GbE NICs, all connected by a Mellanox SN2700 Spectrum™ switch and Mellanox LinkX® copper cables. This is the standard hardware configuration of our production HCAs; only the CPUs were upgraded to chase the HCI industry record.

We doubled the storage power of the cluster by installing two more Intel® Optane™ SSD DC P4800X Series drives in each node. The NVMe drives are configured as Write-Back cache according to Intel recommendations, and Intel® SSD D3-S4510 drives serve as primary storage. The storage now has block-level replication; the cache, however, is not replicated. This configuration suits applications such as SQL Server Availability Groups (AGs), SAP, and other databases that synchronize data between shared storage and cache (consistency checks).

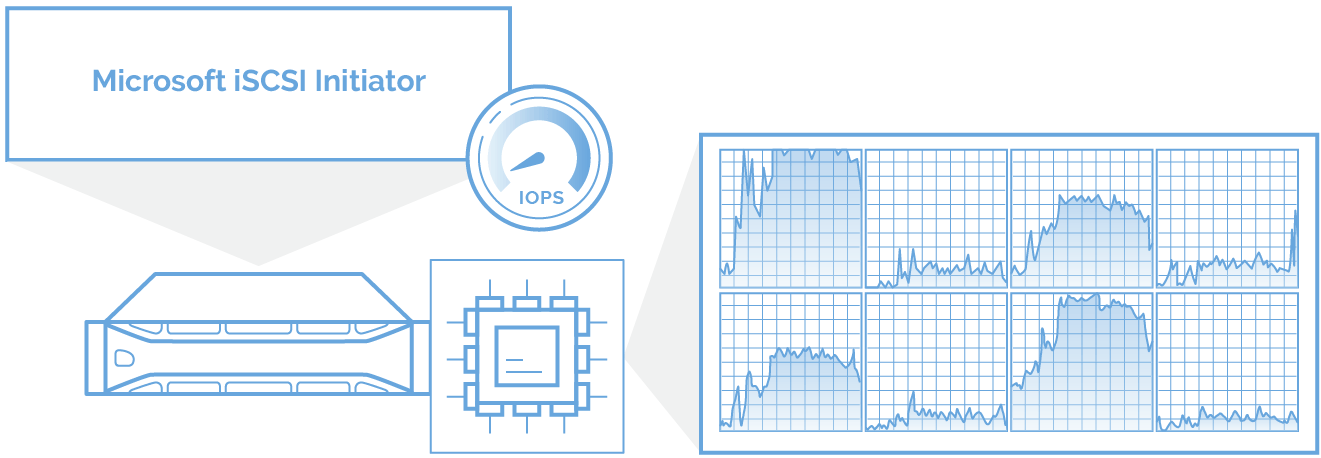

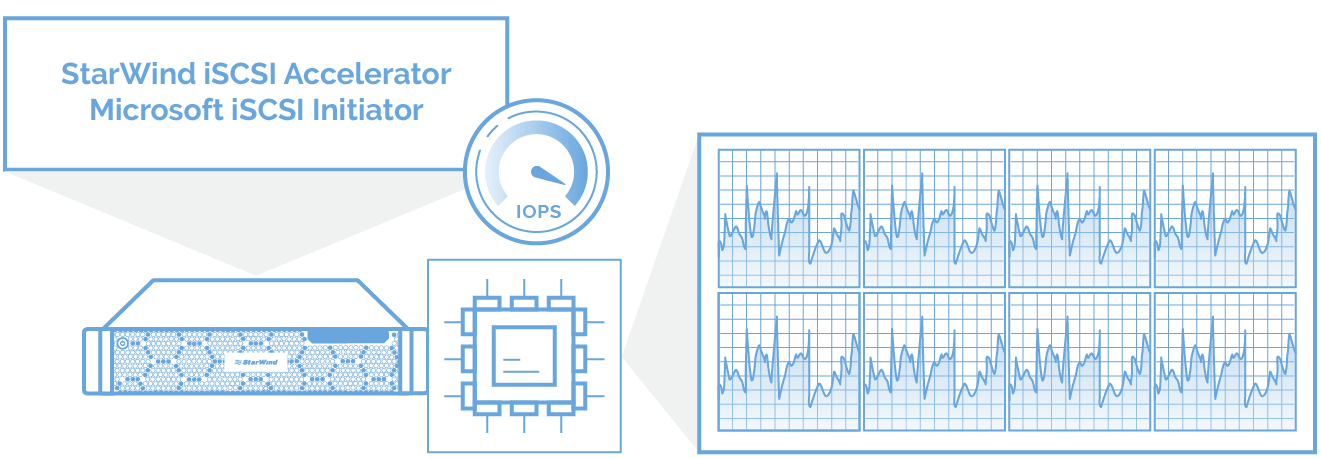

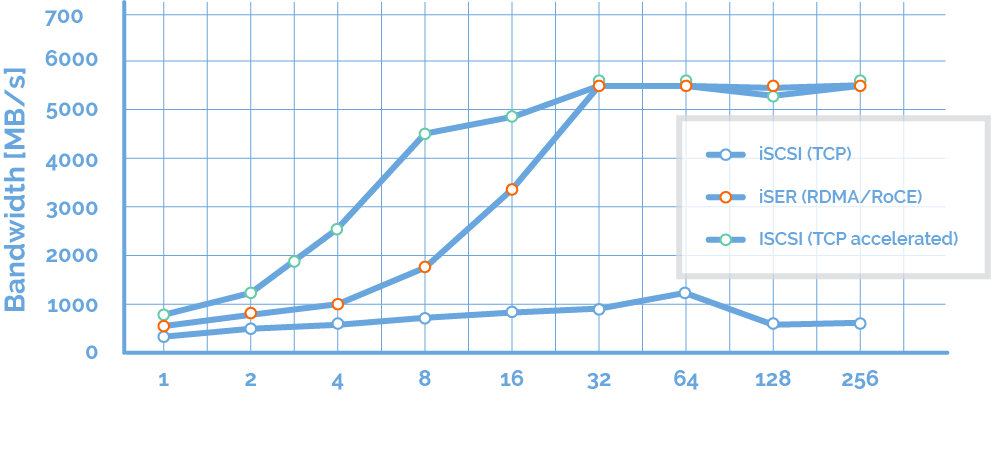

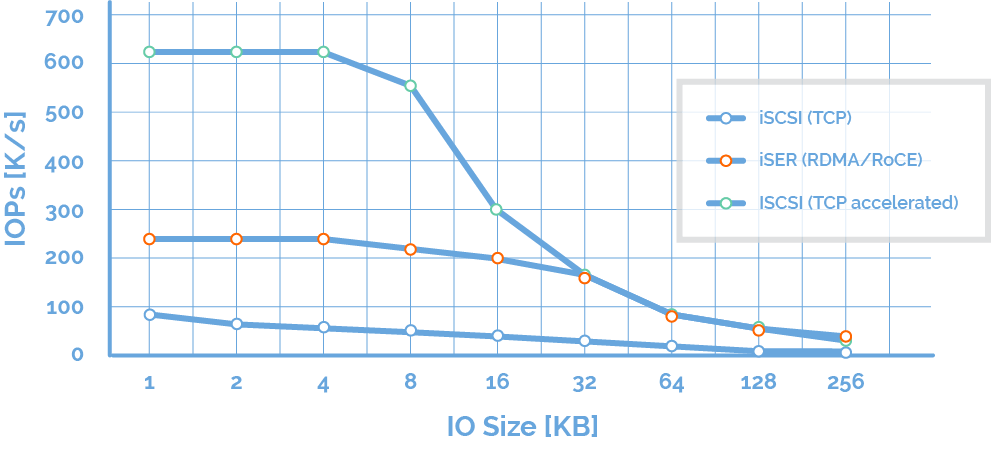

In our setup, StarWind HCA ran the fastest software available: Microsoft Hyper-V (Windows Server 2019) and the StarWind Virtual SAN service application, which runs in Windows user-land. StarWind supports polling in addition to interrupt-driven IO; polling reduces latency and boosts performance by turning CPU cycles into IOPS. We also developed TCP Loopback Accelerator to bypass the TCP stack on the same machine, and an iSCSI load balancer that assigns each new iSCSI session to a new CPU core, fixing issues in the aging Microsoft iSCSI Initiator.

On our website, you can learn more about hyperconverged infrastructures powered by StarWind Virtual SAN.

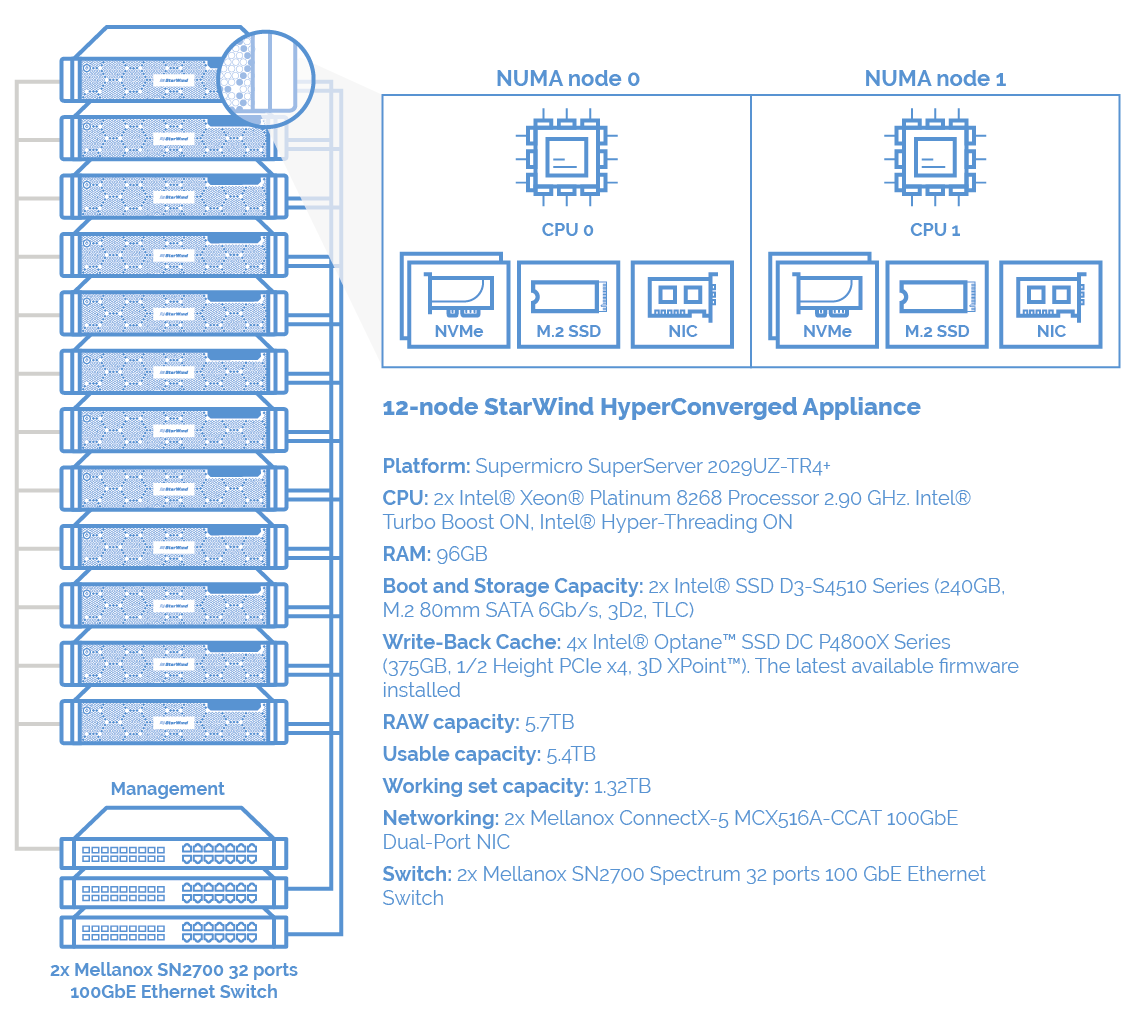

Platform: Supermicro SuperServer 2029UZ-TR4+

CPU: 2x Intel® Xeon® Platinum 8268 Processor 2.90 GHz. Intel® Turbo Boost ON, Intel® Hyper-Threading ON

RAM: 96GB

Boot and Storage Capacity: 2x Intel® SSD D3-S4510 Series (240GB, M.2 80mm SATA 6Gb/s, 3D2, TLC)

Write-Back Cache Capacity: 4x Intel® Optane™ SSD DC P4800X Series (375GB, 1/2 Height PCIe x4, 3D XPoint™). The latest available firmware installed

RAW capacity: 5.7TB

Usable capacity: 5.4TB

Working set capacity: 1.32TB

Networking: 2x Mellanox ConnectX-5 MCX516A-CCAT 100GbE Dual-Port NIC

Switch: 2x Mellanox SN2700 Spectrum 32 ports 100 GbE Ethernet Switch

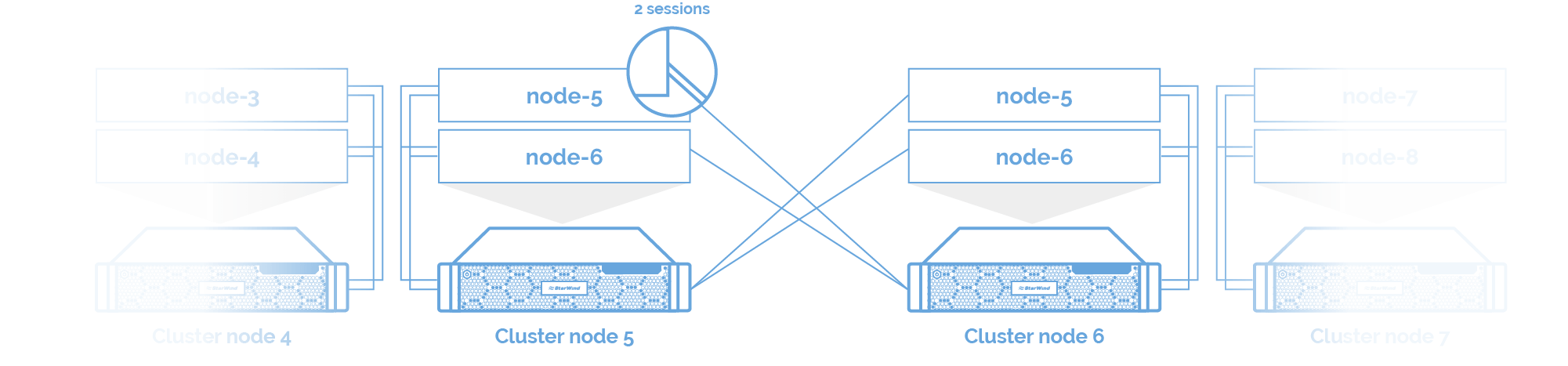

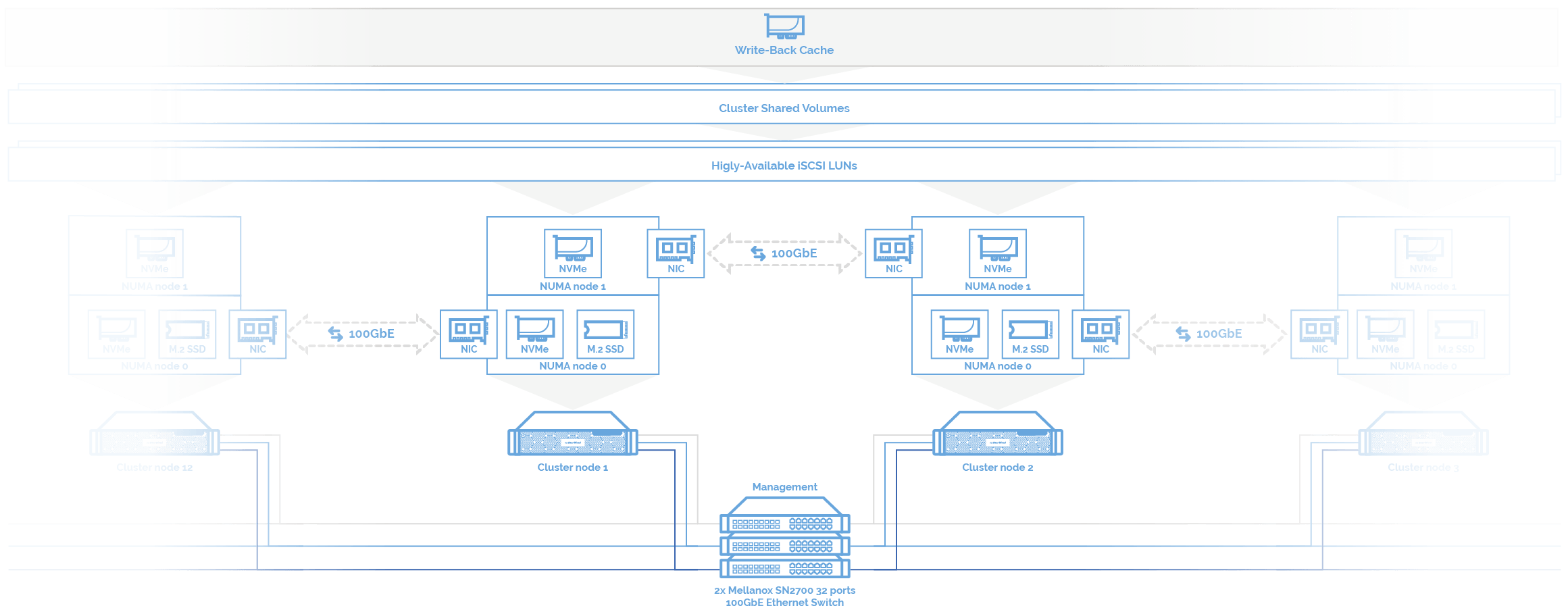

The diagram below illustrates the servers’ interconnection.

NOTE: On every server, each NUMA node has 1x Intel® SSD D3-S4510, 2x Intel® Optane™ SSD DC P4800X Series, and 1x Mellanox ConnectX-5 100GbE Dual-Port NIC. This layout let us squeeze maximum performance out of each piece of hardware, but it is a recommendation rather than a strict requirement. No NUMA node tweaking is required to obtain similar performance: the default settings were sufficient.

Operating system. Windows Server 2019 Datacenter Evaluation version 1809, build 17763.404, was installed on all nodes with the latest updates available on May 1, 2019. With performance in mind, the power plan was set to High Performance; all other settings, including the relevant side-channel mitigations (mitigations for Spectre v1 and Meltdown were applied), were left at their defaults.

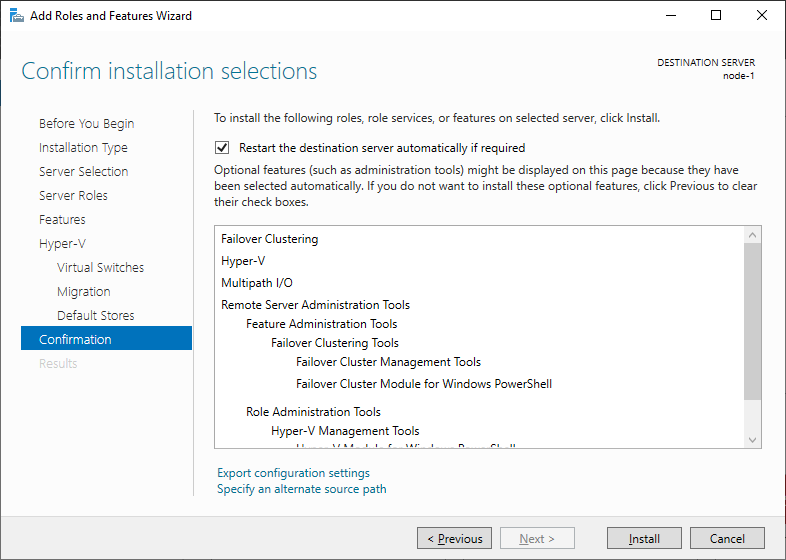

Windows Installation. Hyper-V Role, MPIO, and Failover Cluster features

To make the deployment process faster, we made an image of Windows Server 2019 with the Hyper-V Role installed and the MPIO and Failover Cluster features enabled. Later, the image was deployed on the 12 Supermicro servers.

Driver installation. Firmware update

Once Windows was installed, Windows Update was applied for each piece of hardware, and firmware updates were installed for the Intel NVMe SSDs.

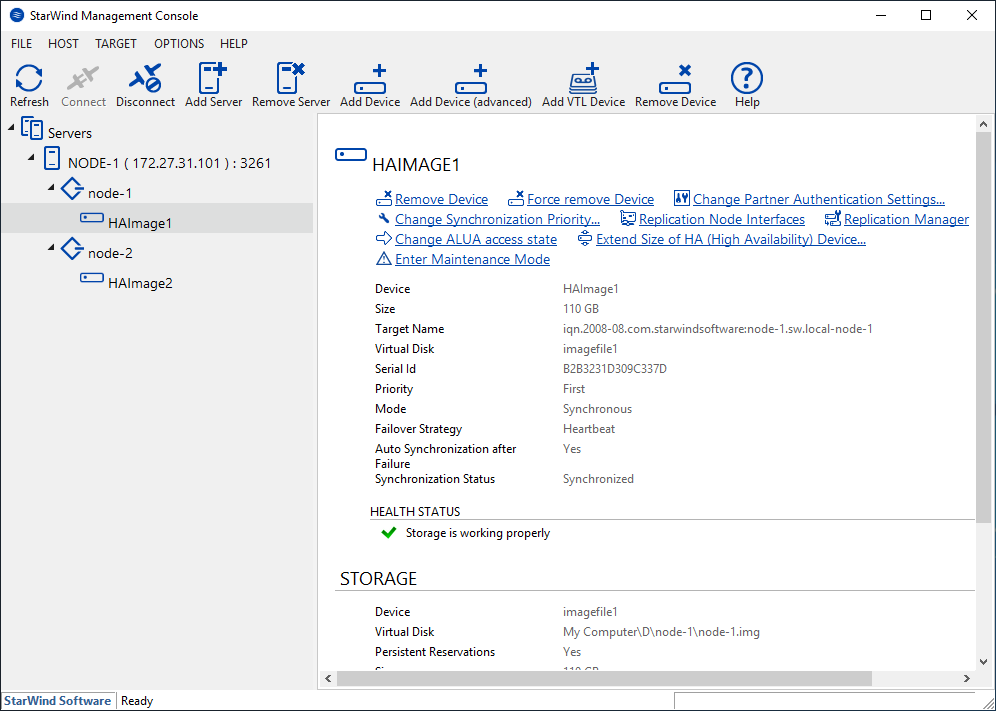

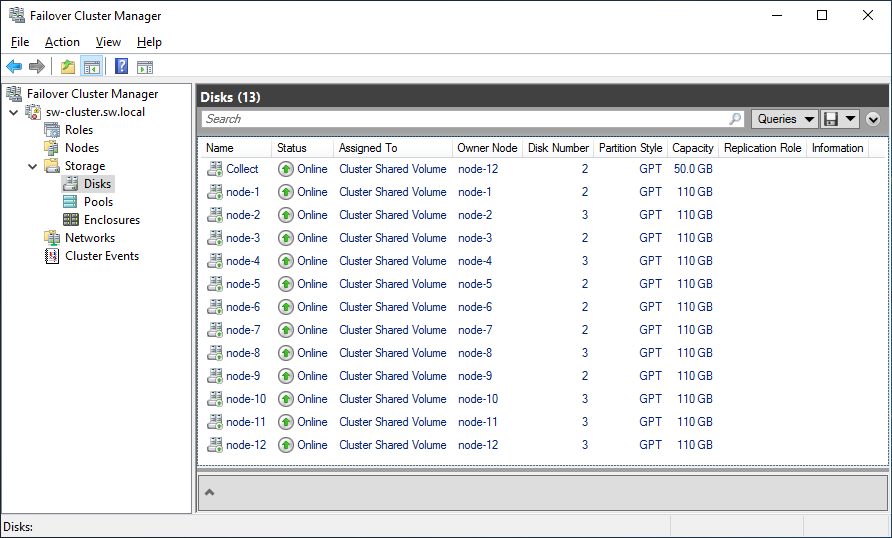

StarWind Virtual SAN. The current production-ready StarWind VSAN version (8.0.0.12996) was installed on each server. The entire cluster had 5.4TB of usable capacity out of 5.7TB of RAW capacity. Microsoft recommends creating at least one Cluster Shared Volume per server node, so for 12 servers we created 12 volumes formatted with ReFS.

ReFS gave us better performance than we had seen with the NTFS file system.

Each volume had 110GB capacity and used two-way mirror resiliency, with allocation limited to two servers. For this particular working set, we ended up with 1.32TB of total usable storage. All other settings, like columns and interleave, were default. To accurately measure IOPS to persistent storage only, the in-memory CSV read cache was disabled.
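The working-set figure follows directly from the volume layout. The short sketch below reproduces that arithmetic; the backend footprint it prints is our own derivation from the two-way mirror (two copies of each block), not a number reported in the tests, and the function names are illustrative.

```python
# Figures from the text: 12 Cluster Shared Volumes of 110 GB each.
VOLUME_COUNT = 12
VOLUME_SIZE_GB = 110
MIRROR_COPIES = 2  # assumption: a two-way mirror stores every block twice


def working_set_tb(volumes: int, size_gb: int) -> float:
    """Capacity exposed to the VMs, in decimal terabytes."""
    return volumes * size_gb / 1000


def backend_footprint_tb(volumes: int, size_gb: int, copies: int) -> float:
    """Physical space consumed once each volume is mirrored."""
    return working_set_tb(volumes, size_gb) * copies


print(working_set_tb(VOLUME_COUNT, VOLUME_SIZE_GB))                       # 1.32
print(backend_footprint_tb(VOLUME_COUNT, VOLUME_SIZE_GB, MIRROR_COPIES))  # 2.64
```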

StarWind iSCSI Accelerator (Load Balancer). We used the built-in Microsoft iSCSI Initiator together with our own user-mode iSCSI initiator. Microsoft iSCSI Initiator was developed in the “stone age”, when servers had one or two CPU sockets with a single core per socket. On today's far more powerful multi-core servers, the Initiator does not work as it should.

So, we developed iSCSI Accelerator as a filter driver between Microsoft iSCSI Initiator and the network stack. Every time a new iSCSI session is created, it is assigned to a free CPU core. As a result, all CPU cores are utilized uniformly, and latency approaches zero. Distributing workloads this way ensures smart compute resource utilization: no cores are overwhelmed while others sit idle.
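To illustrate the idea of handing each new session to a free core, here is a minimal sketch. It is not the filter driver's actual logic: the least-loaded selection rule, the tie-break on the lowest core index, and the name `assign_session` are all assumptions made for the example.

```python
from collections import Counter


def assign_session(core_load: Counter, core_count: int) -> int:
    """Place a new session on the core that currently serves the fewest.

    Hypothetical policy: ties are broken in favor of the lowest core index.
    """
    core = min(range(core_count), key=lambda c: (core_load[c], c))
    core_load[core] += 1
    return core


load = Counter()
placement = [assign_session(load, 4) for _ in range(6)]
print(placement)  # [0, 1, 2, 3, 0, 1]
```

With this rule, no core receives a second session until every core has one, which is the uniform distribution the paragraph above describes.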

StarWind iSCSI Accelerator (Load Balancer) was installed on each cluster node in order to balance virtualized workloads between all CPU cores in Hyper-V servers.

StarWind Loopback Accelerator. As a part of StarWind Virtual SAN, StarWind Loopback Accelerator was installed and configured to significantly decrease latency and CPU load when Microsoft iSCSI Initiator connects to StarWind iSCSI Target over the loopback interface. It uses zero-copy memory in loopback mode, so most of the TCP stack is bypassed.

NOTE: Due to the fast path provided by StarWind Loopback Accelerator, each iSCSI LUN had 2 loopback iSCSI sessions and 2 external partner iSCSI sessions. The Least Queue Depth (LQD) MPIO policy was set. This policy maximizes bandwidth utilization by automatically using the active/optimized path with the smallest current outstanding queue.
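The Least Queue Depth rule can be sketched in a few lines. This models the policy as described, not the Windows MPIO implementation; the `Path` class, the path names, and the choice to mark the loopback sessions as active/optimized are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Path:
    name: str
    optimized: bool       # assumption: loopback sessions are the optimized paths
    outstanding: int = 0  # IOs currently in flight on this path


def pick_path(paths):
    """Choose the active/optimized path with the shortest outstanding queue.

    Falls back to all paths if none is marked optimized.
    """
    candidates = [p for p in paths if p.optimized] or list(paths)
    return min(candidates, key=lambda p: p.outstanding)


paths = [
    Path("loopback-1", True, 5),
    Path("loopback-2", True, 2),
    Path("partner-1", False, 0),
    Path("partner-2", False, 1),
]
chosen = pick_path(paths)
chosen.outstanding += 1  # the new IO is now in flight on the chosen path
print(chosen.name)  # loopback-2
```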

Block iSCSI/iSER (RDMA). Like StarWind HyperConverged Appliances, the 12-node HCI cluster features Mellanox NICs and switches. In this study, StarWind Virtual SAN used iSER (RDMA) for the backbone links, delivering the maximum possible performance.

NOTE: Windows Server 2019 doesn’t support iSER (RDMA) yet. Without RDMA across the whole data path, the CPU comes under extra pressure, which limits performance. To avoid the local Windows TCP/IP stack overhead, StarWind’s built-in user-land iSER initiator handles data and metadata synchronization and acknowledges “guest” writes over iSER (RDMA). The latency of in-memory data copy operations is higher than we expected, so the 4K IO results are lower than what the storage itself can deliver.

As a result, the IO performance was accelerated with a combination of RDMA, DMA in loopback, and TCP connections.

NUMA node. Taking into account the NUMA node configuration of each cluster node, each virtual disk was replicated between two servers through a network adapter located on the same NUMA node as the disk itself. For example, on cluster node 3, the virtual disk was created on an Intel Optane SSD located on NUMA node 1, so the Mellanox ConnectX-5 100GbE NIC located on NUMA node 1 was used for disk mirroring.

For the 12-node hyperconverged cluster, 12 Cluster Shared Volumes were created on top of 12 synchronously mirrored StarWind virtual disks, according to Microsoft recommendations.

Hyper-V VMs. Through experimentation, we settled on 4 virtual machines x 24 virtual processors each = 48 virtual processors per cluster node to saturate performance. That’s 48 Hyper-V Gen 2 VMs in total across the 12 server nodes. Each VM runs Windows Server 2019 Standard and is assigned 8GiB of memory.

Write-Back Cache. Intel Optane NVMe SSDs are configured as the Write-Back caching device for each VM.

NOTE: NUMA spanning was disabled so that virtual machines always used memory local to their NUMA node and ran with optimal performance.

In virtualization and hyperconverged infrastructures, it’s common to judge solution performance by the number of storage input/output (I/O) operations per second, or “IOPS”: essentially, the number of reads or writes that virtual machines can perform. A single VM can generate a huge number of either random or sequential reads/writes. In real production environments, there are usually many VMs, which makes the IO fully randomized. 4 kB block-aligned IO is what Hyper-V virtual machines use, so it was our IO pattern of choice.

In general, hardware and software vendors often use this very type of pattern when it comes to performance. They basically measure the best possible performance under the worst circumstances.

In this article, we not only performed the same benchmarks as Microsoft while measuring Storage Spaces Direct performance but also carried out additional tests for other IO patterns that are commonly used in virtualization production environments.

VM Fleet. We used the open-source VM Fleet tool available on GitHub. VM Fleet makes it easy to orchestrate DISKSPD, the popular Windows micro-benchmark tool, in hundreds or thousands of Hyper-V virtual machines at once.

Let’s take a closer look at the DISKSPD settings. To saturate performance, we set 16 threads per file (-t16); following Intel Optane recommendations, this thread count was used across the storage IO tests. We reached the highest storage performance, the saturation point, at 32 outstanding IOs per thread (-o32). To disable hardware and software caching, we set unbuffered IO (-Sh). We specified -r for random workloads and -b4K for 4 kB block size. The read/write proportion was altered with the -w parameter.

Here’s how DISKSPD was started: .\diskspd.exe -b4K -t16 -o32 -w0 -Sh -L -r -d900 [...]
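As a reading aid, the sketch below assembles the DISKSPD flags discussed above from named parameters and computes how many IOs one target file keeps in flight. The helper names are hypothetical, the target portion of the command line is left out because it is elided in the text, and omitting -r for sequential runs relies on DISKSPD's default access pattern being sequential.

```python
def diskspd_args(block_kib, threads, outstanding, write_pct, duration_s,
                 random_io=True):
    """Build the DISKSPD flag list (hypothetical helper, target omitted)."""
    args = [
        f"-b{block_kib}K",   # block size in KiB
        f"-t{threads}",      # threads per target file
        f"-o{outstanding}",  # outstanding IOs per thread
        f"-w{write_pct}",    # percentage of writes
        "-Sh",               # disable software and hardware caching
        "-L",                # collect latency statistics
    ]
    if random_io:
        args.append("-r")    # random access; sequential is the default
    args.append(f"-d{duration_s}")  # test duration in seconds
    return args


def in_flight_per_target(threads, outstanding):
    """IOs kept in flight against one target file."""
    return threads * outstanding


print(" ".join(diskspd_args(4, 16, 32, 0, 900)))
# -b4K -t16 -o32 -w0 -Sh -L -r -d900
print(in_flight_per_target(16, 32))  # 512
```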

NOTE: All writes are cached first. That’s why we modified the VM Fleet scripts for benchmarking. We wanted VM Fleet to read IO performance from both cache and CSVFS.

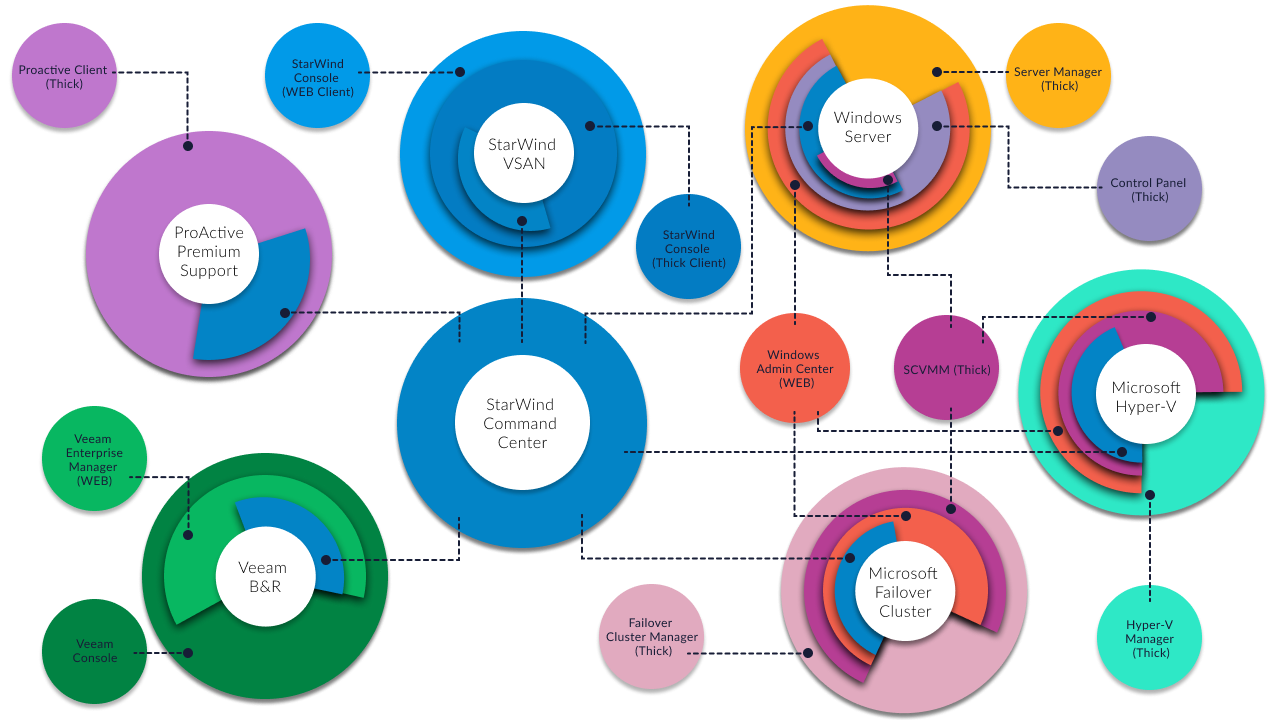

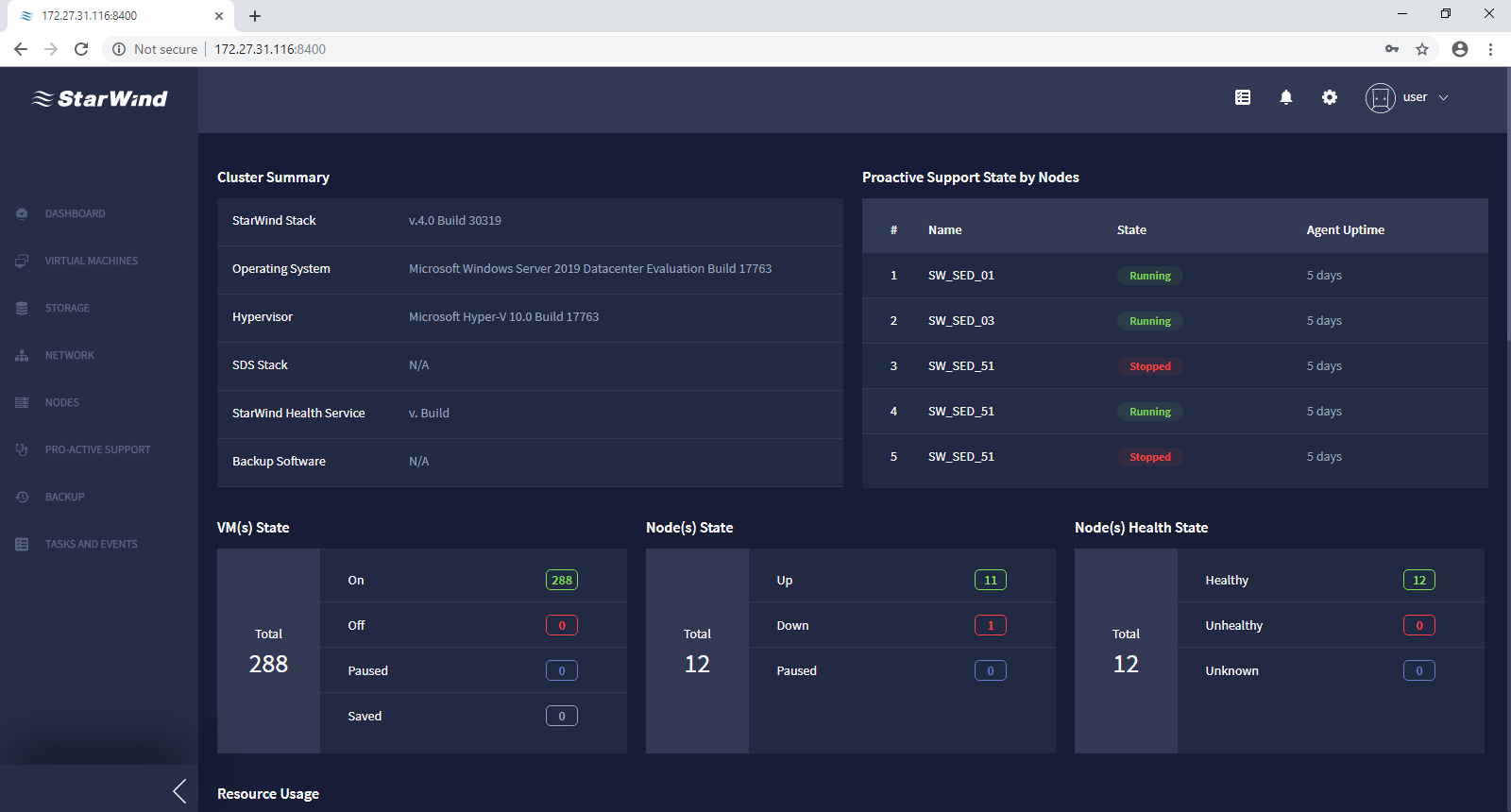

StarWind Command Center. Designed to take over routine tasks from the feature-poor Windows Admin Center and the bulky System Center Configuration Manager, StarWind Command Center consolidates sophisticated dashboards that cover all the important information about the state of each environment component on a single screen.

Being a single-pane-of-glass tool, StarWind Command Center allows solving the whole range of tasks related to managing and monitoring your IT infrastructure, applications, and services. As a part of StarWind ecosystem, StarWind Command Center allows managing a hypervisor (VMware vSphere, Microsoft Hyper-V, Red Hat KVM, etc.) and integrates with Veeam Backup & Replication and public cloud infrastructure. On top of that, the solution incorporates StarWind ProActive Premium Support that monitors the cluster 24/7, predicts failures, and reacts to them before things go south.

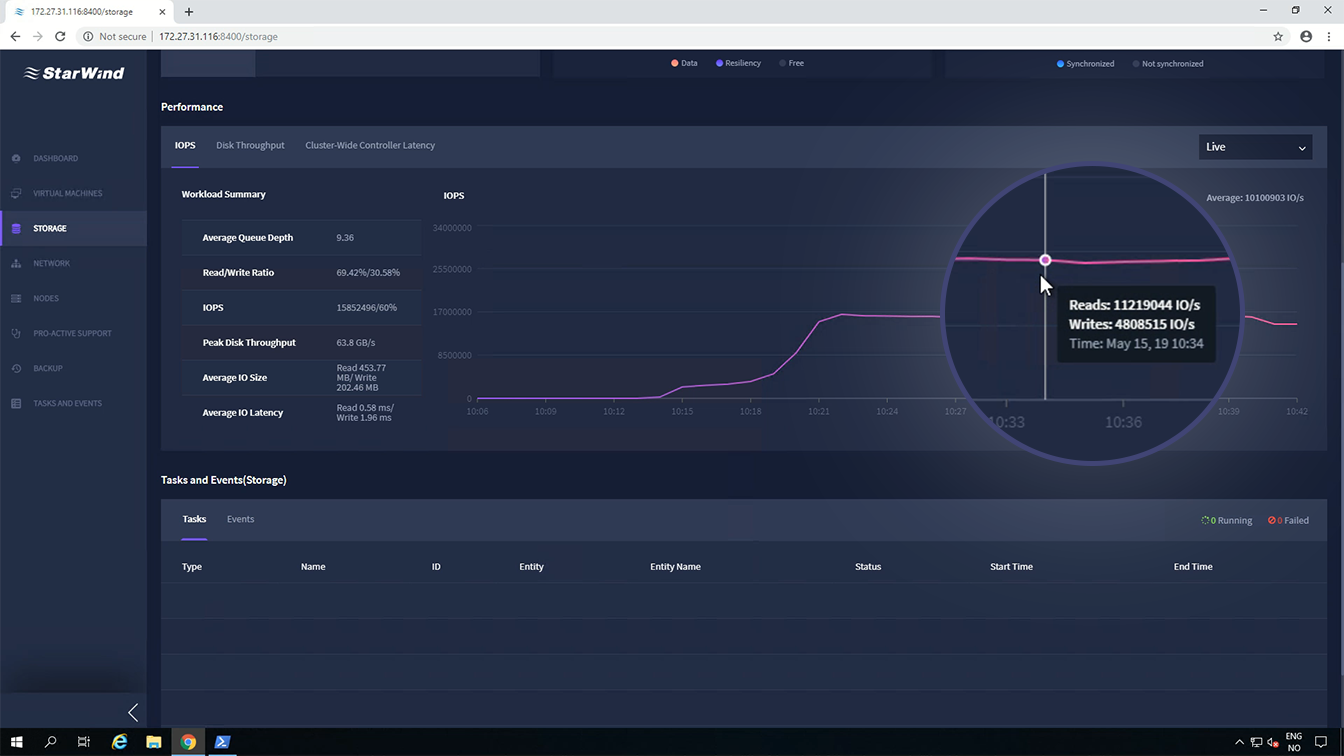

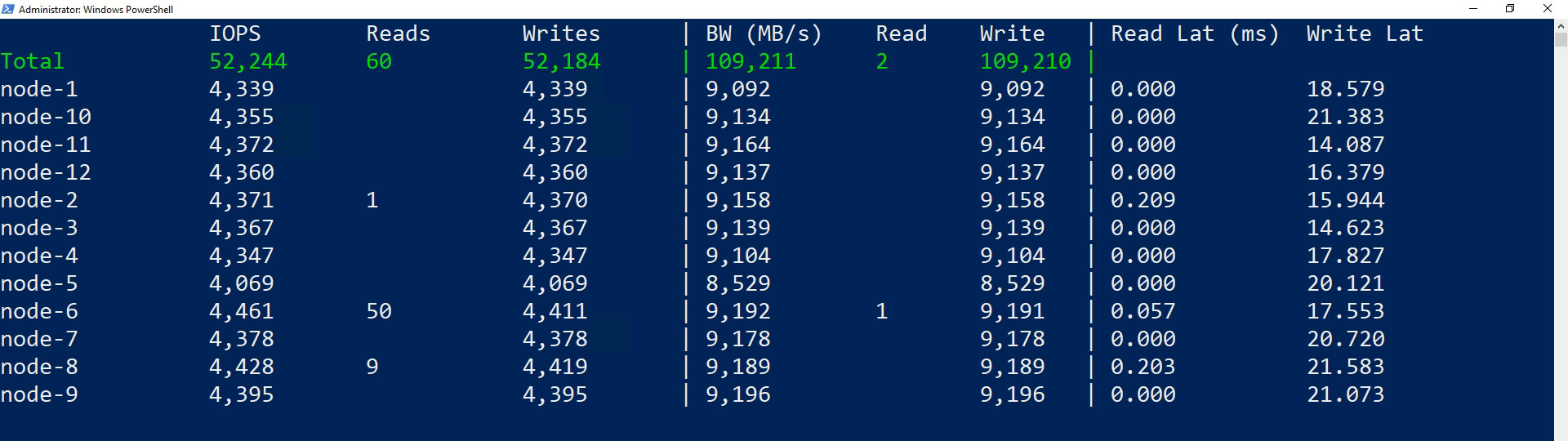

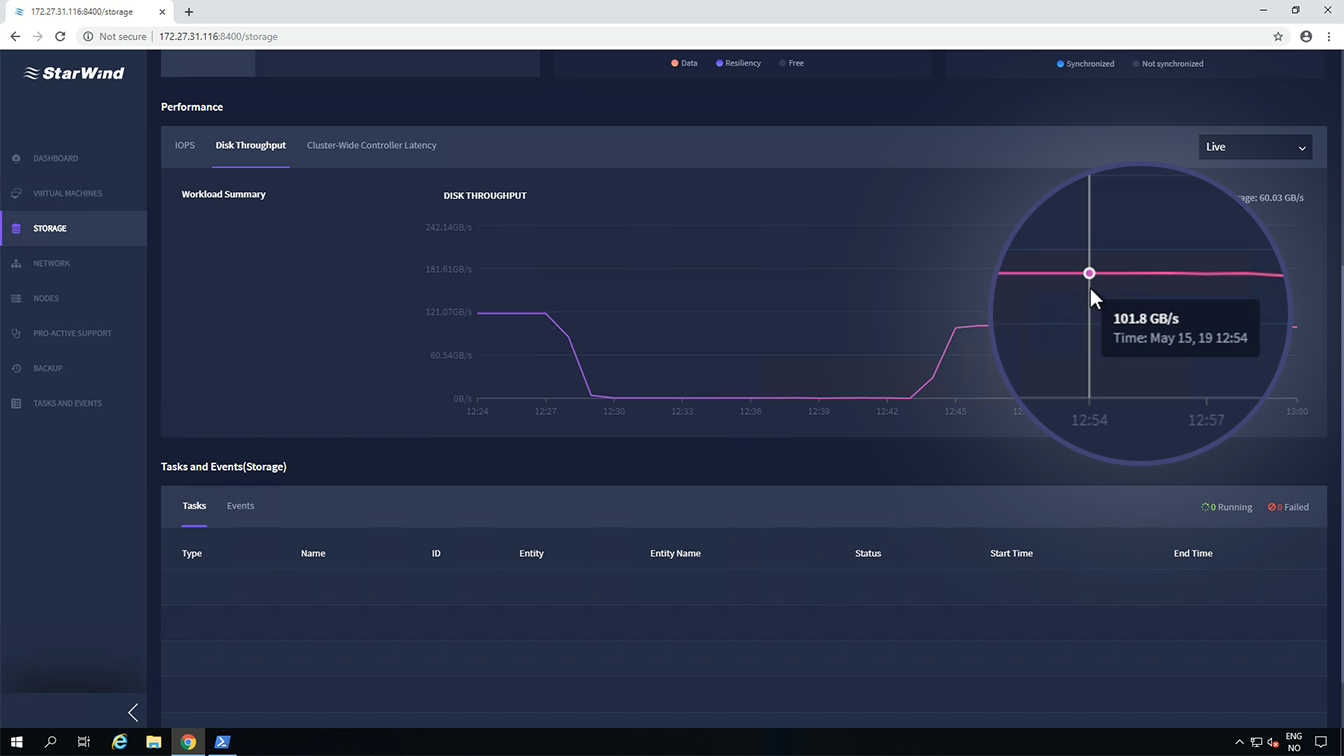

For instance, StarWind Command Center Storage Performance Dashboard features an interactive chart of cluster-wide aggregate IOPS measured at the CSV filesystem layer in Windows. More detailed reporting is available in the command-line output of DISKSPD and VM Fleet.

The other side of storage performance is latency: how long an IO takes to complete. Many storage systems perform better under heavy queuing, which helps to maximize parallelism and busy time at every layer of the stack. But there’s a tradeoff: queuing increases latency. For example, if you can do 100 IOPS with sub-millisecond delay, you may also be able to achieve 200 IOPS if you can tolerate higher delay. Latency is worth watching: sometimes the largest IOPS benchmarking numbers are only possible with delays that would otherwise be unacceptable.
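The queuing tradeoff can be made concrete with Little's Law, which relates the average number of IOs in flight to throughput and latency (in-flight = IOPS x latency). The numbers below are hypothetical and chosen only to show the shape of the tradeoff; they are not measurements from this cluster.

```python
def iops_at(outstanding_ios: float, latency_ms: float) -> float:
    """Little's Law rearranged: IOPS = IOs in flight / mean latency."""
    return outstanding_ios / (latency_ms / 1000)


def mean_latency_ms(outstanding_ios: float, iops: float) -> float:
    """Little's Law rearranged: mean latency = IOs in flight / IOPS."""
    return outstanding_ios / iops * 1000


# Hypothetical device: one IO in flight completes in 0.5 ms.
shallow = iops_at(1, 0.5)
# Same device under a deep queue: 32 IOs in flight, 4 ms each.
deep = iops_at(32, 4.0)

print(round(shallow))  # 2000
print(round(deep))     # 8000
```

In this made-up case, the deeper queue quadruples IOPS while each IO takes eight times longer, which is why the IOPS figure alone does not tell the whole story.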

Cluster-wide aggregate IO latency, as measured at the same layer in Windows, is plotted on the HCI Dashboard too.

Any storage system that provides fault tolerance makes distributed copies of writes, which must traverse the network and incur backend write amplification. For this reason, the largest IOPS benchmark numbers are typically achieved only with reads, especially if the storage system has common-sense optimizations to read from the local copy whenever possible, which StarWind Virtual SAN does.

NOTE: For transparency, we present the VM Fleet results and the StarWind Command Center results together with videos of the tests.

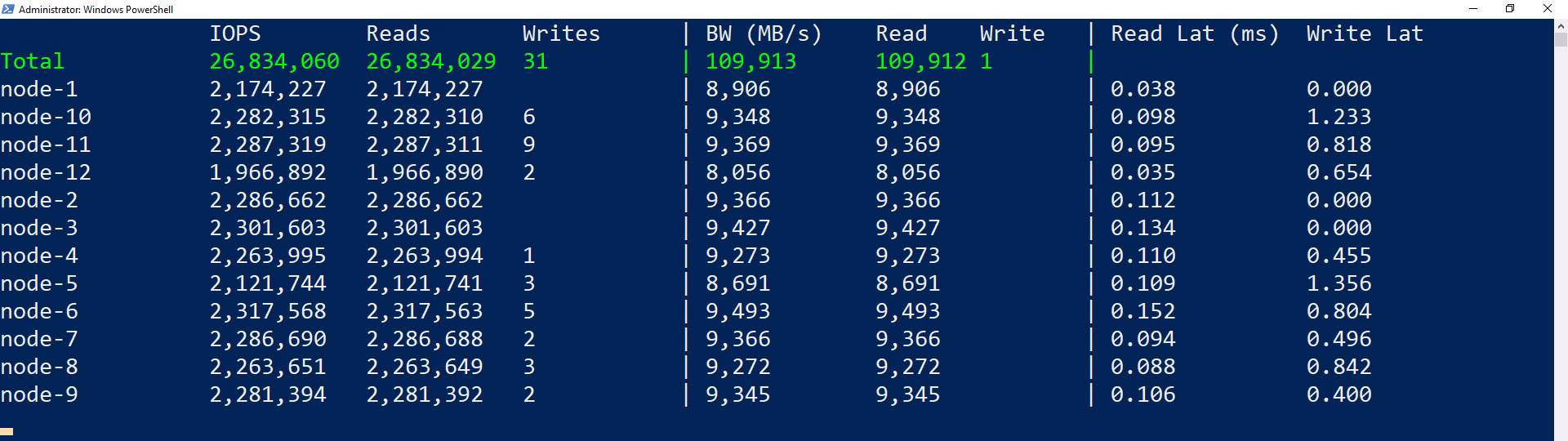

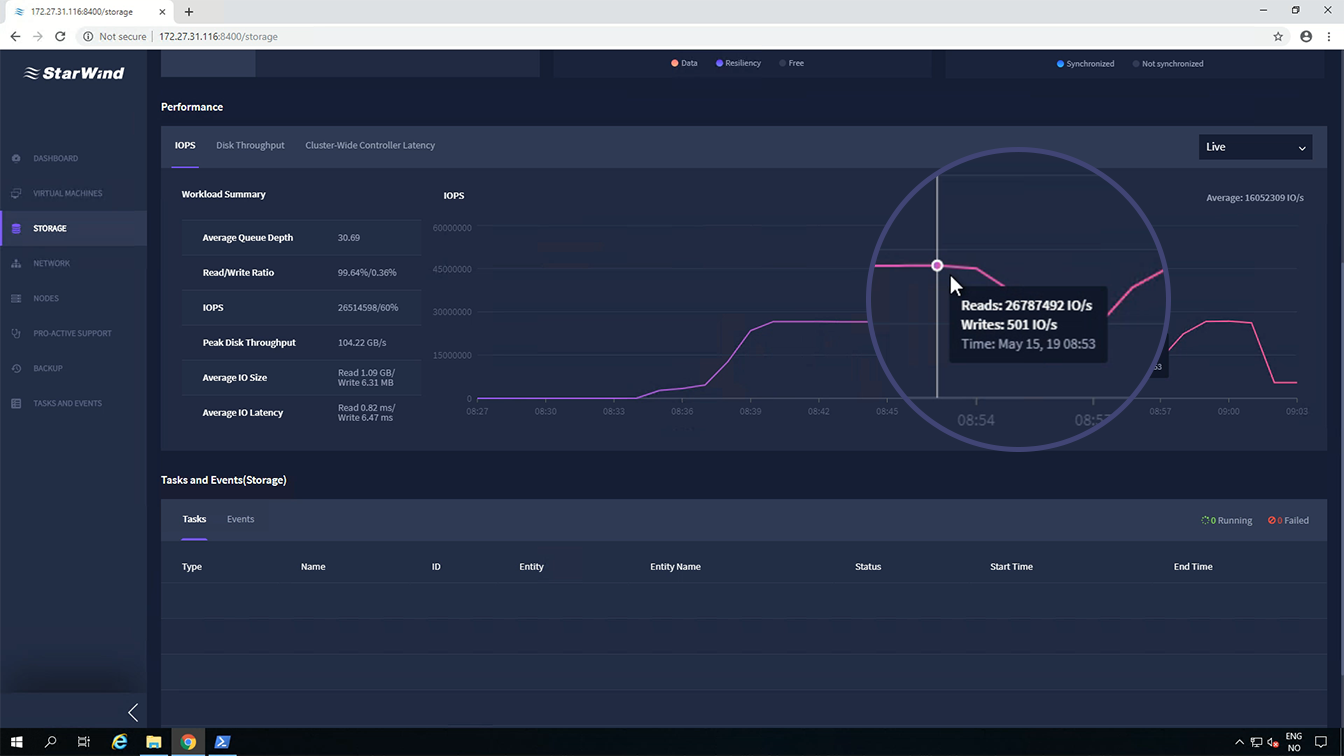

Action 1: 4K random read --> .\Start-Sweep.ps1 -b 4 -t 16 -o 32 -w 0 -d 1500 -p r

With 100% reads, the cluster delivers 26,834,060 IOPS. This is 101.5% of the theoretical 26,400,000 IOPS: each of the 12 nodes has four Intel Optane NVMe SSDs, each rated at 550,000 IOPS.
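The theoretical ceiling is simply nodes x drives per node x per-drive IOPS. The sketch below redoes that arithmetic for this stage and for the first stage, which had two Optane drives per node; the function names are ours, and the printed ratio may differ from the quoted percentage by a rounding step.

```python
NODES = 12
IOPS_PER_DRIVE = 550_000
MEASURED_IOPS = 26_834_060  # 4K random read result from this stage


def theoretical_iops(nodes: int, drives_per_node: int, per_drive: int) -> int:
    """Aggregate raw IOPS of all cache drives in the cluster."""
    return nodes * drives_per_node * per_drive


def efficiency(measured: float, theoretical: float) -> float:
    """Measured performance as a fraction of the theoretical ceiling."""
    return measured / theoretical


stage2 = theoretical_iops(NODES, 4, IOPS_PER_DRIVE)
stage1 = theoretical_iops(NODES, 2, IOPS_PER_DRIVE)

print(f"{stage2:,}")  # 26,400,000
print(f"{stage1:,}")  # 13,200,000
print(f"{efficiency(MEASURED_IOPS, stage2):.1%}")
```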

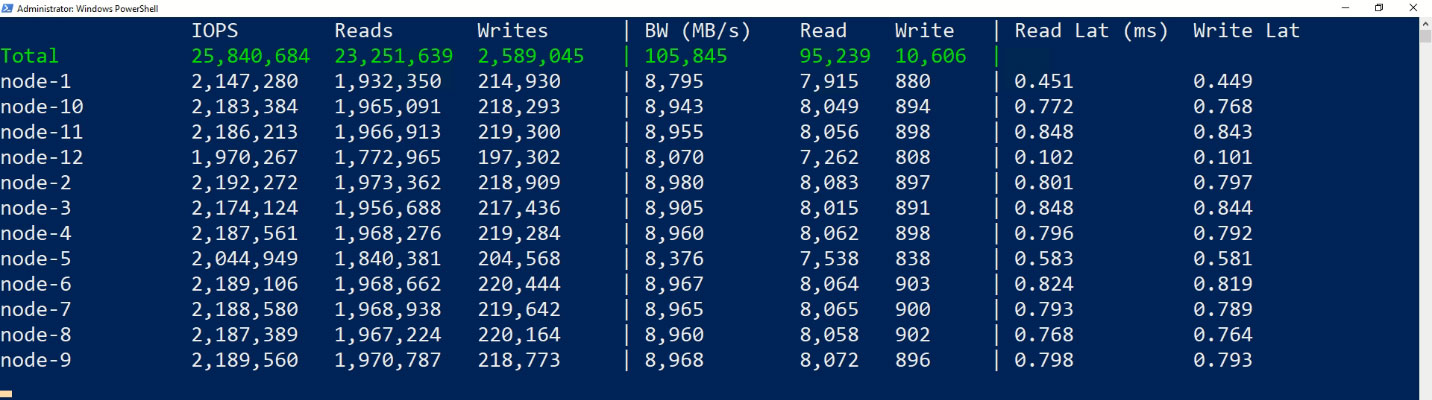

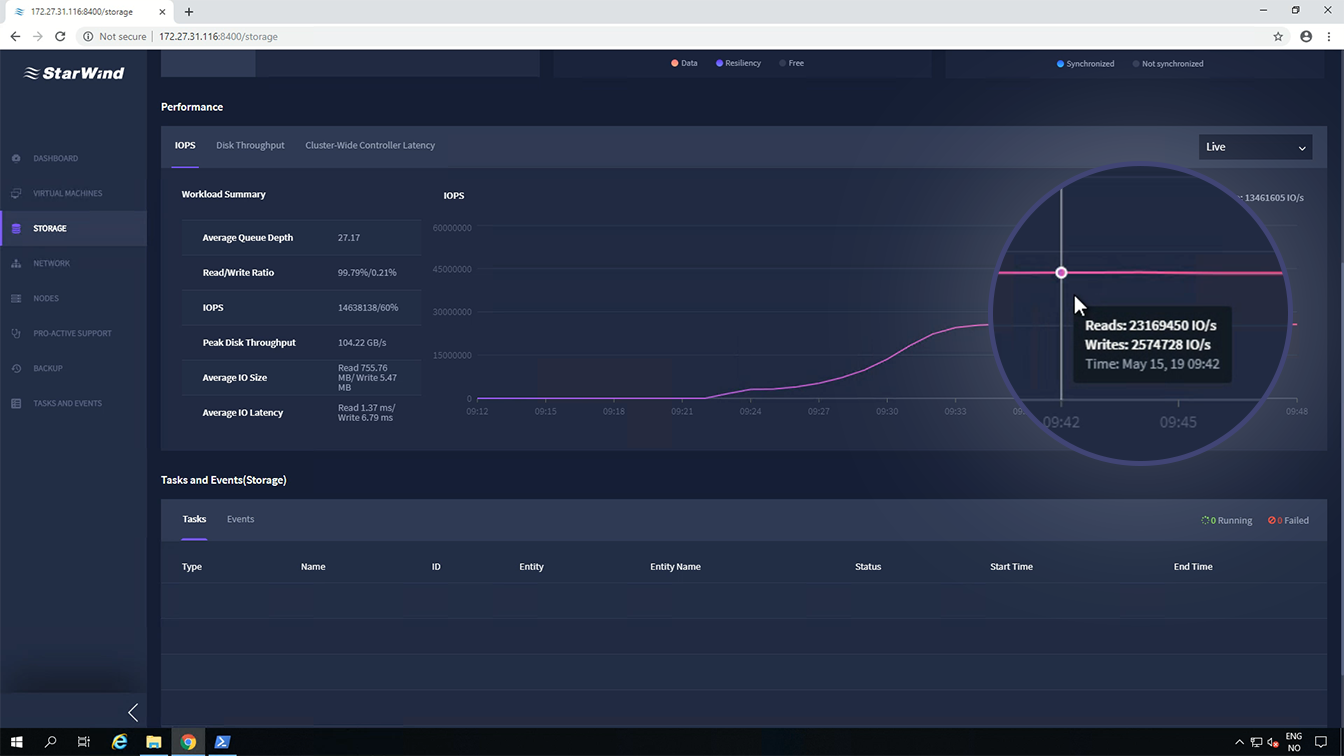

Action 2: 4K random read/write 90/10 --> .\Start-Sweep.ps1 -b 4 -t 16 -o 32 -w 10 -d 1500 -p r

With 90% random reads and 10% writes, the cluster delivers 25,840,684 IOPS.

NOTE: We observed double writes since StarWind Virtual SAN synchronizes each virtual disk. This can be clearly seen in StarWind Command Center.
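A simplified model shows how mirrored writes inflate backend load: every read is served once, while every write lands on both copies. This is a rough sketch under that single assumption; it ignores cache destaging and metadata traffic, and its output is an estimate, not a measured value.

```python
def backend_ops(frontend_iops: float, write_pct: float, copies: int = 2) -> float:
    """Estimate backend operations per second for a mirrored volume.

    Assumption: reads hit one copy, writes hit every copy.
    """
    writes = frontend_iops * write_pct / 100
    reads = frontend_iops - writes
    return reads + copies * writes


# Frontend result of the 90/10 test reported above.
estimate = backend_ops(25_840_684, 10)
print(f"{estimate:,.0f} backend ops/s (estimate)")
```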

Action 3: 4K random read/write 70/30 --> .\Start-Sweep.ps1 -b 4 -t 16 -o 32 -w 30 -d 1500 -p r

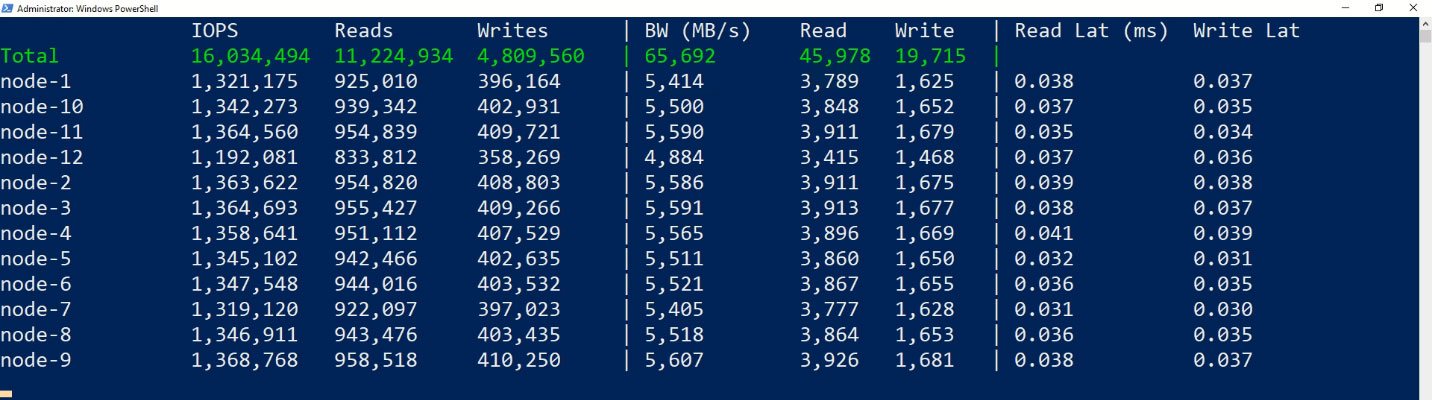

With 70% random reads and 30% writes, the cluster delivers 16,034,494 IOPS.

NOTE: Since StarWind Virtual SAN synchronizes each virtual disk, we have double writes, which can be seen in StarWind Command Center.

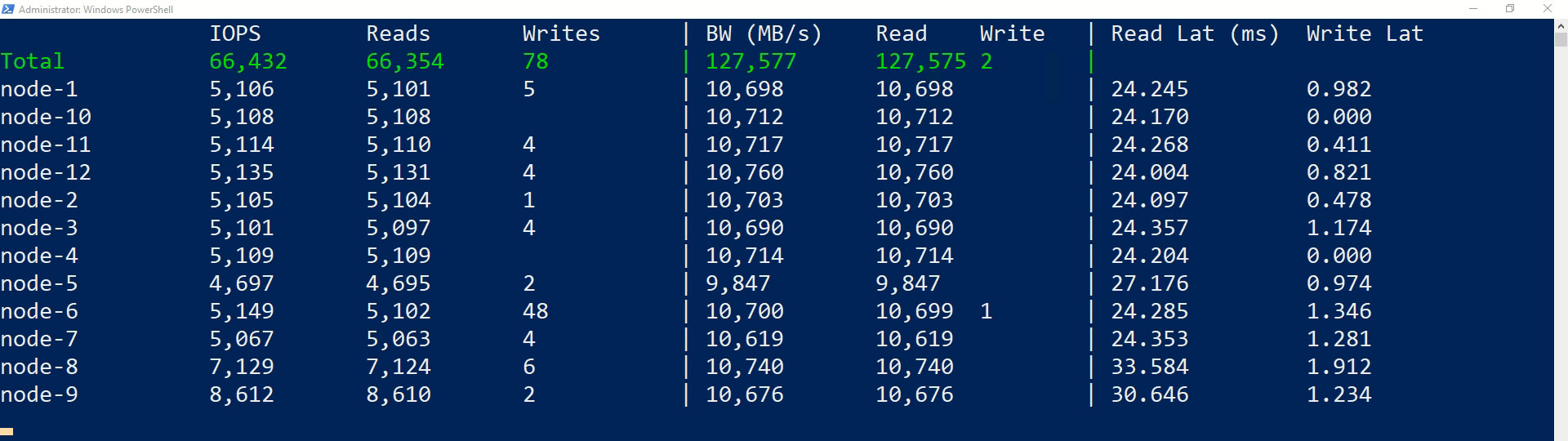

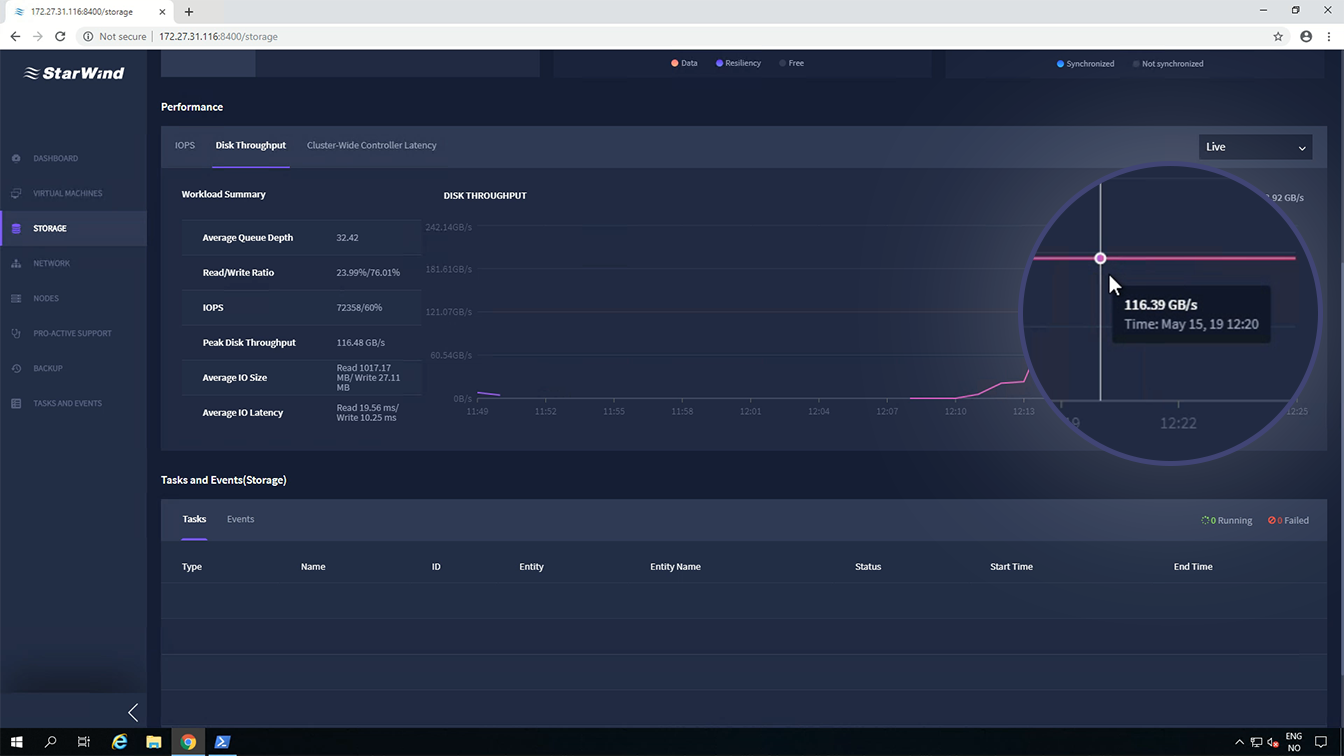

Action 4: 2M sequential read --> .\Start-Sweep.ps1 -b 2048 -t 4 -o 8 -w 0 -d 900 -p s

With 100% sequential reads, the cluster utilizes the available network throughput: 116.39GBps.

This is 103.5% of the theoretical 112.5GBps.
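To put the aggregate number in per-node terms, the sketch below divides the cluster throughput across the 12 nodes and converts it to gigabits per second. It assumes GBps means decimal gigabytes per second and that load is spread evenly across nodes; both are assumptions for the example.

```python
BITS_PER_BYTE = 8


def per_node_gbytes(cluster_gbytes_per_s: float, nodes: int) -> float:
    """Average throughput per node, assuming an even spread."""
    return cluster_gbytes_per_s / nodes


def to_gbits(gbytes_per_s: float) -> float:
    """Convert gigabytes per second to gigabits per second."""
    return gbytes_per_s * BITS_PER_BYTE


read = per_node_gbytes(116.39, 12)
print(f"{read:.2f} GB/s per node")            # 9.70 GB/s per node
print(f"{to_gbits(read):.1f} Gbit/s per node")  # 77.6 Gbit/s per node
print(f"{116.39 / 112.5:.1%}")                # 103.5%
```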

Action 5: 2M sequential write --> .\Start-Sweep.ps1 -b 2048 -t 4 -o 8 -w 100 -d 900 -p s

With 100% sequential writes, the cluster utilizes all the network throughput: 101.8GBps.

Here are all the results, with the same 12-server HCI cluster:

| Run | Parameters | Result |
|---|---|---|
| Maximize IOPS, all-read | 4 kB random, 100% read | 26,834,060 IOPS ¹ |
| Maximize IOPS, read/write | 4 kB random, 90% read, 10% write | 25,840,684 IOPS |
| Maximize IOPS, read/write | 4 kB random, 70% read, 30% write | 16,034,494 IOPS |
| Maximize throughput | 2 MB sequential, 100% read | 116.39GBps ² |
| Maximize throughput | 2 MB sequential, 100% write | 101.8GBps |

¹ 101.5% of the theoretical 26,400,000 IOPS

² 103.5% of the theoretical 112.5GBps

This article presents results of the second benchmarking stage. It highlights the performance of a 12-node production-ready StarWind HCA cluster where each server was powered with Intel® Xeon® Platinum 8268 processors, Intel® SSD D3-S4510 Series as primary storage and Intel® Optane™ SSD DC P4800X Series drives as dedicated cache, and Mellanox ConnectX-5 100 GbE NICs.

The 12-node all-flash NVMe cluster delivered 26.834 million IOPS, 101.5% of the theoretical 26.4 million IOPS.

This breakthrough performance for a production configuration became possible thanks to StarWind Virtual SAN serving as shared storage with write-back cache (iSCSI without RDMA is used for client access) and backbone connections running over iSER. In our environment, no proprietary technologies were used, meaning that similar performance can be obtained with any hypervisor using iSCSI initiators, StarWind Virtual SAN, and Intel Optane configured as a caching device.