vSphere Replication has proved to be a great bonus to any paid vSphere license. It is an amazing and simple tool that provides a cheap, semi-automated Disaster Recovery solution. Another great use case for vSphere Replication is the migration of virtual machines.

vSphere Replication 6.x came with plenty of new useful features:

- Network traffic compression to reduce replication time and bandwidth consumption

- Linux guest OS quiescing

- Increase in scalability – one VRA server can replicate up to 2000 virtual machines

- Replication traffic isolation – that is what we are going to talk about today.

The goal of traffic separation is to enhance network performance by ensuring the replication traffic does not impact other business-critical traffic. This can be done by using VDS Network I/O Control to set limits or shares for outgoing or incoming replication traffic. Another benefit of traffic isolation is security: sensitive replication traffic is no longer mixed with other traffic types.

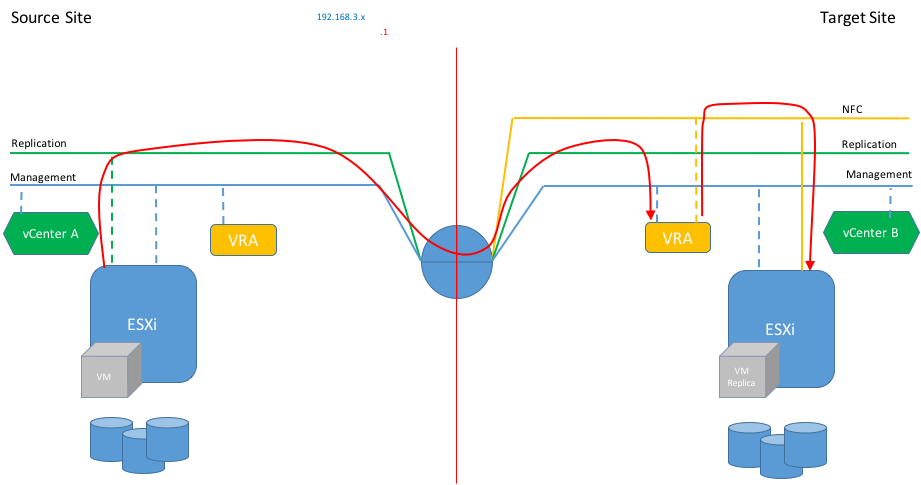

Traditional traffic flow

Prior to vSphere 6, replication traffic was sent and received using the management interfaces of the ESXi hosts and VRA appliances. The traditional traffic flow therefore looked like the diagram below:

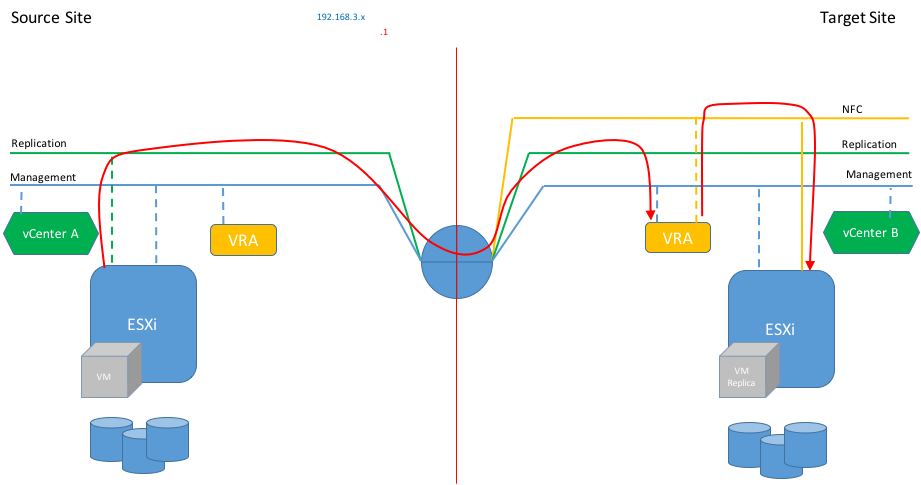

Replication Traffic Isolation

If you follow the official configuration guide, the isolation of replication traffic applies only to the path between the source ESXi host and the vSphere Replication appliance. The separation of NFC traffic is not covered in this scenario, so it will still use the management network.

Reading the vSphere Replication Admin guide, I noticed it also lacks the configuration steps for adding a static route, even though this is an essential step in isolating the replication traffic.
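To illustrate why the static route matters, here is a minimal sketch of a longest-prefix-match route lookup. It is not how ESXi implements routing internally, and every address and interface name in it is a hypothetical placeholder rather than the addressing from my lab. The assumption is the usual one: the host has a single default gateway on the management network, so anything that is not on a directly connected subnet leaves through the management VMkernel adapter until a more specific route exists.

```python
import ipaddress


def pick_route(routes, destination):
    """Return the (network, gateway, interface) entry chosen by longest-prefix match."""
    dest = ipaddress.ip_address(destination)
    candidates = [r for r in routes if dest in ipaddress.ip_network(r[0])]
    if not candidates:
        raise LookupError(f"no route to {destination}")
    # The most specific matching network (largest prefix length) wins.
    return max(candidates, key=lambda r: ipaddress.ip_network(r[0]).prefixlen)


# Hypothetical routing table of a source ESXi host (not the lab addressing).
routes = [
    ("0.0.0.0/0", "192.168.1.1", "vmk0"),  # default gateway on the management network
    ("10.10.10.0/24", None, "vmk1"),       # directly connected replication subnet
]
target_vra_replication_ip = "10.20.20.50"  # hypothetical VRA replication NIC

before = pick_route(routes, target_vra_replication_ip)

# Add the static route for the remote replication subnet.
routes.append(("10.20.20.0/24", "10.10.10.1", "vmk1"))
after = pick_route(routes, target_vra_replication_ip)

print("without static route:", before[2])
print("with static route:   ", after[2])
```

Without the extra entry the lookup falls back to the default route on the management interface, which is exactly the behaviour the static route is meant to prevent.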

I know many people hoped and tried to use TCP/IP stacks, as vSphere Replication makes a perfect use case for this feature, but so far TCP/IP stacks can be used only for vMotion and Provisioning traffic. Hopefully, the TCP/IP stack feature will get full functionality in the next release of vSphere.

You can see the resulting traffic flow in the following diagrams.

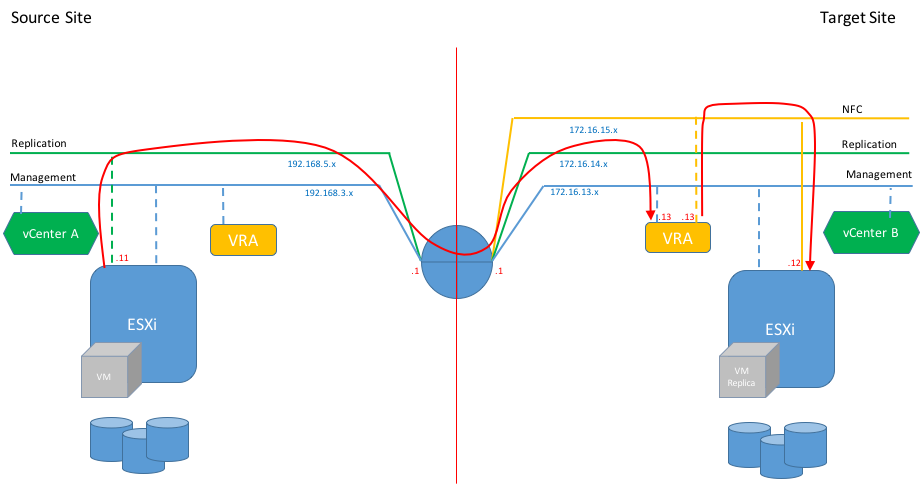

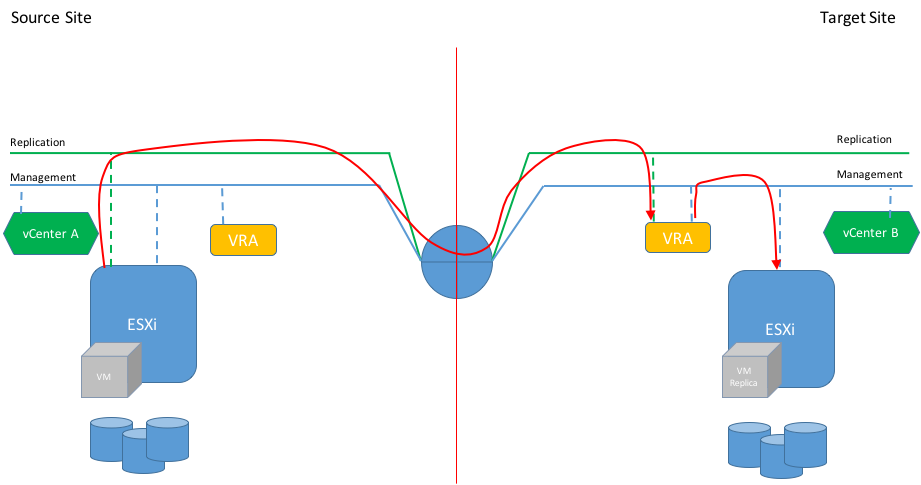

Full Isolation

Interestingly, the VMware documentation does not provide any instructions on how to isolate the NFC traffic from the VRA to the target datastore, although it is absolutely possible. By default, the NFC traffic is sent to the target ESXi host from the VRA management interface. So we have two options to isolate the NFC traffic from the management traffic:

- Send it using the Replication network. That means the VRA server will handle replication and NFC traffic on the same interface. Personally, I don’t like this option, as it mixes two types of traffic, which makes the configuration of Network I/O Control a bit more complicated.

- Add a third interface for NFC traffic only. That’s what we are going to configure and test.

The diagram below depicts the replication traffic flow when each traffic type is moved across its dedicated network.

Even though a VRA is deployed at the source site, it does not participate in the traffic flow. Its role is to establish the connection with the second site and coordinate replication activity. It will also be used in case of reverse replication. However, for the sake of simplicity, I drew only one-way replication in these diagrams.

The entire configuration of the network traffic isolation can be split into the following steps:

- Deploy the vSphere Replication Appliance at the source and target sites

- Register each VRA with its corresponding vCenter Single Sign-On

- Configure the Replication Connection between sites if using different SSO domains.

These first three tasks are well documented in the vSphere Replication Admin guide, so I will focus on the tasks related to the traffic isolation, the ones that are not covered comprehensively in the official documentation.

This diagram shows the IP addressing I used in my home lab to test the Replication & NFC traffic isolation.

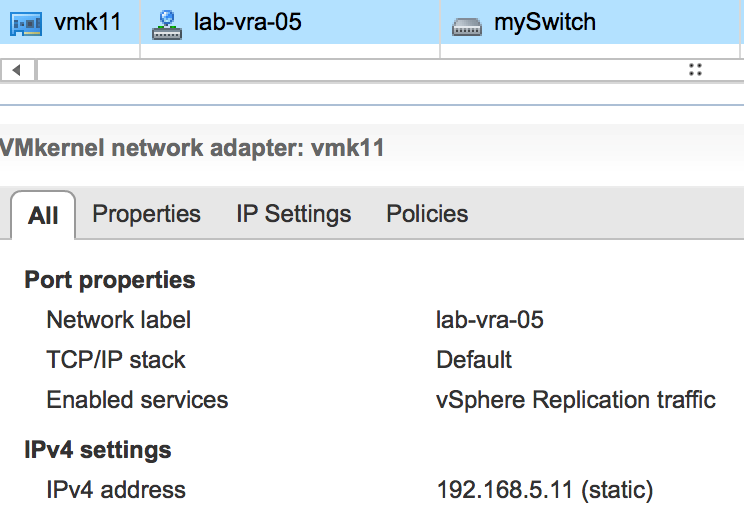

So let’s start with the configuration of the source ESXi host:

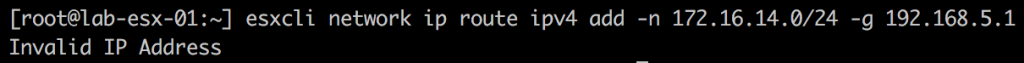

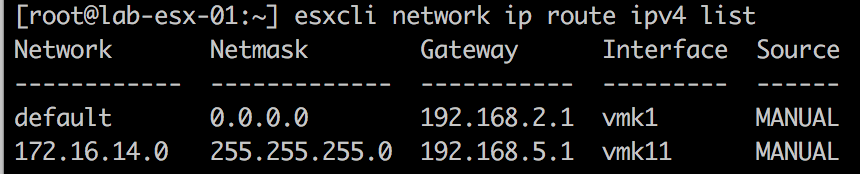

You can safely ignore the ‘Invalid IP Address’ message. The static route is added anyway, but it is still worth checking.

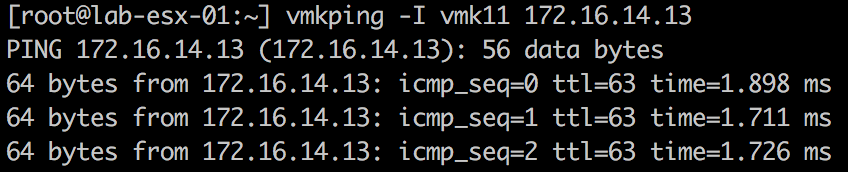

Note that we cannot yet use ‘vmkping’ to check the replication traffic flow, as the VRA has not been configured with a static route for the return traffic.

Obviously, you don’t want to configure every single host manually. Therefore, I would recommend taking advantage of Host Profiles to apply these changes to a large number of hosts. Alternatively, you can use PowerCLI, but if you still don’t use Host Profiles, you are making a huge mistake.
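If you would rather script the change, a small helper can at least generate the per-host commands consistently. The sketch below only builds command strings for `esxcli network ip route ipv4 add`. It does not connect to any host, and the host names, gateway and subnet are hypothetical placeholders, not values from my lab.

```python
import ipaddress


def esxcli_route_add(network: str, gateway: str) -> str:
    """Build the esxcli command that adds one IPv4 static route."""
    net = ipaddress.ip_network(network)  # rejects e.g. 10.20.20.5/24 (host bits set)
    gw = ipaddress.ip_address(gateway)   # rejects malformed addresses
    return f"esxcli network ip route ipv4 add --gateway {gw} --network {net}"


# Hypothetical values; replace them with your own addressing.
REMOTE_REPLICATION_SUBNET = "10.20.20.0/24"
hosts = {
    "esxi01.lab.local": "10.10.10.1",
    "esxi02.lab.local": "10.10.10.1",
}

commands = {
    host: esxcli_route_add(REMOTE_REPLICATION_SUBNET, gateway)
    for host, gateway in hosts.items()
}
for host, command in commands.items():
    print(f"{host}: {command}")
```

After running a generated command on a host, `esxcli network ip route ipv4 list` shows whether the route is in place.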

Now it is time to proceed with the configuration of the VRA at the target site:

- Shut down the appliance

- Take a snapshot. It will save you the time of redeploying the VRA if something goes wrong.

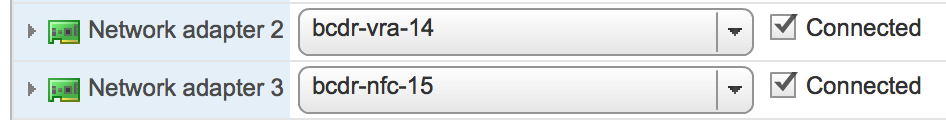

- Add two more virtual NICs and place them into the correct portgroups

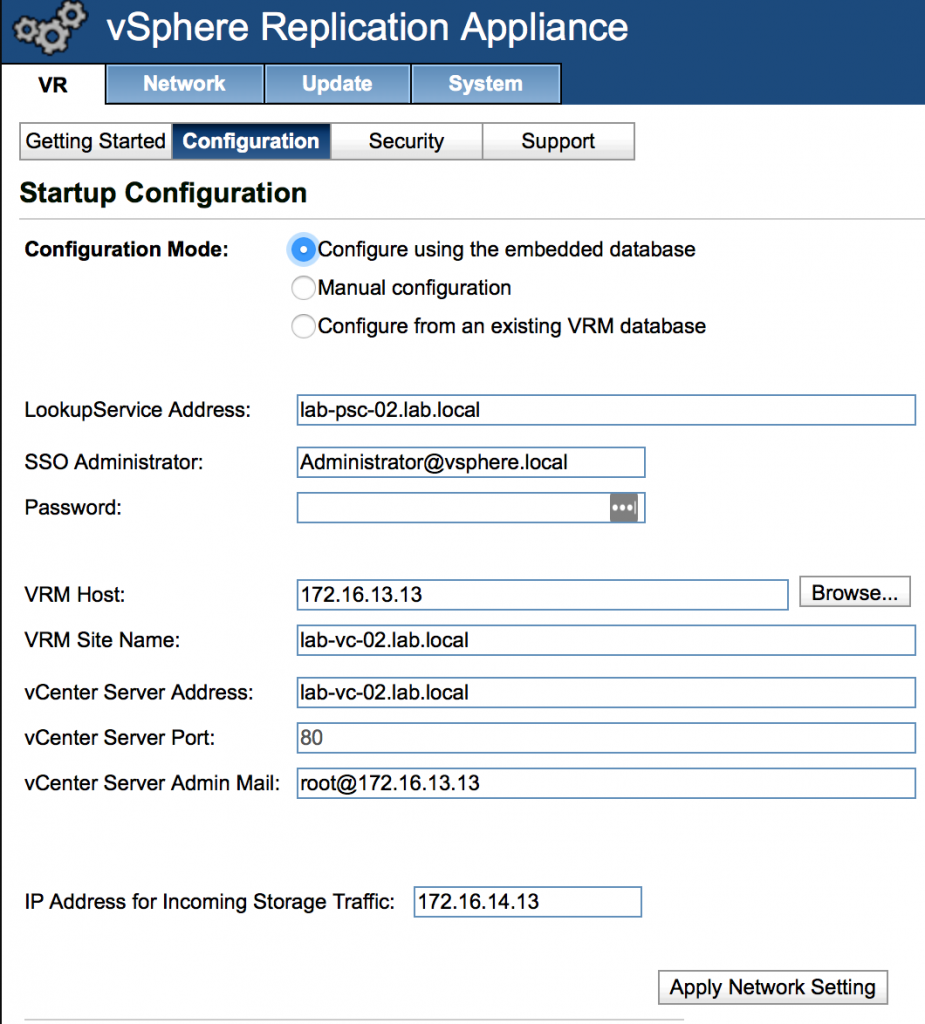

- Power on the VRA and log in to vra:5480

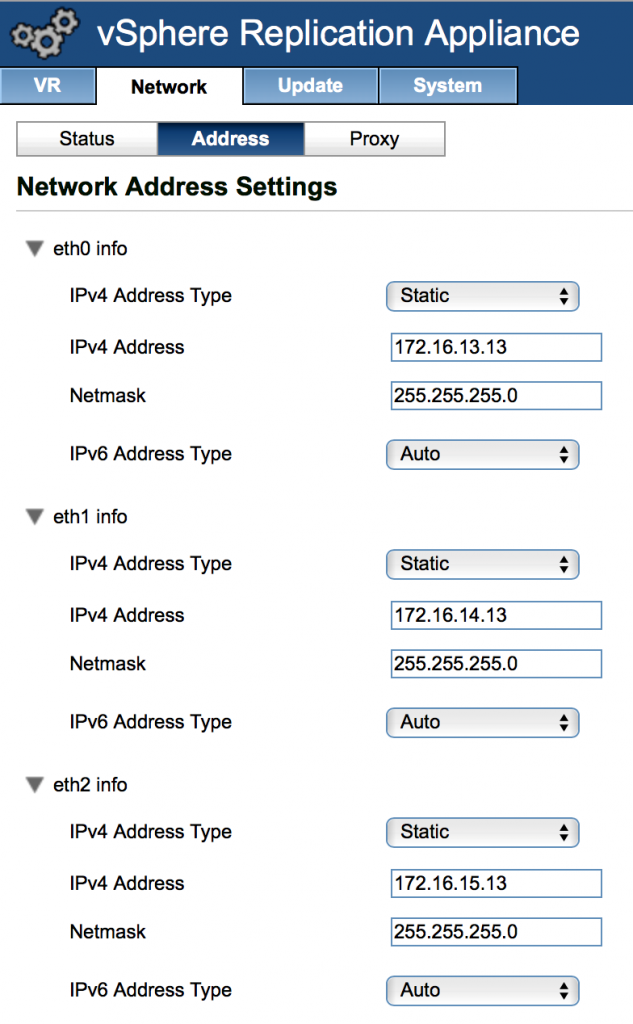

- Configure IP addresses for the newly added virtual NICs under the ‘Network’ tab and press the ‘Save Settings’ button

- Specify the IP address to be used for incoming storage traffic sent from the Replication VMkernel adapter of the source ESXi host

- Get access to the VRA console. If you want to use SSH, you will have to enable and configure it first as per this KB: https://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=2112307

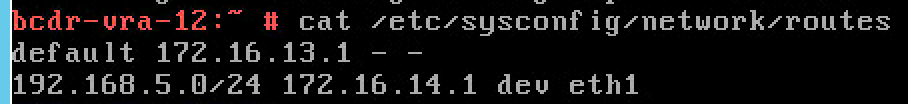

- Add a static route for the replication subnet at the source site.

To do that, you will need to edit the /etc/sysconfig/network/routes file using the following format:

`X.X.X.X/X via X.X.X.X dev interface`
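To avoid typos when filling in that template, the line can be assembled and sanity-checked before it goes into the file. This is only a sketch: it reproduces the format shown above, and the subnet, gateway and interface name used in the example are hypothetical placeholders rather than the values from my lab.

```python
import ipaddress


def route_line(network: str, gateway: str, interface: str) -> str:
    """Format one route entry as 'X.X.X.X/X via X.X.X.X dev interface'."""
    net = ipaddress.ip_network(network)  # raises ValueError on a malformed subnet
    gw = ipaddress.ip_address(gateway)   # raises ValueError on a malformed address
    if not interface.strip():
        raise ValueError("interface name must not be empty")
    return f"{net} via {gw} dev {interface.strip()}"


# Hypothetical example: source replication subnet reached through the
# gateway on the VRA's replication NIC.
line = route_line("10.10.10.0/24", "10.20.20.1", "eth1")
print(line)
```

A mistyped subnet such as 10.10.10.5/24 is rejected with a `ValueError` instead of silently ending up in the routes file.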

Make sure the file is saved correctly:

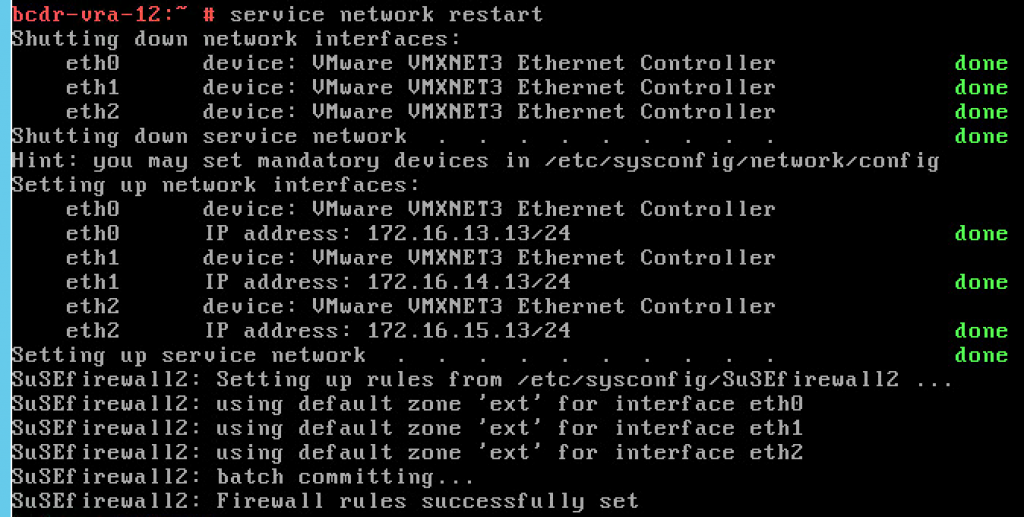

Then restart the networking service:

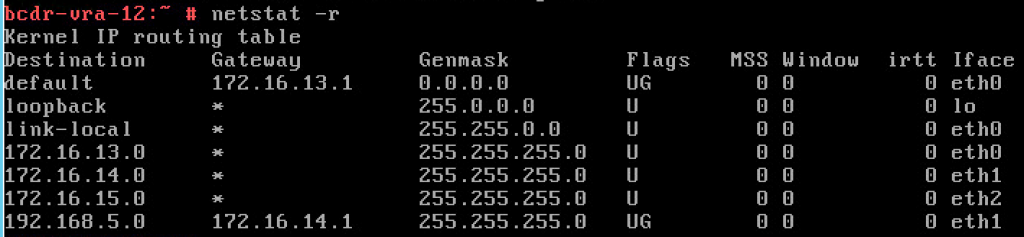

Now you can check the routing table to make sure the new route is present there.

At this point you can go back to the SSH session on the source ESXi host and test static routing between the replication subnets using vmkping.

The final step is the configuration of the NFC Replication VMkernel adapter on the target ESXi host.

If you have done everything correctly, you should be able to configure and run a test replication. That will give you the chance to confirm that the traffic flows over the right routes.

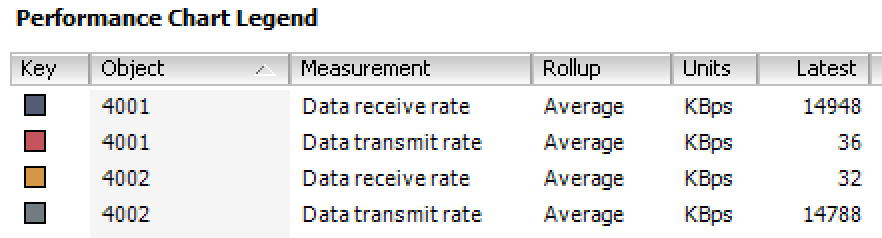

In my case, I simply monitored inbound and outbound traffic on the two additional virtual NICs of the VRA at the target site.

As you remember, the first NIC was designated for the replication traffic and the second one for NFC.

As you can see in the screenshot, the data receive rate on the Replication vNIC is equal to the data transmit rate on the NFC vNIC of the VRA server. This confirms that we achieved our goal of complete replication traffic isolation.
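If you export the counters instead of eyeballing a chart, the same comparison can be written down as a small check. This is just a sketch: the 5% tolerance and the sample numbers are arbitrary assumptions of mine, not measurements from the lab.

```python
def rates_match(receive_kbps: float, transmit_kbps: float, tolerance: float = 0.05) -> bool:
    """True when the two rates differ by no more than `tolerance` (relative difference)."""
    reference = max(receive_kbps, transmit_kbps)
    if reference == 0:
        return False  # no traffic at all proves nothing about the flow
    return abs(receive_kbps - transmit_kbps) / reference <= tolerance


# Hypothetical samples: receive rate on the Replication vNIC versus
# transmit rate on the NFC vNIC of the target VRA.
print(rates_match(1000.0, 980.0))  # rates within tolerance
print(rates_match(1000.0, 100.0))  # most of the traffic leaves elsewhere
```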

As you can see, it is a pretty simple configuration as long as you fully understand how the traffic is split across different networks. It is a real pity, though, that VMware has not fully enabled TCP/IP stacks to make this process simpler and more user-friendly.