Introduction

This series of articles describes VSAN from StarWind for vSphere. In the first article, I talked about what VSAN from StarWind for vSphere is and how to set it up on hardware RAID. VSAN from StarWind for vSphere is not just a simple Windows VM but a ready-to-go Linux-based VM. With StarWind VSAN for vSphere, deploying VMs, provisioning storage, connecting it, and creating highly available VMs becomes as easy as ABC.

The second part of this series focuses on software RAID configuration recommendations. In this blog post, you will find all the necessary information on working with StarWind VSAN for vSphere and mdadm.

MDADM (MDRAID)

IT environments are often planned without a hardware RAID controller. There are several software RAID options to choose from (e.g. ZFS, Btrfs), but here we are going to talk about MDADM.

What’s mdadm?

MDADM is a standard tool for managing RAID arrays, included in almost any Linux distribution. It has 7 modes of operation, but only a few of them are commonly used in real life, namely Create, Assemble, and Monitor. MDADM supports all RAID levels recommended by StarWind; you can find the recommendations at the following link: https://knowledgebase.starwindsoftware.com/guidance/recommended-raid-settings-for-hdd-and-ssd-disks/
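To make the three common modes more concrete, here is a minimal sketch of how each one is typically invoked. It is an illustration, not StarWind's procedure: the array name /dev/md0 and the member disks /dev/sdb to /dev/sde are placeholder names I picked, and the script only prints the commands unless DRY_RUN=0 is set, because Create overwrites the member disks.

```shell
#!/bin/sh
# Illustration of the three commonly used mdadm modes.
# ASSUMPTION: /dev/md0 and /dev/sdb../dev/sde are placeholder names.
# By default nothing is executed; the commands are only printed.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Create: build a new array from raw disks (destroys data on them).
md_create() {
    run mdadm --create /dev/md0 --level=10 --raid-devices=4 \
        /dev/sdb /dev/sdc /dev/sdd /dev/sde
}

# Assemble: bring up previously created arrays found by scanning.
md_assemble() {
    run mdadm --assemble --scan
}

# Monitor: watch all arrays and report events to syslog
# (stays in the foreground when actually executed).
md_monitor() {
    run mdadm --monitor --scan --syslog
}

md_create
md_assemble
md_monitor
```

Running the script as is just echoes the three command lines, which is a safe way to review them before trying anything on real disks.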

Configuration steps

To configure software RAID inside a StarWind VSAN VM, the HBA or RAID controller must be passed directly to the VM. Let’s do that 😊

A detailed configuration guide can be found here: https://www.starwindsoftware.com/resource-library/starwind-virtual-san-for-vsphere-linux-software-raid-configuration-guide

You should have StarWind VSAN for vSphere deployed on each ESXi host you are planning to use. If you have not done that yet, follow this guide first: https://www.starwindsoftware.com/resource-library/starwind-virtual-san-for-vsphere-installation-and-configuration-guide

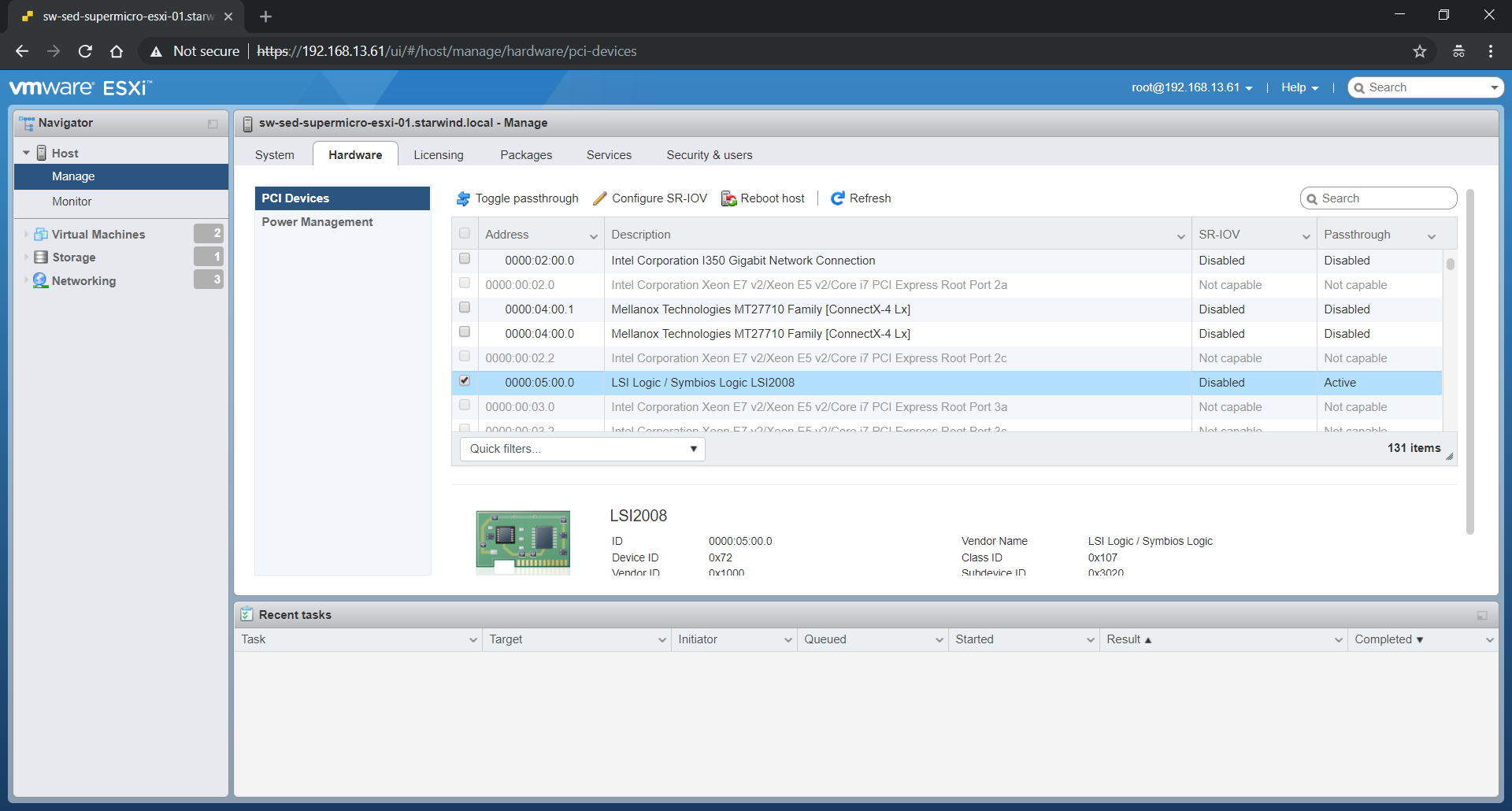

Now we need to pass the PCIe device through to the VM. This can be done either directly from the ESXi host or from the vSphere client. On the Manage page of the host, the Hardware tab lists your HBA or RAID controller (as is the case in this article).

As you can see, the LSI Logic device is already Active for passthrough. To achieve this, find the required device and click Toggle passthrough. Reboot the host afterward.

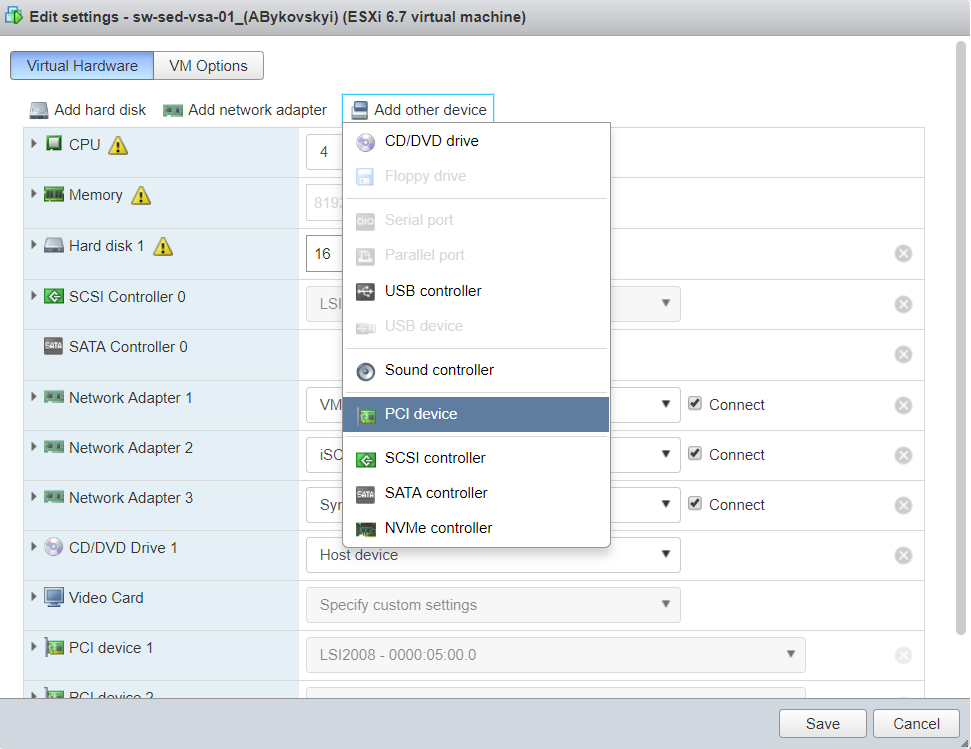

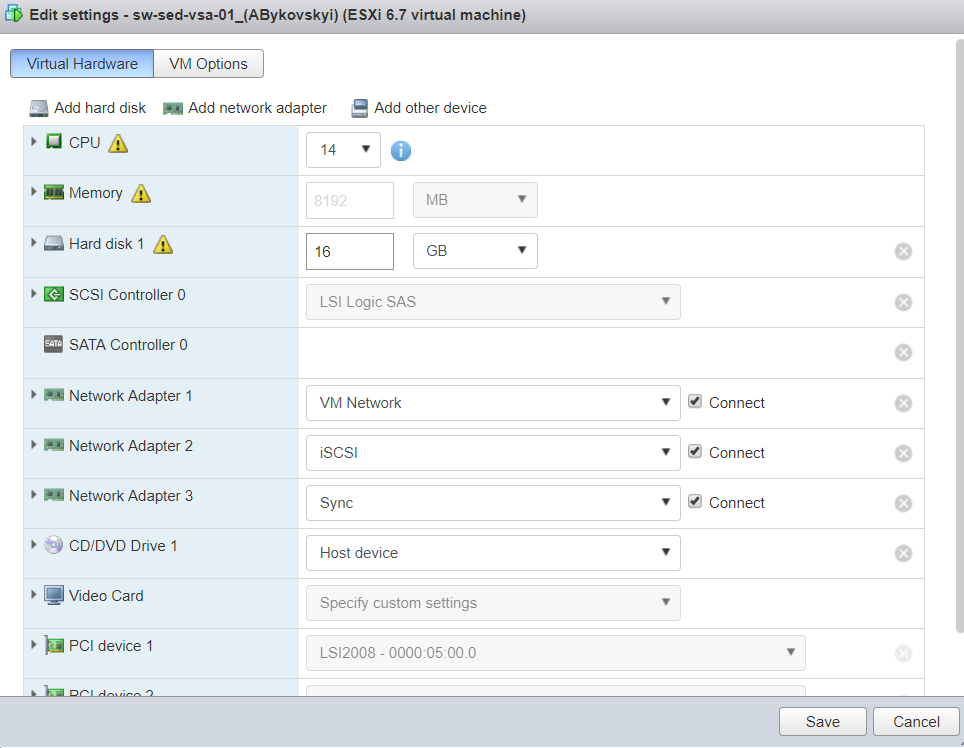

After the reboot, edit the settings of the StarWind VM to add the PCIe device.

Do not forget to check that the proper device was added and that RAM is reserved by the VM (PCI passthrough requires the VM's memory to be fully reserved).

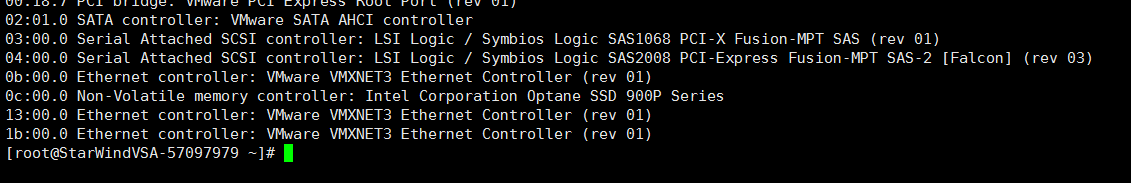

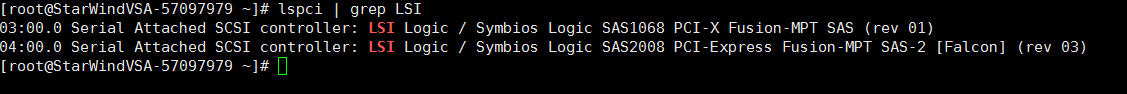

Connect to the VM using SSH. Use the “lspci” command to list all PCI devices and find the HBA that was added.

Since I have an LSI controller in my lab, the “lspci | grep LSI” command can be used.

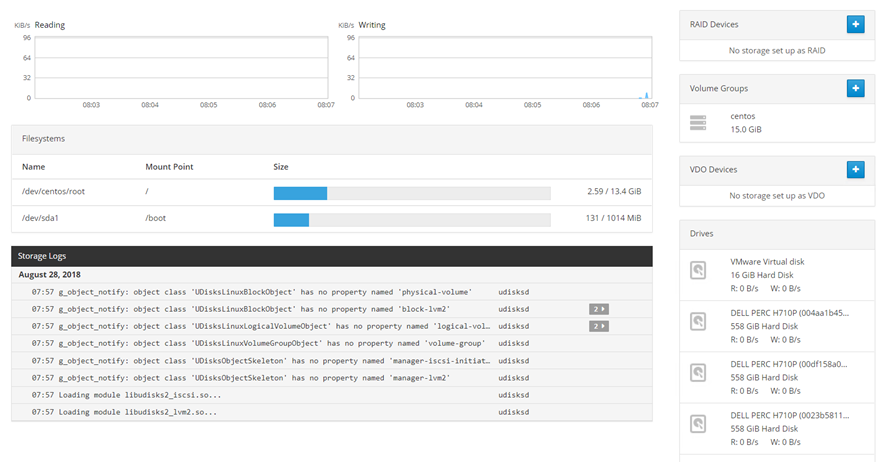
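If your controller is not from LSI, or the vendor string is spelled differently, a case-insensitive filter on a few storage-related keywords may be more convenient. The sketch below is only a suggestion: the keyword list is my own guess at what is useful, and the sample lines are made up for the demo rather than taken from a real host.

```shell
#!/bin/sh
# Filter lspci output for storage controllers, case-insensitively.
# Reads lspci text on stdin, so it also works on saved output.
# ASSUMPTION: the keyword list is illustrative, adjust it to your hardware.
find_storage_controllers() {
    grep -i -E 'lsi|sas|raid|scsi'
}

# On the StarWind VM:
#   lspci | find_storage_controllers

# Self-contained demo with made-up sample lines:
printf '%s\n' \
    '00:0f.0 VGA compatible controller: VMware SVGA II Adapter' \
    '03:00.0 Serial Attached SCSI controller: LSI Logic / Symbios Logic SAS2008 (rev 03)' \
    | find_storage_controllers
```

The demo prints only the second sample line, since the VGA adapter matches none of the keywords.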

Log in to the VM web interface: https://IP-address:9090

Go to the Storage page. The disks connected to the HBA will appear in the Drives section.

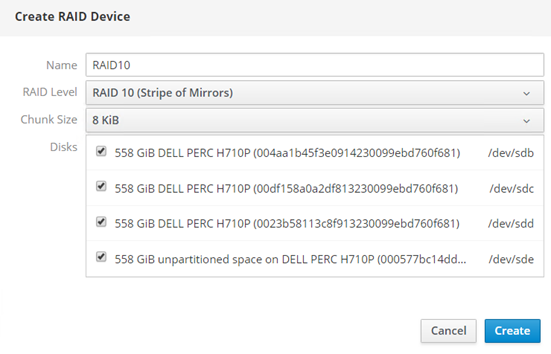

Click “+” in the RAID Devices section. A popup menu will appear. I have HDDs and will configure RAID10 according to our recommendations. The chunk size for an array of 4 disks should be 8 KiB:

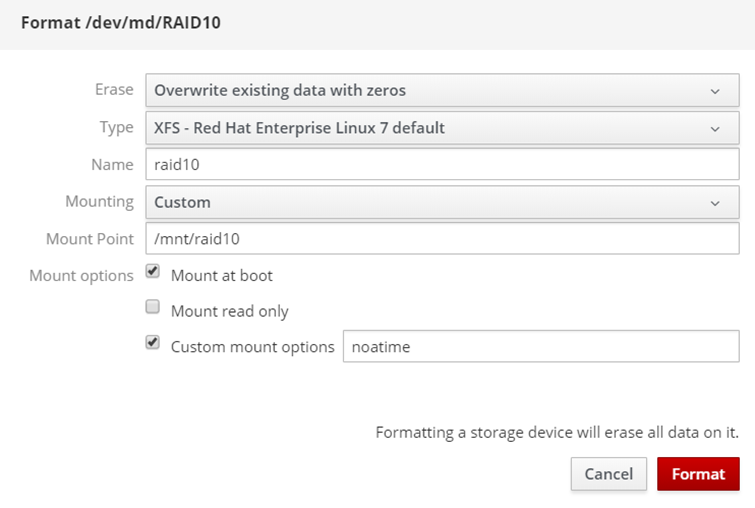

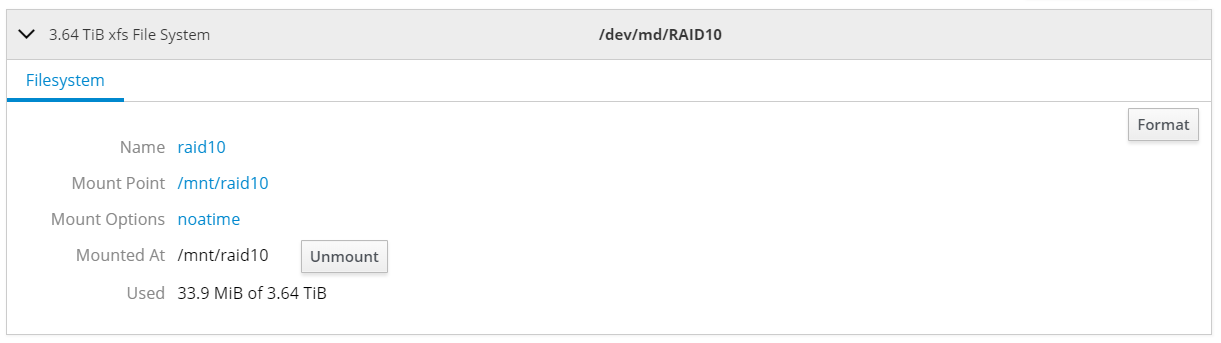

Wait for the RAID synchronization to finish, then create an XFS partition on top of the array.
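The synchronization can also be followed from the SSH session, since the kernel reports md array status in /proc/mdstat. Below is a small sketch that polls that file; the helper names and the 30-second interval are my own choices, and the keyword match is a simple heuristic rather than a full parser.

```shell
#!/bin/sh
# Report whether an md resync/recovery/reshape is still listed.
# Optional argument: path to an mdstat-style file (default /proc/mdstat).
md_sync_in_progress() {
    grep -q -E 'resync|recovery|reshape' "${1:-/proc/mdstat}"
}

# Poll until no sync activity is listed, printing the progress line.
# ASSUMPTION: 30 seconds is an arbitrary polling interval.
wait_for_sync() {
    while md_sync_in_progress "$1"; do
        grep -E 'resync|recovery|reshape' "${1:-/proc/mdstat}"
        sleep 30
    done
    echo "no resync in progress"
}

# Usage on the StarWind VM:
#   wait_for_sync
```

mdadm itself offers `mdadm --wait /dev/md0` (with /dev/md0 standing in for your array name), which blocks until resync, recovery, or reshape activity on that array has finished.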

Hint. Add the noatime mount option in the Custom mount options section. XFS will automatically choose the values of the sunit and swidth mount options.
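For reference, the values XFS is expected to pick can be worked out by hand. As mount options, sunit and swidth are expressed in 512-byte units: sunit corresponds to the chunk size and swidth to the chunk size multiplied by the number of data-bearing disks. The sketch below assumes a RAID10 array of 4 disks with two copies of each block, which leaves 2 data-bearing disks; the function name is hypothetical.

```shell
#!/bin/sh
# Compute XFS sunit/swidth mount-option values (in 512-byte units).
# ASSUMPTION: RAID10 with two copies, so data disks = total disks / 2.
xfs_stripe_units() {
    chunk_kib="$1"     # md chunk size in KiB
    data_disks="$2"    # number of data-bearing disks
    sunit=$(( chunk_kib * 1024 / 512 ))
    swidth=$(( sunit * data_disks ))
    echo "sunit=$sunit swidth=$swidth"
}

# 8 KiB chunk, 4-disk RAID10 (2 data-bearing disks):
xfs_stripe_units 8 2    # prints: sunit=16 swidth=32
```

After the filesystem is mounted, running `xfs_info` against the mount point shows the geometry that was actually chosen, so the two can be compared.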

After the successful format, the device should be mounted.

Now the RAID array is ready to store StarWind images and, of course, client VMs.

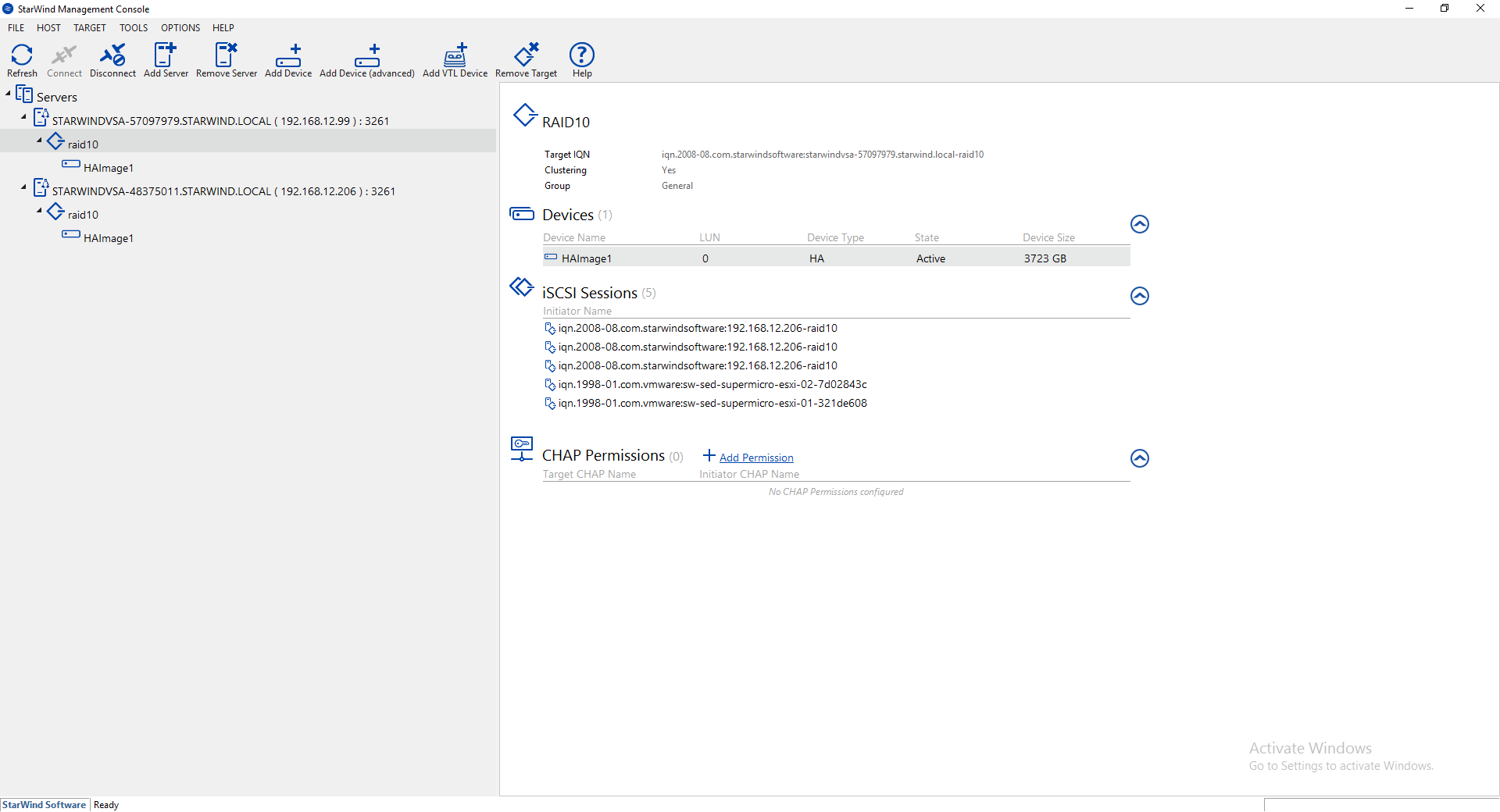

Finally, the StarWind HA device can be created. This part is covered in the following guide, starting from step 17:

https://www.starwindsoftware.com/help/ConfiguringSynchronousReplication.html

As a result:

A detailed configuration guide for a 2-node cluster with VMware vSphere 6.5 can be found here:

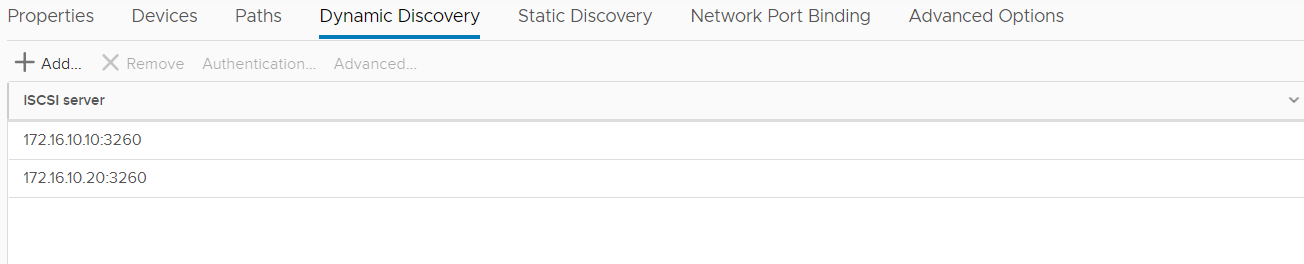

Connect the device through the VMware iSCSI Software Adapter on both hosts. Add the iSCSI IP addresses of the StarWind VMs to the Dynamic Discovery tab.

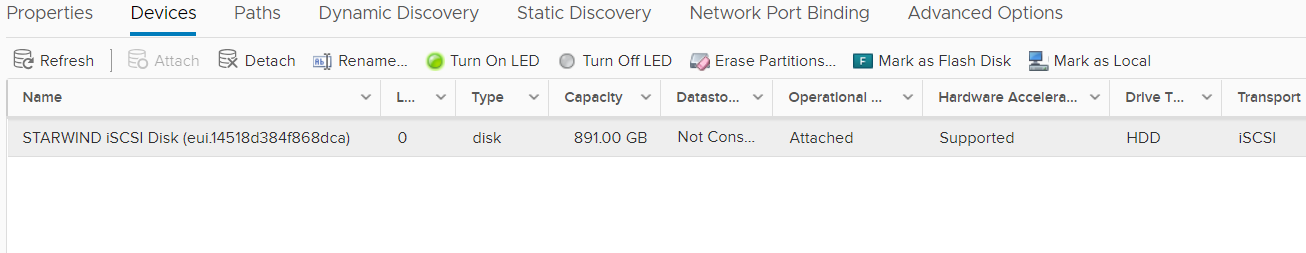

Rescan storage, and the StarWind device will appear.
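The same discovery and rescan steps can be done from the ESXi shell with esxcli. This is a hedged sketch rather than part of the StarWind guide: the adapter name vmhba64 and the two IP addresses are placeholders (check the real adapter name with `esxcli iscsi adapter list`), 3260 is the standard iSCSI port, and the commands are only printed unless DRY_RUN=0 is set.

```shell
#!/bin/sh
# Add dynamic discovery targets and rescan storage on an ESXi host.
# ASSUMPTIONS: vmhba64 and the 172.16.10.x addresses are placeholders.
# By default the commands are only printed, not executed.
DRY_RUN="${DRY_RUN:-1}"
ADAPTER="${ADAPTER:-vmhba64}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Register one StarWind VM iSCSI address for dynamic discovery.
add_discovery_target() {
    run esxcli iscsi adapter discovery sendtarget add \
        --adapter="$ADAPTER" --address="$1:3260"
}

# Rescan all storage adapters so new devices show up.
rescan_storage() {
    run esxcli storage core adapter rescan --all
}

add_discovery_target 172.16.10.1
add_discovery_target 172.16.10.2
rescan_storage
```

Run it on each host with that host's adapter name, for example `ADAPTER=vmhba65 DRY_RUN=0 sh script.sh`, where the script file name is up to you.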

Hint. Do not forget to configure the automated script to avoid manual storage rescan.

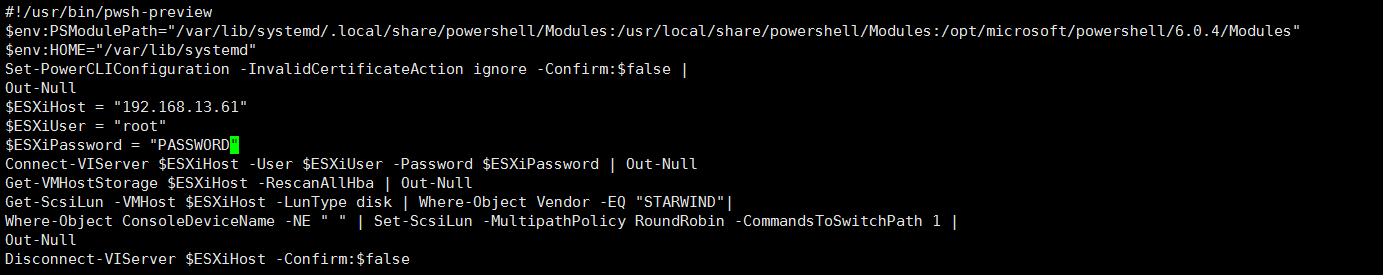

Log in to the StarWind VM via SSH and edit the /opt/StarWind/StarWindVSA/drive_c/StarWind/hba_rescan.ps1 file, adding the credentials of the ESXi host where the VM is running.

Create a datastore and make your VMs HA 😊