StarWind Virtual SAN: Configuration Guide for VMware vSphere [ESXi], VSAN Deployed as a Controller VM using PowerShell CLI

- July 28, 2024


Annotation

Relevant Products

This guide applies to StarWind Virtual SAN and StarWind Virtual SAN Free (Version V8 (Build 15260, OVF Version 20230901) and earlier).

For newer versions of StarWind Virtual SAN (Version V8 (Build 15260, CVM Version 20231016) and later), please refer to this configuration guide: StarWind Virtual SAN: Configuration Guide for VMware vSphere [ESXi], VSAN Deployed as a Controller Virtual Machine (CVM) using PowerShell CLI

Purpose

This guide describes the process for the deployment of StarWind Virtual SAN on VMware vSphere, with VSAN set up as a Controller Virtual Machine (CVM). It offers key insights and steps to ensure a seamless deployment.

Audience

This guide is intended for IT specialists, system administrators, and VMware professionals who need to set up StarWind Virtual SAN on VMware vSphere.

Expected Result

Upon completion of this guide, users will get a fully configured VMware vSphere environment with StarWind Virtual SAN as a Controller Virtual Machine (CVM).

StarWind Virtual SAN for vSphere VM requirements

Prior to installing StarWind Virtual SAN Virtual Machines, please make sure that the system meets the requirements, which are available via the following link: https://www.starwindsoftware.com/system-requirements

Storage provisioning guidelines: https://knowledgebase.starwindsoftware.com/guidance/how-to-provision-physical-storage-to-starwind-virtual-san-controller-virtual-machine/

Recommended RAID settings for HDD and SSD disks:

https://knowledgebase.starwindsoftware.com/guidance/recommended-raid-settings-for-hdd-and-ssd-disks/

Please read the StarWind Virtual SAN Best Practices document for additional information: https://www.starwindsoftware.com/resource-library/starwind-virtual-san-best-practices

Pre-Configuring the Servers

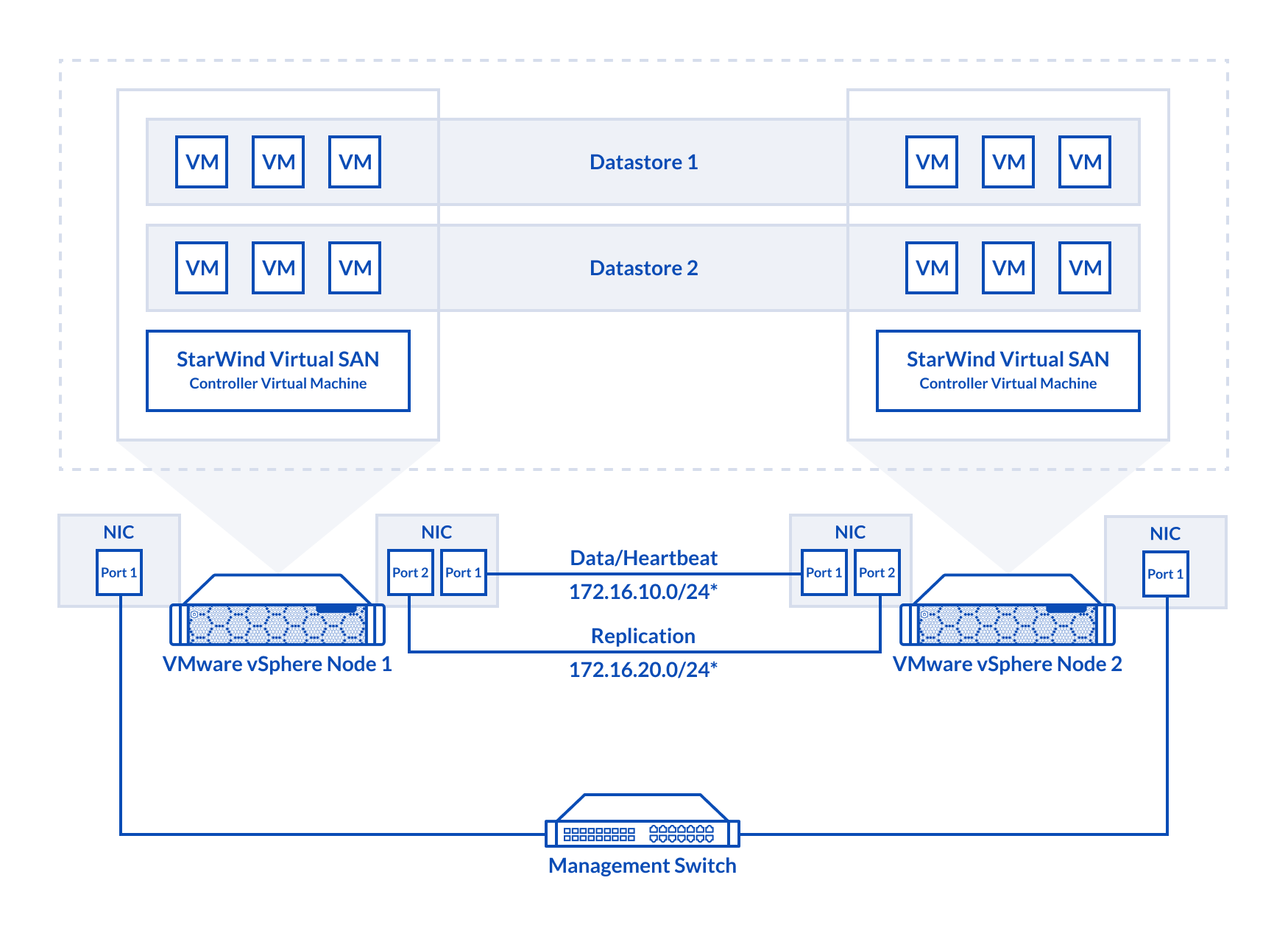

The diagram below illustrates the network and storage configuration of the solution:

1. ESXi hypervisor should be installed on each host.

2. StarWind Virtual SAN for vSphere VM should be deployed on each ESXi host from an OVF template, downloaded from this page: https://www.starwindsoftware.com/release-notes-build

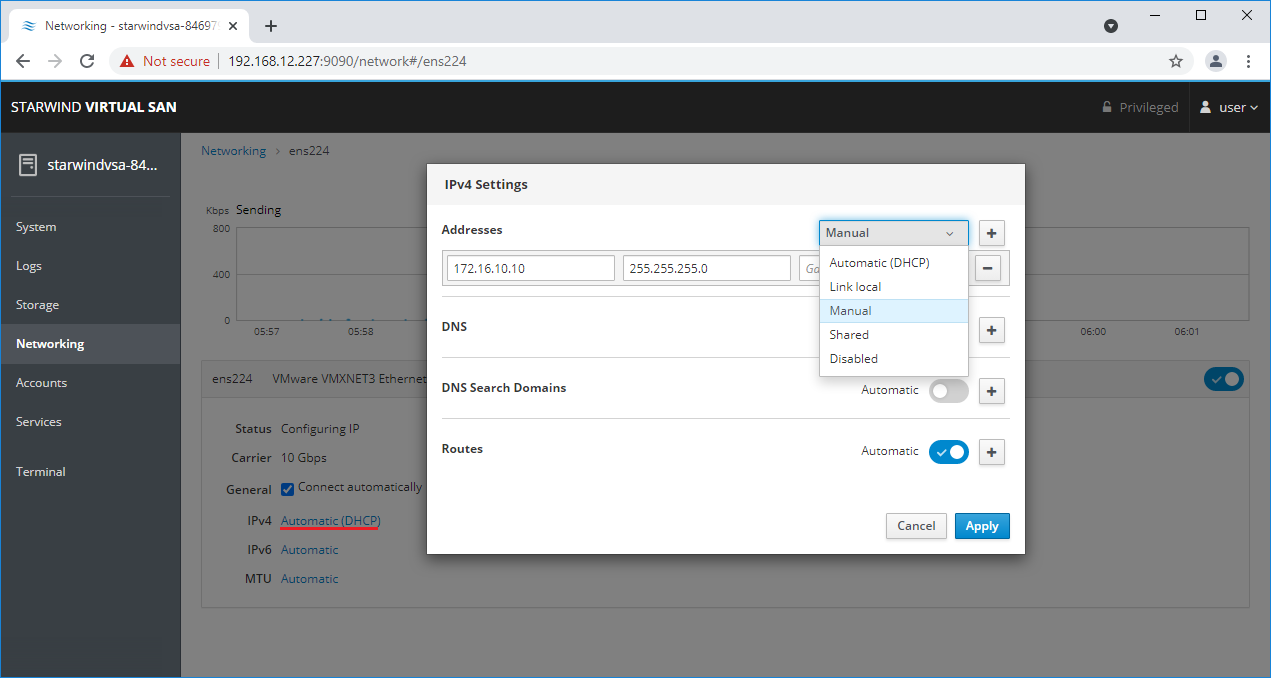

3. The network interfaces on each node for Synchronization and iSCSI/StarWind heartbeat interfaces should be in different subnets and connected directly according to the network diagram above. Here, the 172.16.10.x subnet is used for the iSCSI/StarWind heartbeat traffic, while the 172.16.20.x subnet is used for the Synchronization traffic.

NOTE: Do not use iSCSI/Heartbeat and Synchronization channels over the same physical link. Synchronization and iSCSI/Heartbeat links can be connected either via redundant switches or directly between the nodes.

vCenter Server can be deployed separately on another host or as VCSA on StarWind VSAN highly-available storage, created in this guide.
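As a quick sanity check of the addressing plan, the following shell sketch compares two IPv4 addresses and reports whether they fall into different subnets. It assumes /24 masks, which the 172.16.10.x and 172.16.20.x notation suggests but the guide does not state explicitly. The helper names net24 and different_subnets are illustrative and not part of any StarWind tooling.

```shell
#!/bin/sh
# Print the /24 network prefix (first three octets) of an IPv4 address.
net24() {
    printf '%s\n' "${1%.*}"
}

# Succeed only when both addresses belong to different /24 networks.
different_subnets() {
    [ "$(net24 "$1")" != "$(net24 "$2")" ]
}

# Example addresses taken from the subnets used in this guide.
if different_subnets 172.16.10.1 172.16.20.1; then
    echo "OK: iSCSI/Heartbeat and Synchronization use separate subnets"
else
    echo "WARNING: both interfaces share one /24 subnet"
fi
```

With other prefix lengths the comparison would need a proper netmask calculation.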

Preparing Environment for StarWind VSAN Deployment

Configuring Networks

Configure network interfaces on each node to make sure that Synchronization and iSCSI/StarWind heartbeat interfaces are in different subnets and connected physically according to the network diagram above. All actions below should be applied to each ESXi server.

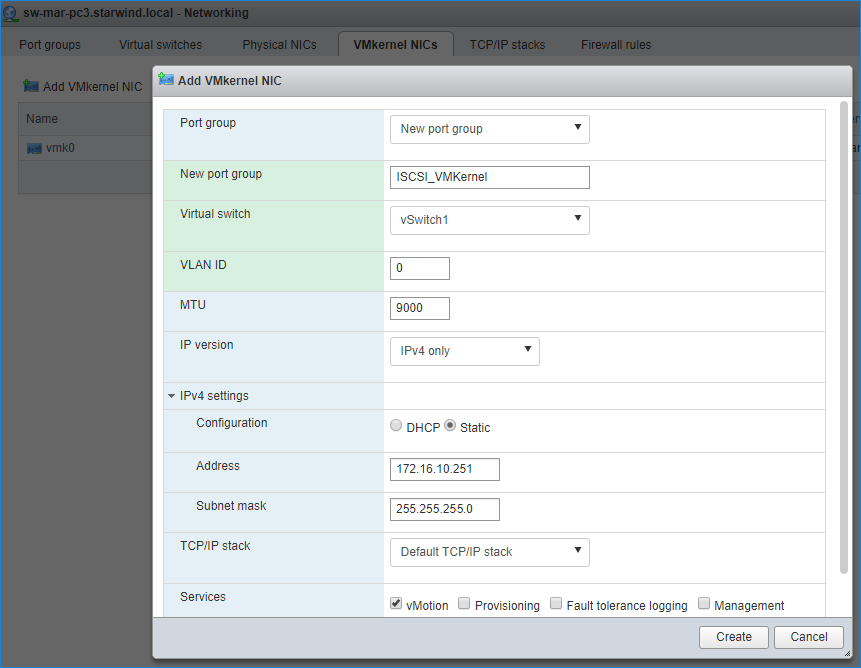

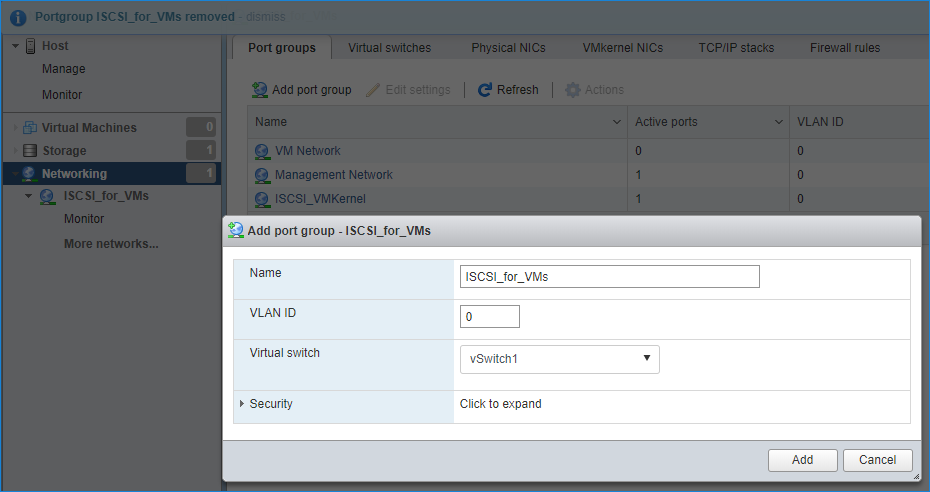

NOTE: A Virtual Machine Port Group should be created for both the iSCSI/StarWind Heartbeat and the Synchronization vSwitches. A VMKernel port should be created only for iSCSI traffic. Static IP addresses should be assigned to VMKernel ports.

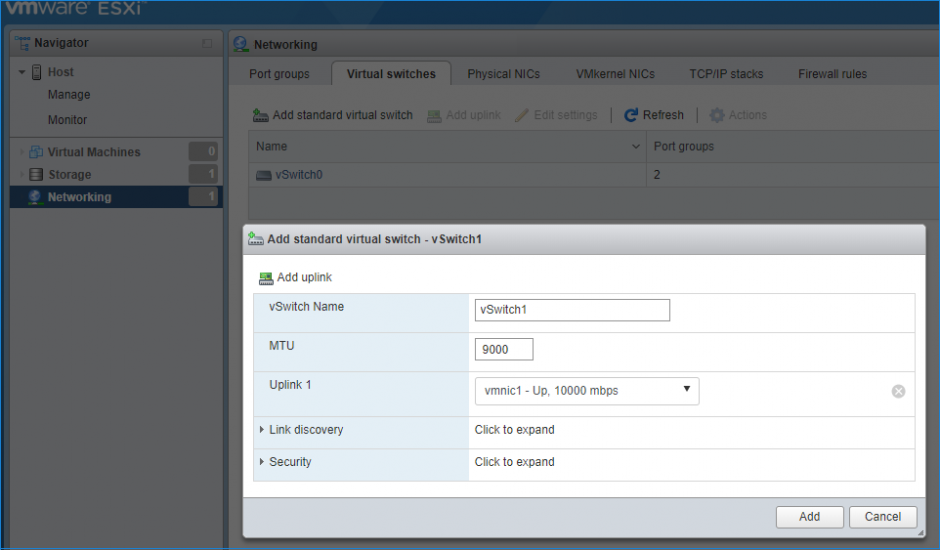

NOTE: It is recommended to set MTU to 9000 on vSwitches and VMKernel ports for iSCSI and Synchronization traffic. Additionally, vMotion can be enabled on VMKernel ports.

1. Using the VMware ESXi web console, create two standard vSwitches: one for the iSCSI/StarWind Heartbeat channel (vSwitch1) and the other one for the Synchronization channel (vSwitch2).

2. Create a VMKernel port for the iSCSI/StarWind Heartbeat channel.

3. Add a Virtual Machine Port Group on the vSwitch for iSCSI traffic (vSwitch1) and on the vSwitch for Synchronization traffic (vSwitch2).

4. Repeat steps 1-3 for any other links intended for Synchronization and iSCSI/Heartbeat traffic on ESXi hosts.
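The same network layout can in principle be scripted from the ESXi shell. The sketch below only prints the esxcli commands so that they can be reviewed before anything is run. The uplink names (vmnic1, vmnic2), the port group names, the vmk1 interface, and the 172.16.10.10 and 172.16.10.2 addresses are assumptions for illustration and must be replaced with the values of the actual host. The last helper prints a vmkping test with the don't-fragment flag; its payload is the MTU minus 28 bytes of IP and ICMP headers.

```shell
#!/bin/sh
# Dry run: print (do not execute) esxcli commands for review.

# Standard vSwitch with one uplink, MTU 9000 and a VM port group.
# Arguments: vSwitch name, uplink NIC, VM port group name.
vswitch_cmds() {
    printf 'esxcli network vswitch standard add --vswitch-name=%s\n' "$1"
    printf 'esxcli network vswitch standard uplink add --uplink-name=%s --vswitch-name=%s\n' "$2" "$1"
    printf 'esxcli network vswitch standard set --vswitch-name=%s --mtu=9000\n' "$1"
    printf 'esxcli network vswitch standard portgroup add --portgroup-name=%s --vswitch-name=%s\n' "$3" "$1"
}

# VMkernel port with a static IPv4 address and MTU 9000.
# Arguments: vSwitch name, vmk port group name, vmk name, IPv4 address, netmask.
vmk_cmds() {
    printf 'esxcli network vswitch standard portgroup add --portgroup-name=%s --vswitch-name=%s\n' "$2" "$1"
    printf 'esxcli network ip interface add --interface-name=%s --portgroup-name=%s\n' "$3" "$2"
    printf 'esxcli network ip interface set --interface-name=%s --mtu=9000\n' "$3"
    printf 'esxcli network ip interface ipv4 set --interface-name=%s --ipv4=%s --netmask=%s --type=static\n' "$3" "$4" "$5"
}

# Jumbo-frame test command: ICMP payload = MTU - 28 header bytes.
# Arguments: vmk name, MTU, partner IPv4 address.
jumbo_test_cmd() {
    printf 'vmkping -I %s -d -s %s %s\n' "$1" "$(( $2 - 28 ))" "$3"
}

# Hypothetical names and addresses; adjust before use.
vswitch_cmds vSwitch1 vmnic1 iSCSI-VM
vmk_cmds vSwitch1 iSCSI-vmk vmk1 172.16.10.10 255.255.255.0
vswitch_cmds vSwitch2 vmnic2 Sync-VM
jumbo_test_cmd vmk1 9000 172.16.10.2
```

Printing instead of executing keeps the sketch safe to run anywhere; once reviewed, the output can be pasted into an ESXi SSH session.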

Deploying StarWind Virtual SAN for vSphere

1. Download the zip archive that contains StarWind Virtual SAN for vSphere: https://www.starwindsoftware.com/starwind-virtual-san#download

2. Extract virtual machine files from the downloaded archive.

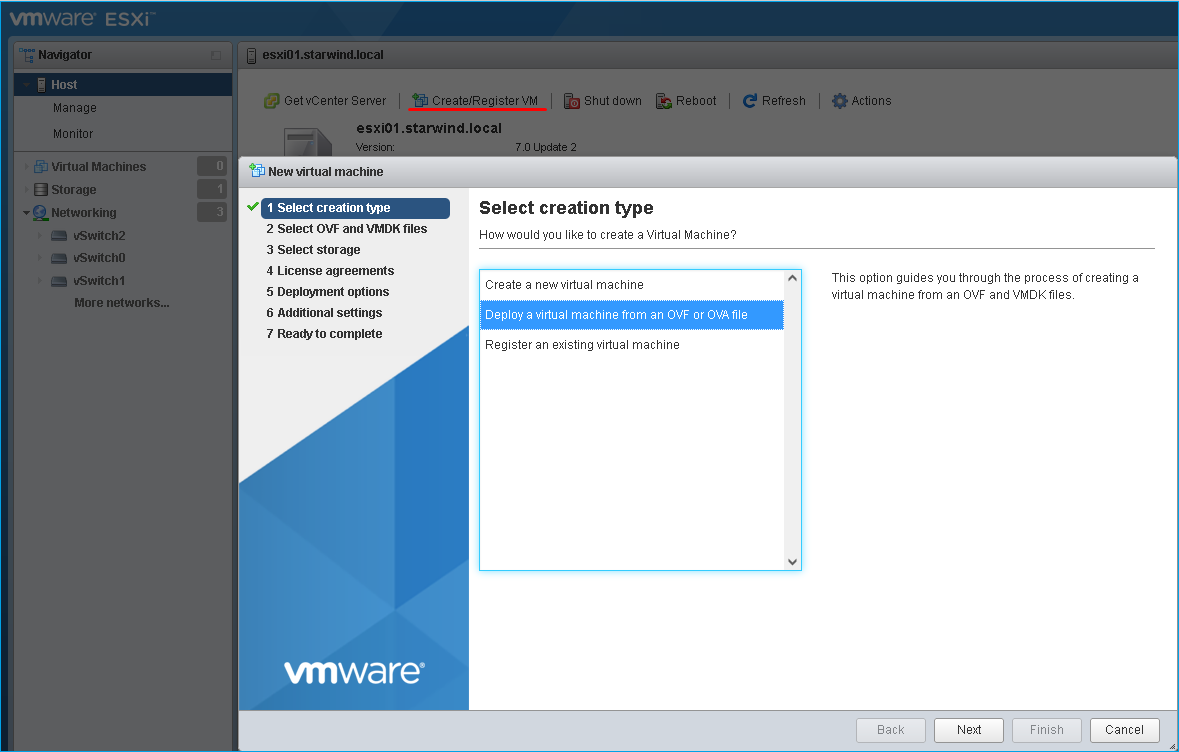

3. Deploy a virtual machine on each ESXi host using the “Create/Register VM” button. Select “Deploy a virtual machine from an OVF or OVA file” in the Select creation type section and press Next.

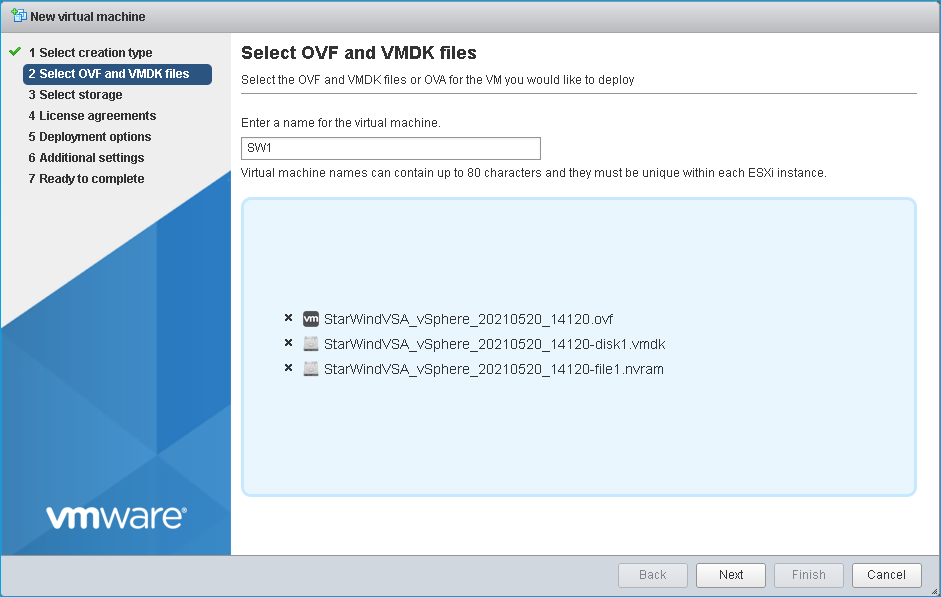

4. Specify the name for the virtual machine with StarWind VSAN, drag and drop the extracted files to the wizard, and press Next.

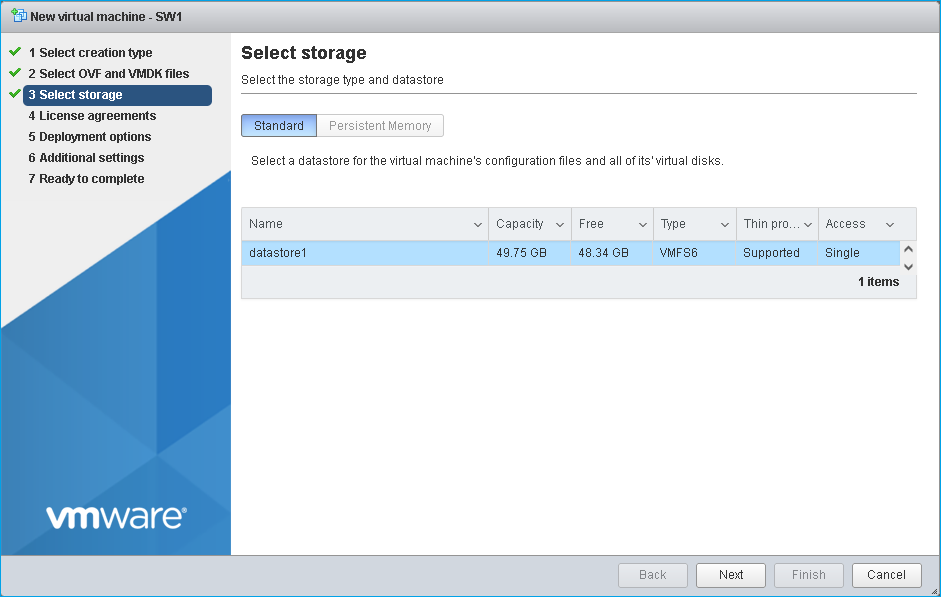

5. Specify the location for the StarWind Virtual SAN VM and press Next.

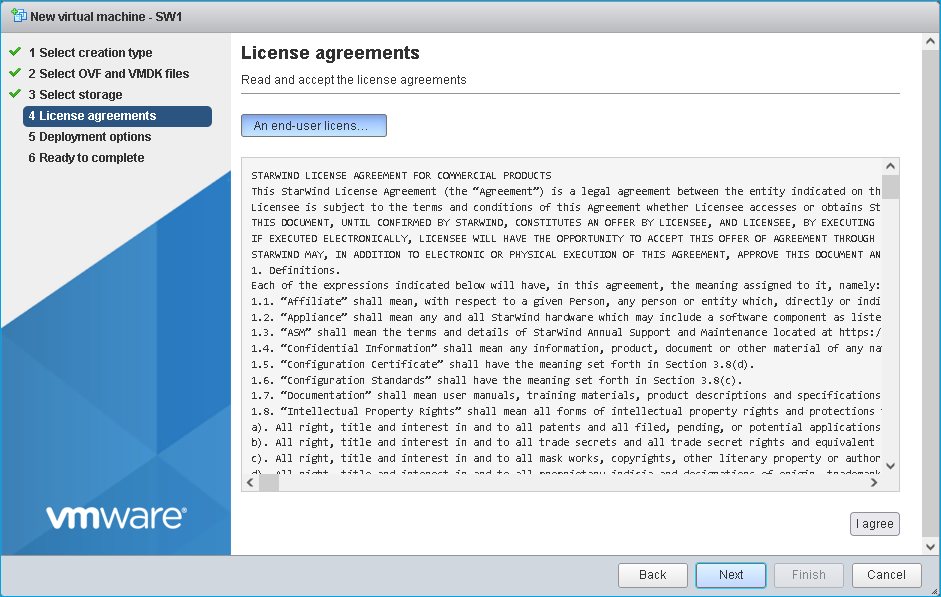

6. Read and accept the license agreements by pressing the “I agree” button. Press Next to continue.

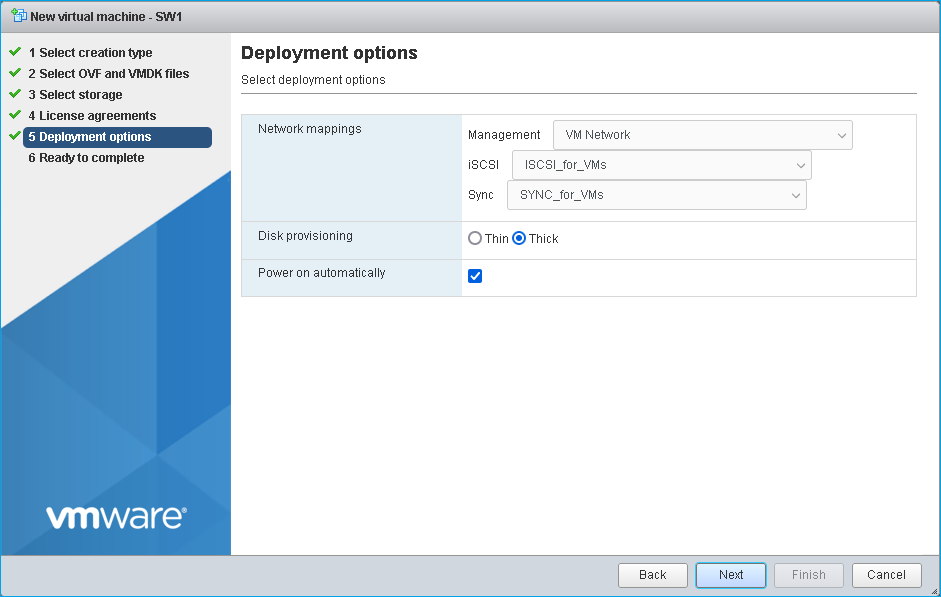

7. Choose the proper networks for the VM by their purpose and other options.

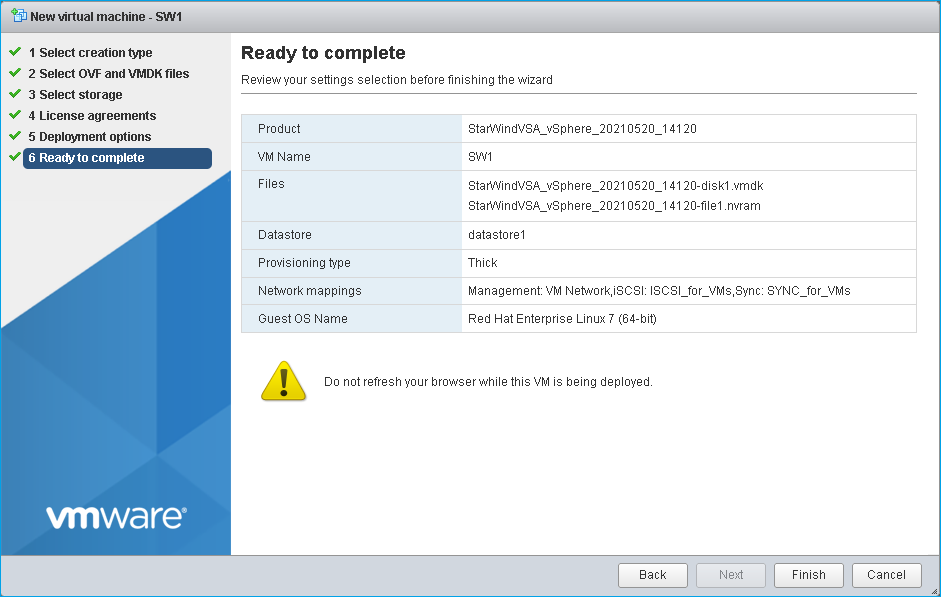

8. Review the settings and click Finish to start the deployment process.

9. Repeat all the steps from this section on the other ESXi hosts.

NOTE: In some cases, it’s recommended to reserve memory for StarWind VSAN VM.

NOTE: When using StarWind with the synchronous replication feature inside of a Virtual Machine, it is recommended not to make backups and/or snapshots of the Virtual Machine with the StarWind VSAN service installed, as this could pause the StarWind Virtual Machine. Pausing the Virtual Machine while the StarWind VSAN service is under load may lead to split-brain issues in synchronous replication devices, and thus to data corruption.

Configuring StarWind VMs Startup/Shutdown

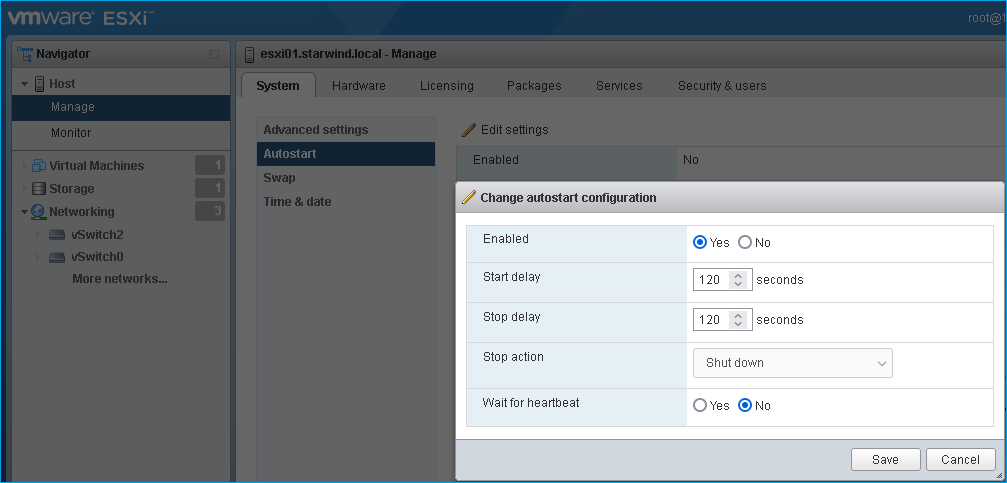

1. Set up the VM startup policy on both ESXi hosts from the Manage -> System tab in the ESXi web console. In the window that appears, check Yes to enable the option and choose Shut down as the stop action. Click Save to proceed.

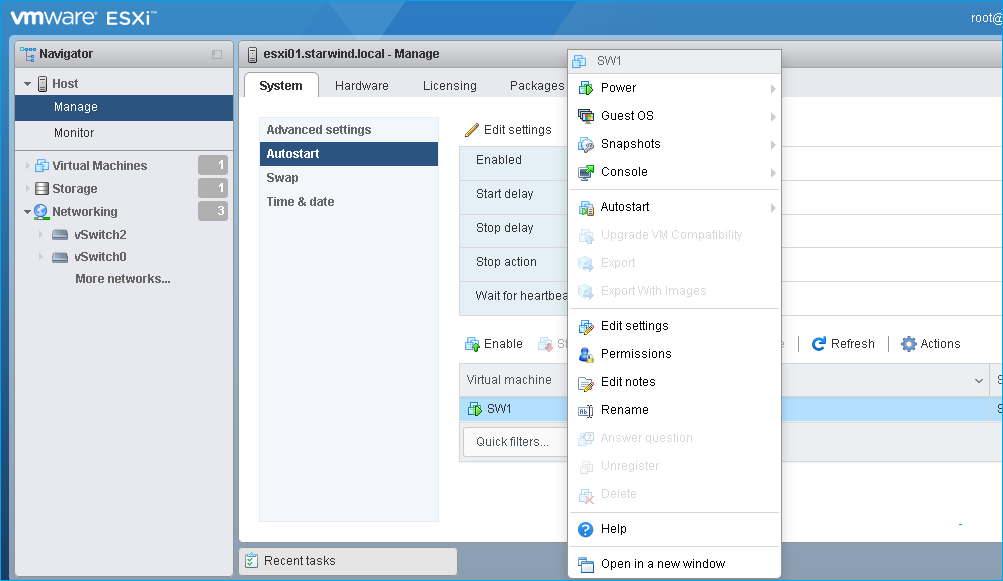

2. To configure a VM autostart, right-click on the VM, navigate to Autostart and click Enable.

3. Complete the actions above for the StarWind VM on each ESXi host.

4. Start the virtual machines on all ESXi hosts.

Configuring StarWind Virtual SAN VM settings

By default, the StarWind Virtual SAN virtual machine receives an IP address automatically via DHCP. It is recommended to create a DHCP reservation and set a static IP address for this VM. In order to access StarWind Virtual SAN VM from the local network, the virtual machine must have access to the network. In case there is no DHCP server, the connection to the VM can be established using the VMware console and static IP address can be configured manually.

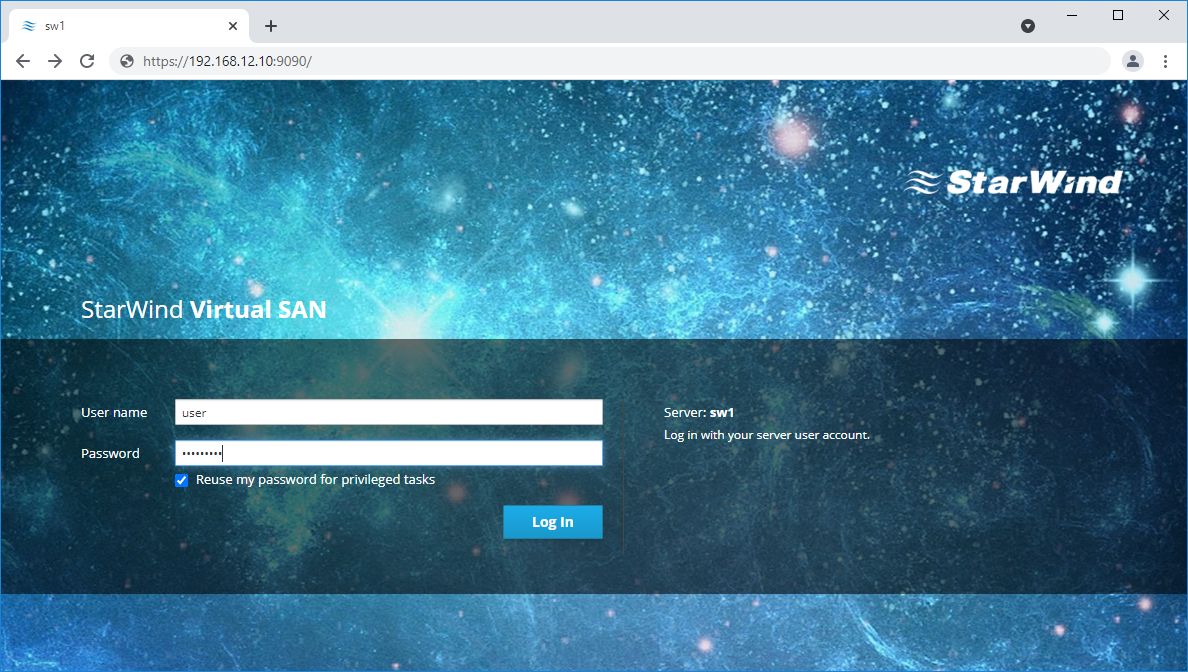

1. Open a web browser and enter the IP address of the VM (received via DHCP or assigned manually), then log in to StarWind Virtual SAN for vSphere using the following default credentials:

Username: user

Password: rds123RDS

NOTE: Make sure to tick the Reuse my password for privileged tasks check box.

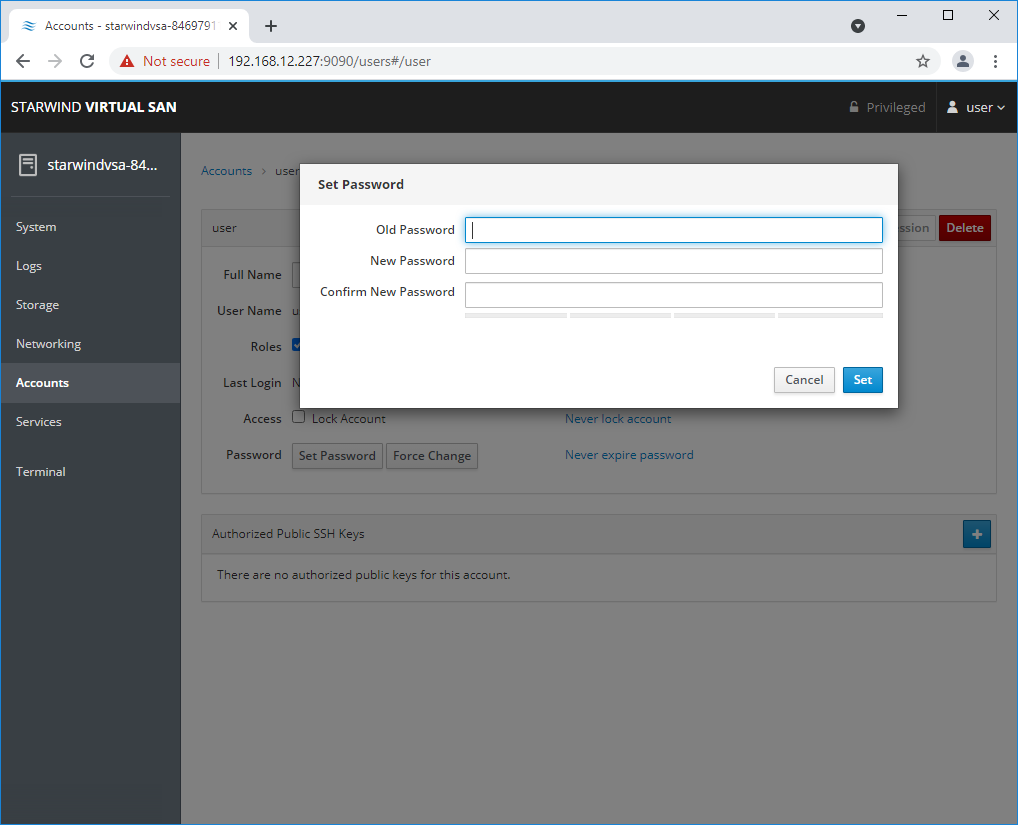

2. After the successful login, on the left sidebar, click Accounts.

3. Select a user and click Set Password.

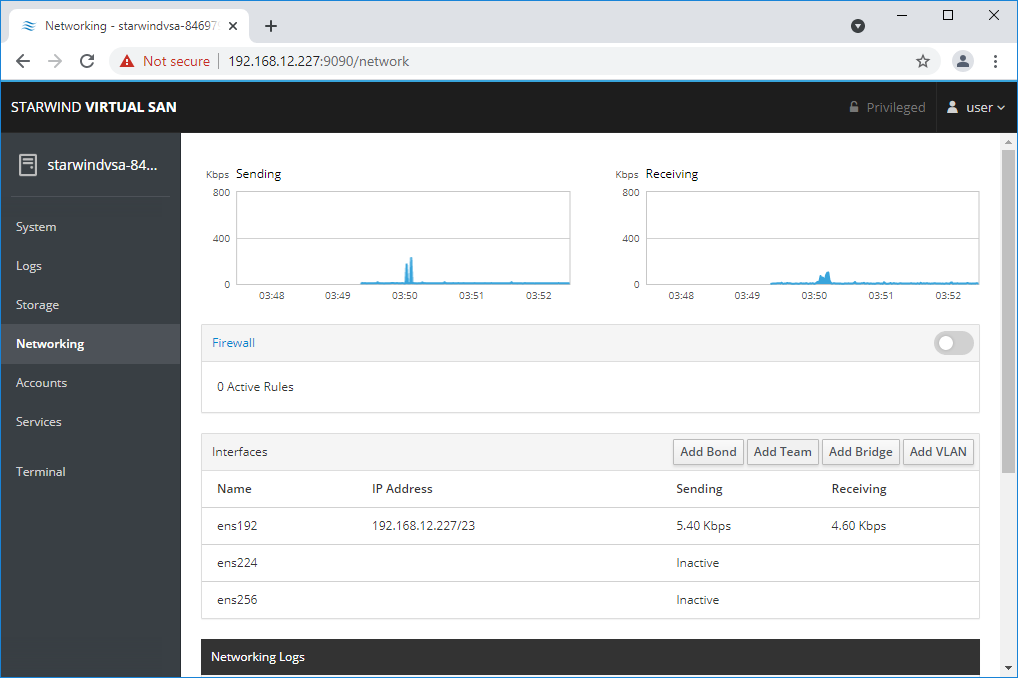

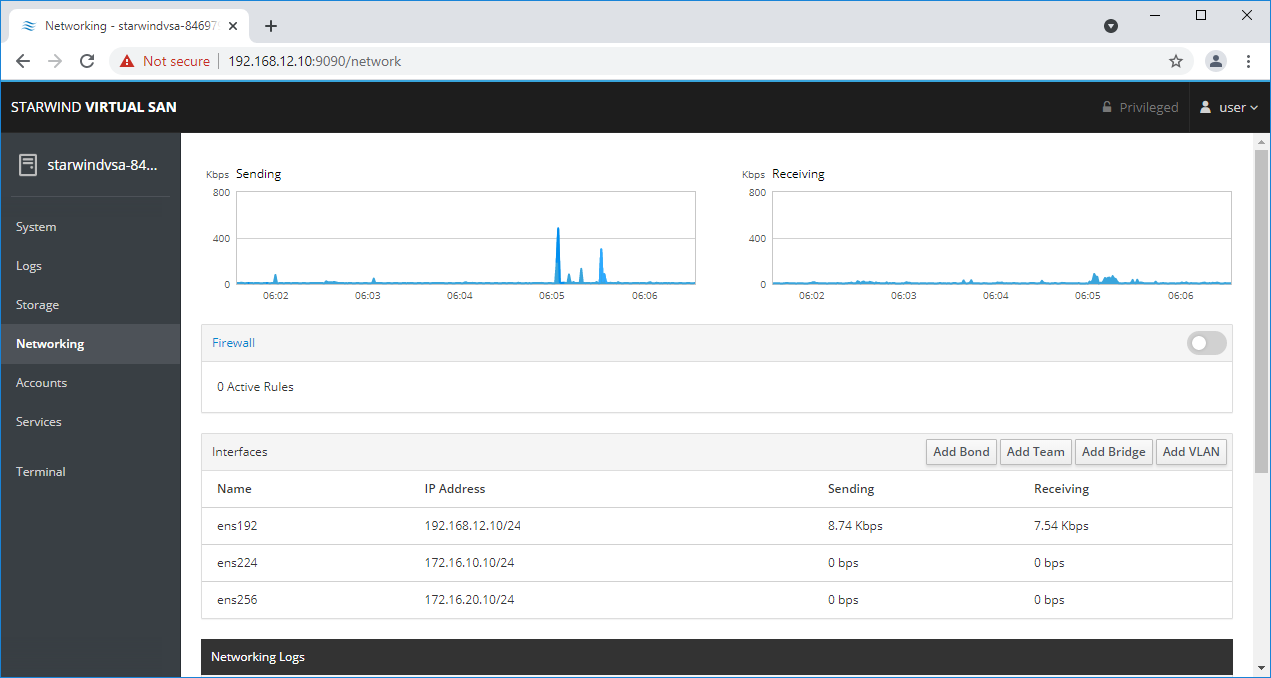

4. On the left sidebar, click Networking.

Here, the Management IP address of the StarWind Virtual SAN Virtual Machine, as well as IP addresses for iSCSI and Synchronization networks can be configured.

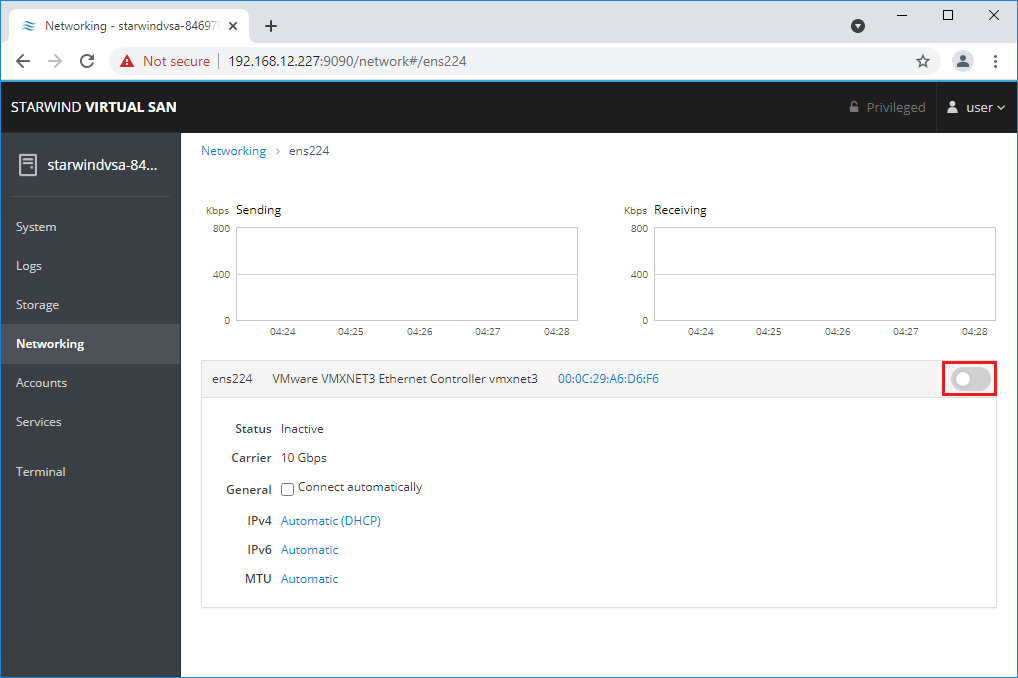

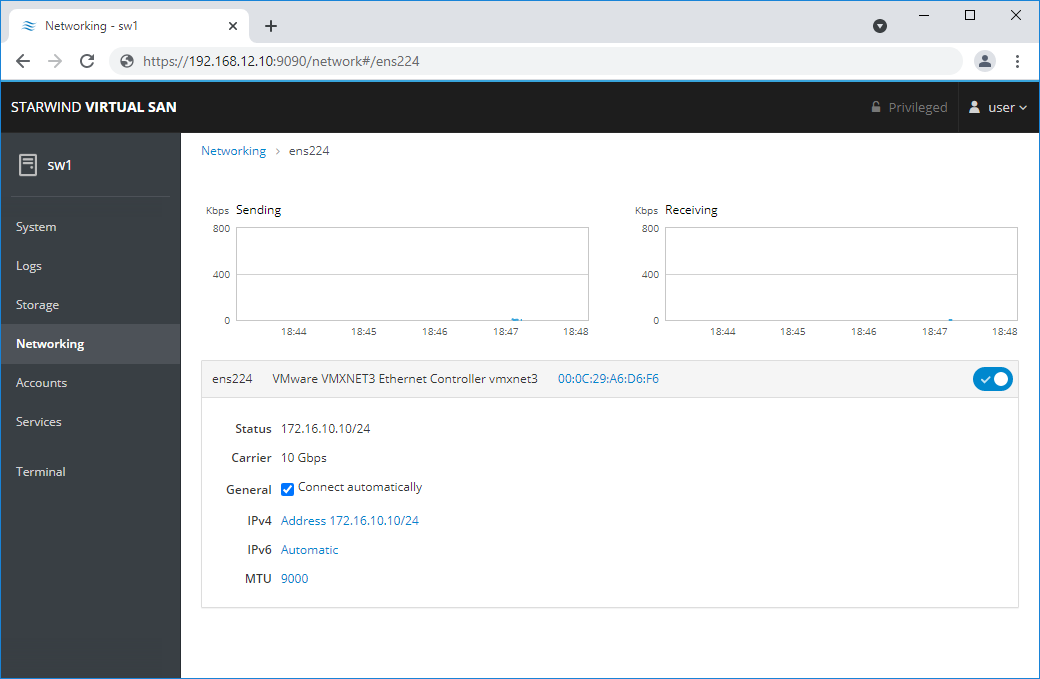

In case the Network interface is inactive, click on the interface, turn it on, and set it to “Connect automatically”.

5. Click on Automatic (DHCP) to set the IP address (DNS and gateway – for Management).

The result should look like the picture below:

NOTE: It is recommended to set MTU to 9000 on interfaces, dedicated for iSCSI and Synchronization traffic. Change Automatic to 9000, if required.

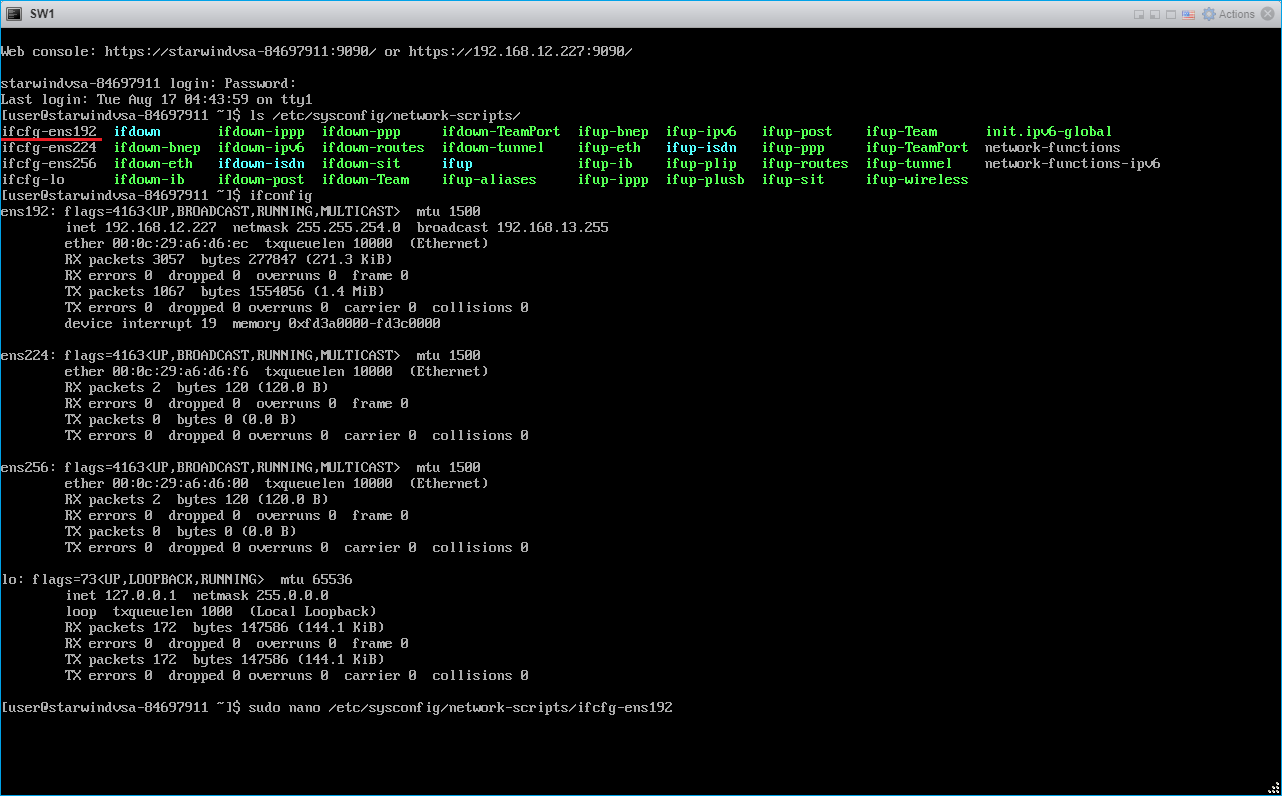

6. Alternatively, log in to the VM via the VMware console and assign a static IP address by editing the configuration file of the interface located by the following path: /etc/sysconfig/network-scripts

7. Open the file, corresponding to the Management interface using text editor, for example:

sudo nano /etc/sysconfig/network-scripts/ifcfg-ens192

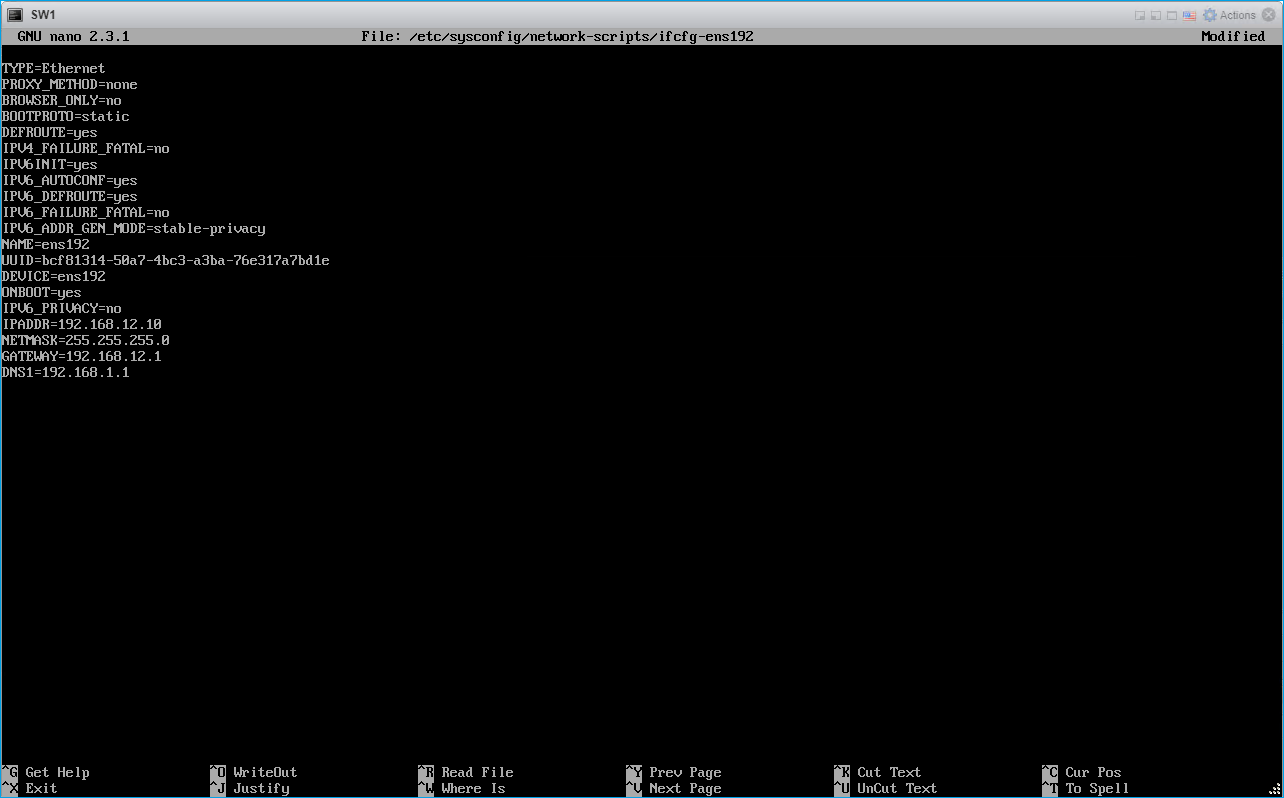

8. Edit the file:

Change the line BOOTPROTO=dhcp to: BOOTPROTO=static

Add the IP settings needed to the file:

IPADDR=192.168.12.10

NETMASK=255.255.255.0

GATEWAY=192.168.12.1

DNS1=192.168.1.1

By default, the Management link should have an ens192 interface name. The configuration file should look as follows

9. Restart the interface using the following commands: sudo ifdown ens192 and sudo ifup ens192, or restart the VM.

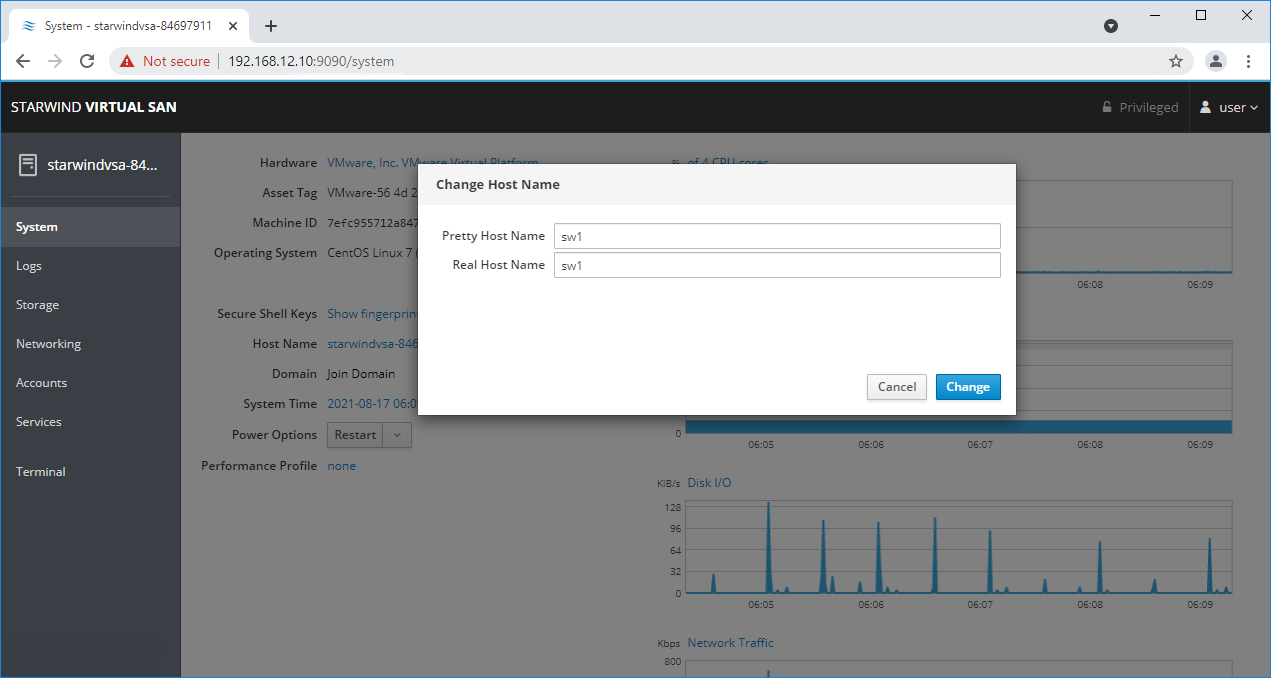

10. Change the Host Name from the System tab by clicking on it

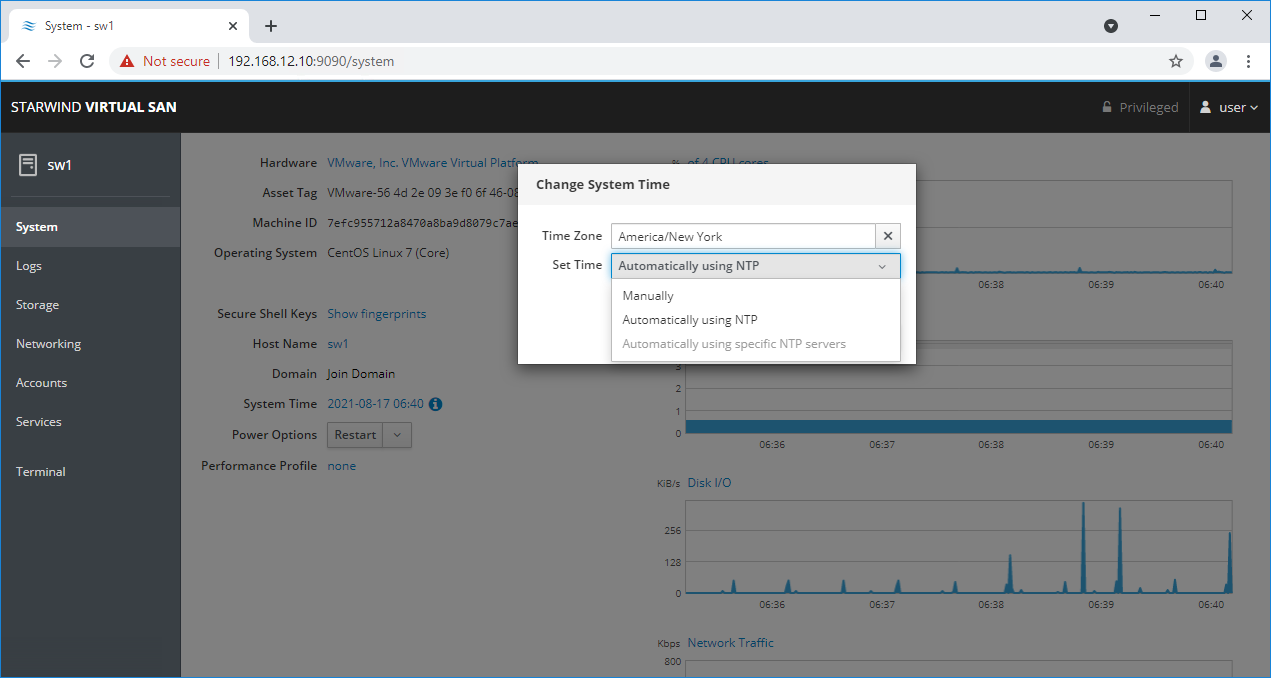

11. Change System time and NTP settings if required

12. Repeat the steps above on each StarWind VSAN VM.
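When several VMs need static addresses, it can help to generate the lines from step 8 instead of typing them. The sketch below prints only the settings named in this guide (BOOTPROTO, IPADDR, NETMASK, GATEWAY, DNS1); the function name static_ifcfg_lines is hypothetical, and the output is meant to be merged into the existing ifcfg file by hand rather than to replace it, since that file may contain other keys this guide does not cover.

```shell
#!/bin/sh
# Print the static-IP settings from step 8 for an ifcfg file.
# Arguments: IPv4 address, netmask, gateway, DNS server.
static_ifcfg_lines() {
    printf 'BOOTPROTO=static\n'
    printf 'IPADDR=%s\nNETMASK=%s\nGATEWAY=%s\nDNS1=%s\n' "$1" "$2" "$3" "$4"
}

# Example values from this guide.
static_ifcfg_lines 192.168.12.10 255.255.255.0 192.168.12.1 192.168.1.1
```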

Configuring StarWind Management Console

1. Install StarWind Management Console on a workstation running Windows (Windows 7 or higher, Windows Server 2008 R2 or higher) using the installer available here.

NOTE: StarWind Management Console and PowerShell Management Library components are required.

2. Select the appropriate option to apply the StarWind License key.

Once the appropriate license key has been received, it should be applied to StarWind Virtual SAN service via Management Console or PowerShell.

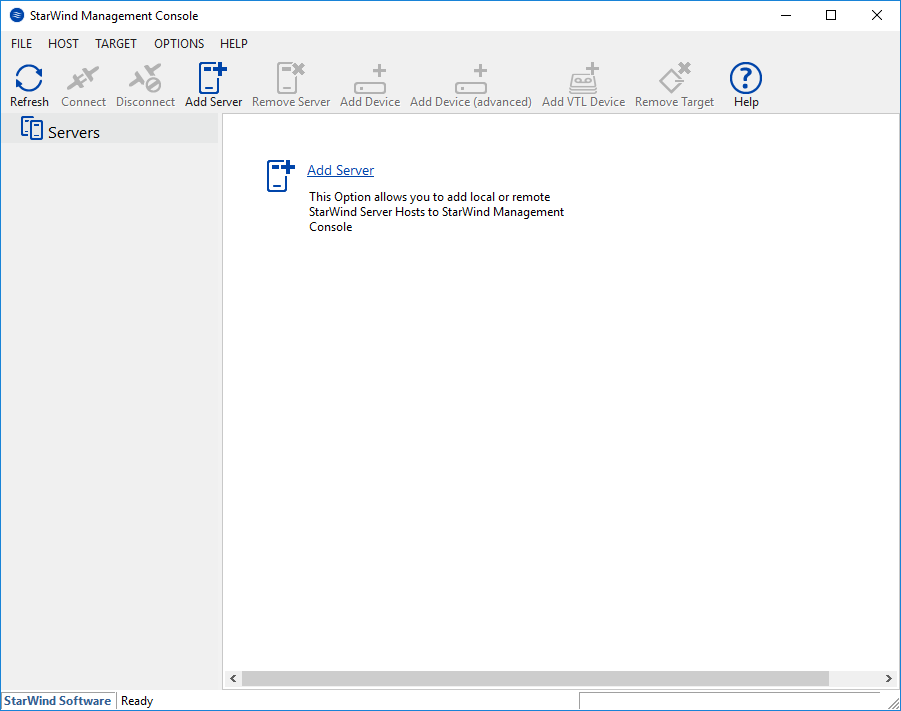

3. Open StarWind Management Console and click Add Server.

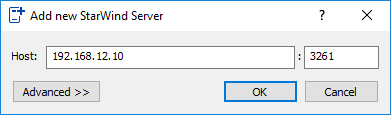

4. Type the IP address of the StarWind Virtual SAN in the pop-up window and click OK.

5. Select the server and click Connect.

6. Click Apply Key… on the pop-up window.

7. Select Load license from file and click the Load button.

8. Select the appropriate license key.

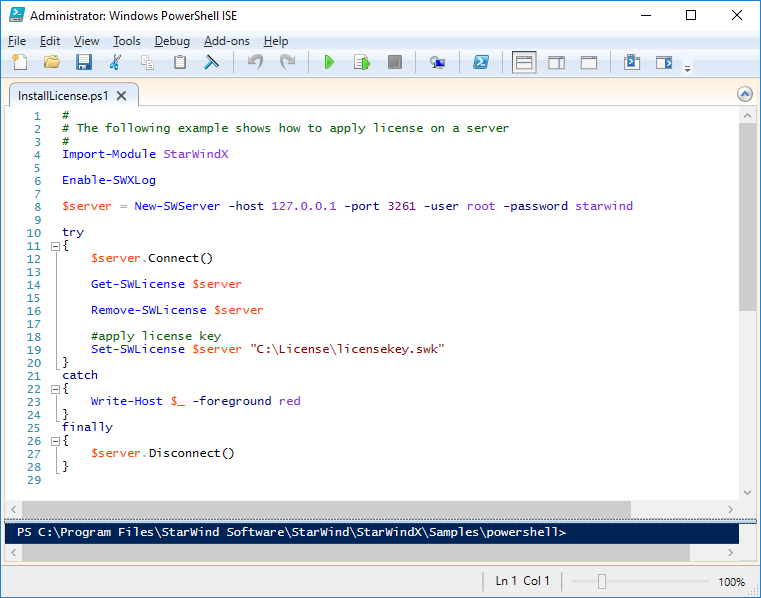

As an alternative, PowerShell can be used. Open StarWind InstallLicense.ps1 script with PowerShell ISE as administrator. It can be found here:

C:\Program Files\StarWind Software\StarWind\StarWindX\Samples\powershell\InstallLicense.ps1

Type the IP address of the StarWind Virtual SAN VM and the credentials of the StarWind Virtual SAN service (default login: root, password: starwind).

Add the path to the license key.

9. After the license key is applied, StarWind devices can be created.

NOTE: In order to manage StarWind Virtual SAN service (e.g. create ImageFile devices, VTL devices, etc.), StarWind Management Console can be used.

Configuring Storage

StarWind Virtual SAN for vSphere can work on top of Hardware RAID or Linux Software RAID (MDADM) inside of the Virtual Machine.

Please select the required option:

Configuring StarWind Storage on Top of Hardware RAID

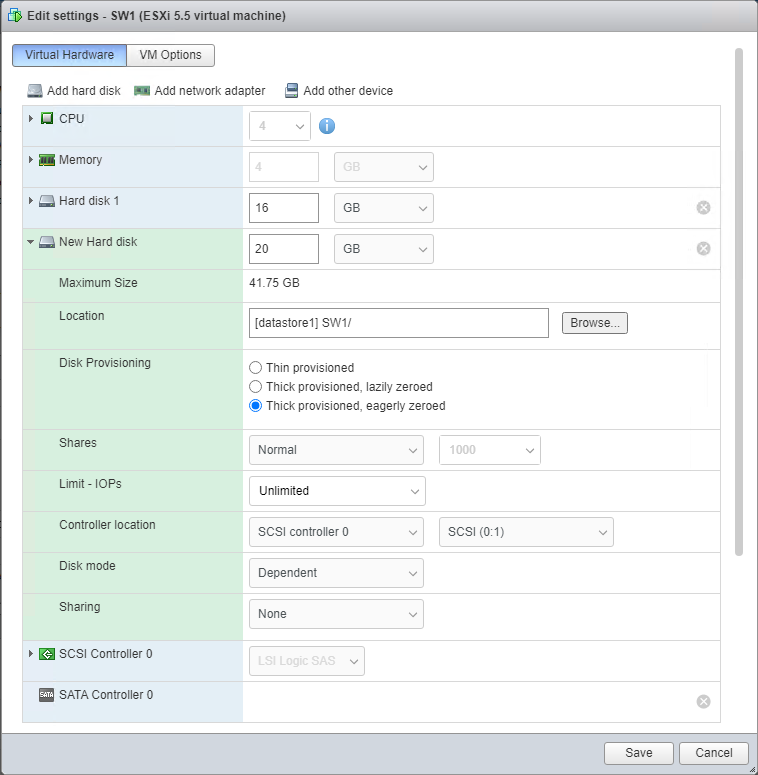

1. Add a new virtual disk to the StarWind Virtual SAN VM by editing its settings. Make sure it is Thick Provisioned Eager Zeroed. Virtual Disk should be located on the datastore provided by hardware RAID.

NOTE: Alternatively, the disk can be added to StarWind VSAN VM as RDM. The link to VMware documentation is below:

https://docs.vmware.com/en/VMware-vSphere/7.0/com.vmware.vsphere.vm_admin.doc/GUID-4236E44E-E11F-4EDD-8CC0-12BA664BB811.html

NOTE: If a separate RAID controller is available, it can be used as dedicated storage for StarWind VM, and RAID controller can be added to StarWind VM as a PCI device. In this case RAID volume will be available as a virtual disk in the Drives section in the Web console. Follow the instructions in the section below on how to add RAID controller as PCI device to StarWind VM.

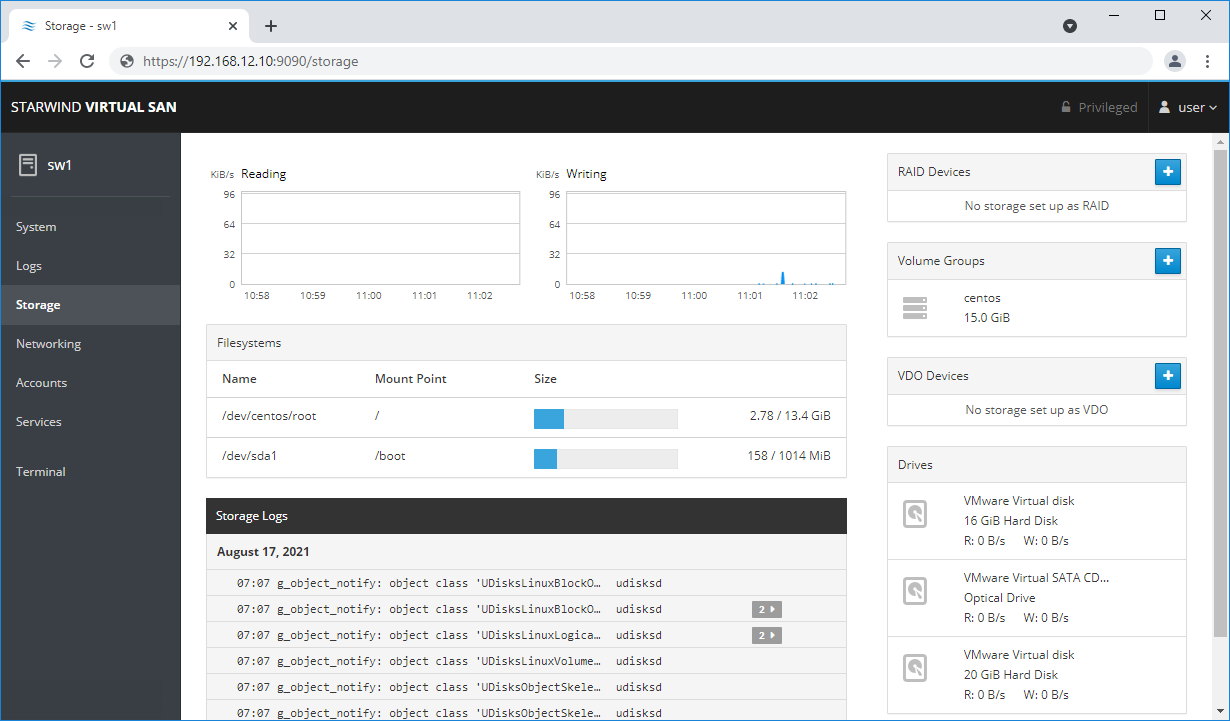

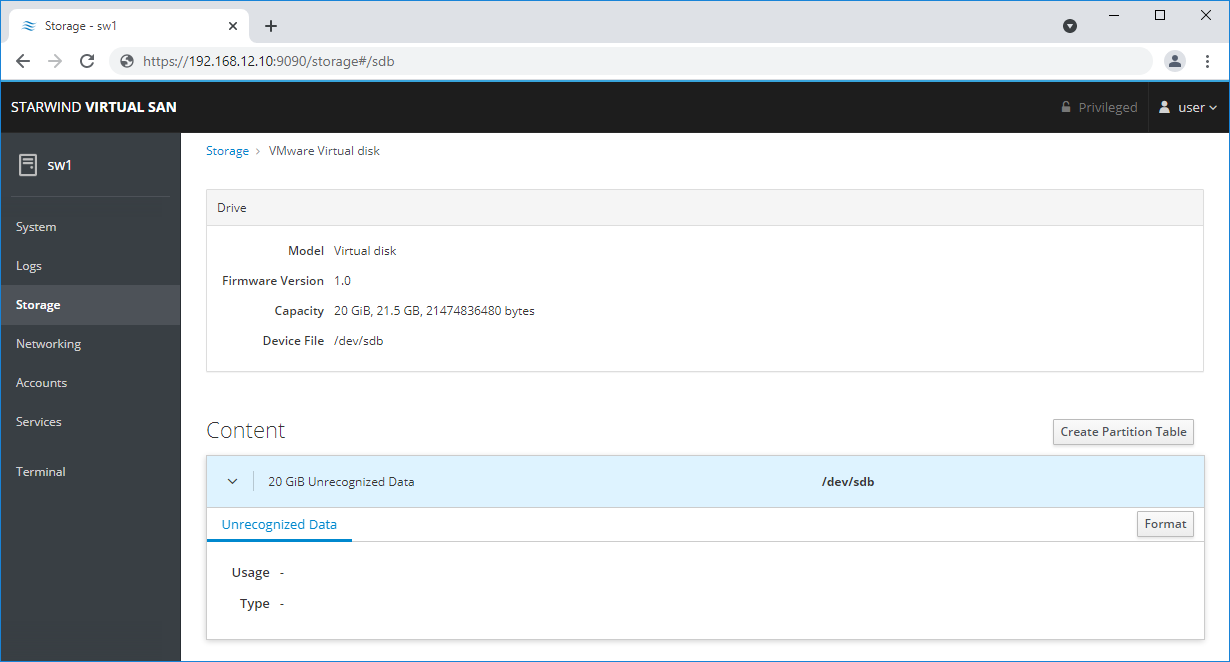

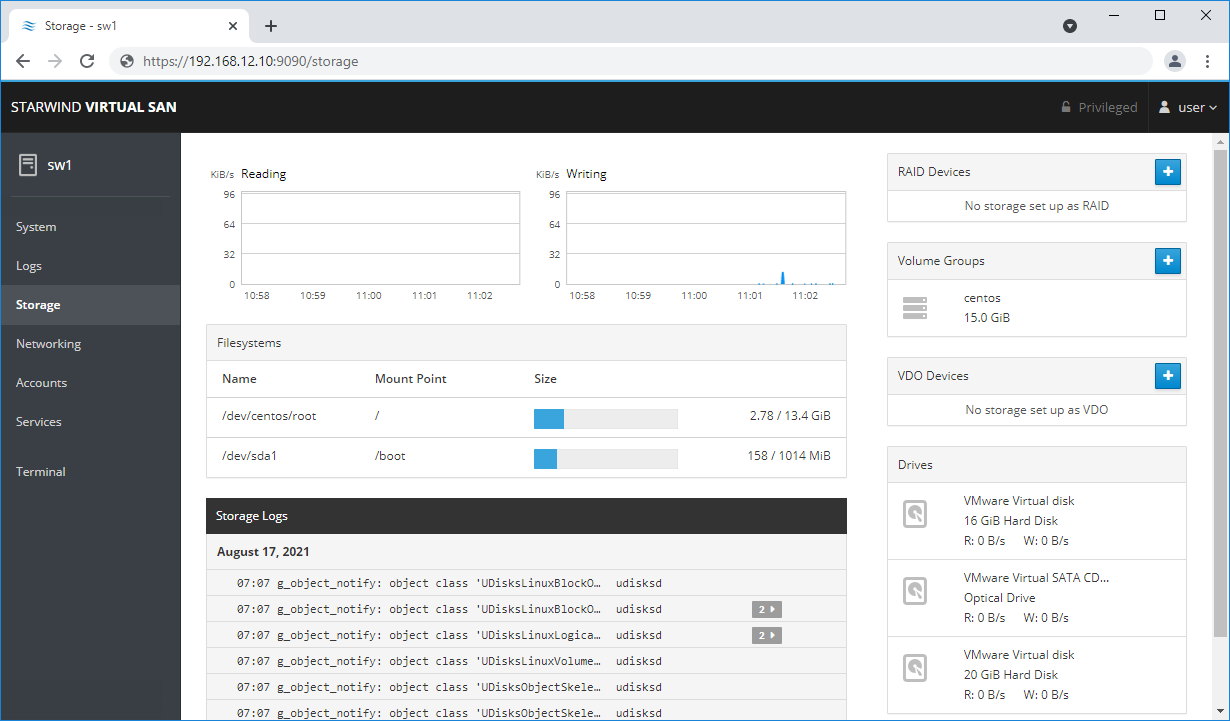

2. Log in to the StarWind VSAN VM web console, find the recently added Virtual Disk in the Storage section under Drives, and select it.

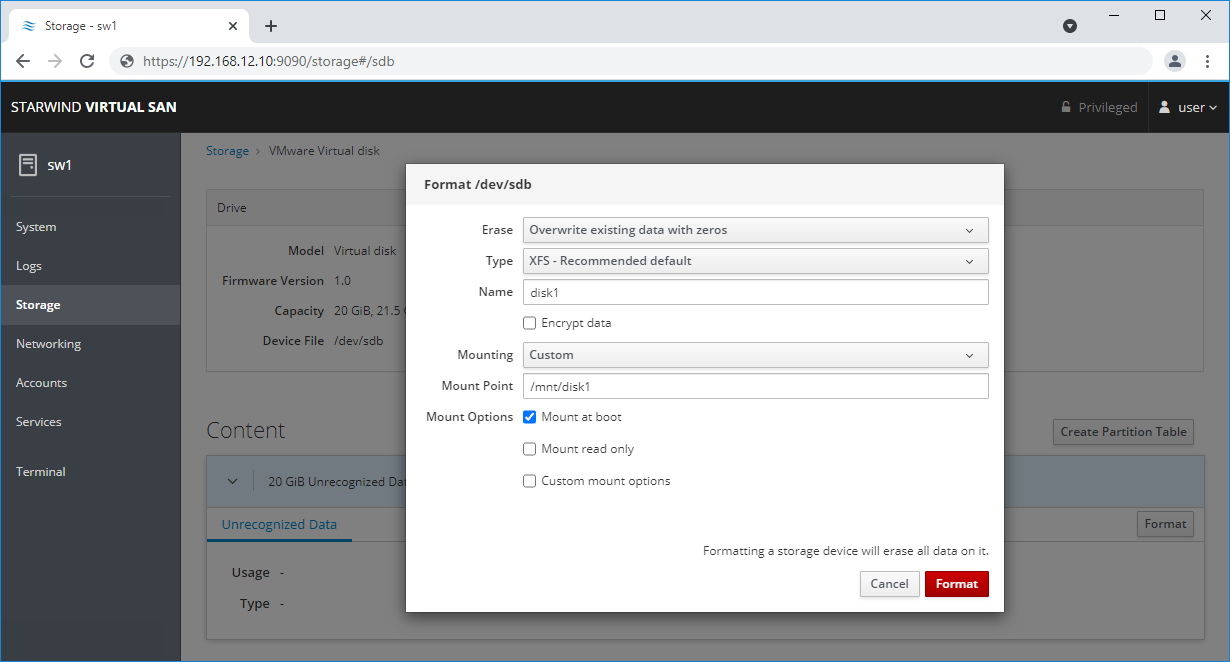

3. The added disk does not have any partitions or filesystem. Press Create partition table and then press Format to create the partition and format it.

NOTE: It is not necessary to overwrite data while creating partition.

4. Create the XFS partition. Specify the name and erase option. The mount point should be as follows: /mnt/%yourdiskname% . Click Format. To enable OS boot when the mount point is missing (e.g., due to a hardware failure), add nofail as a boot option.

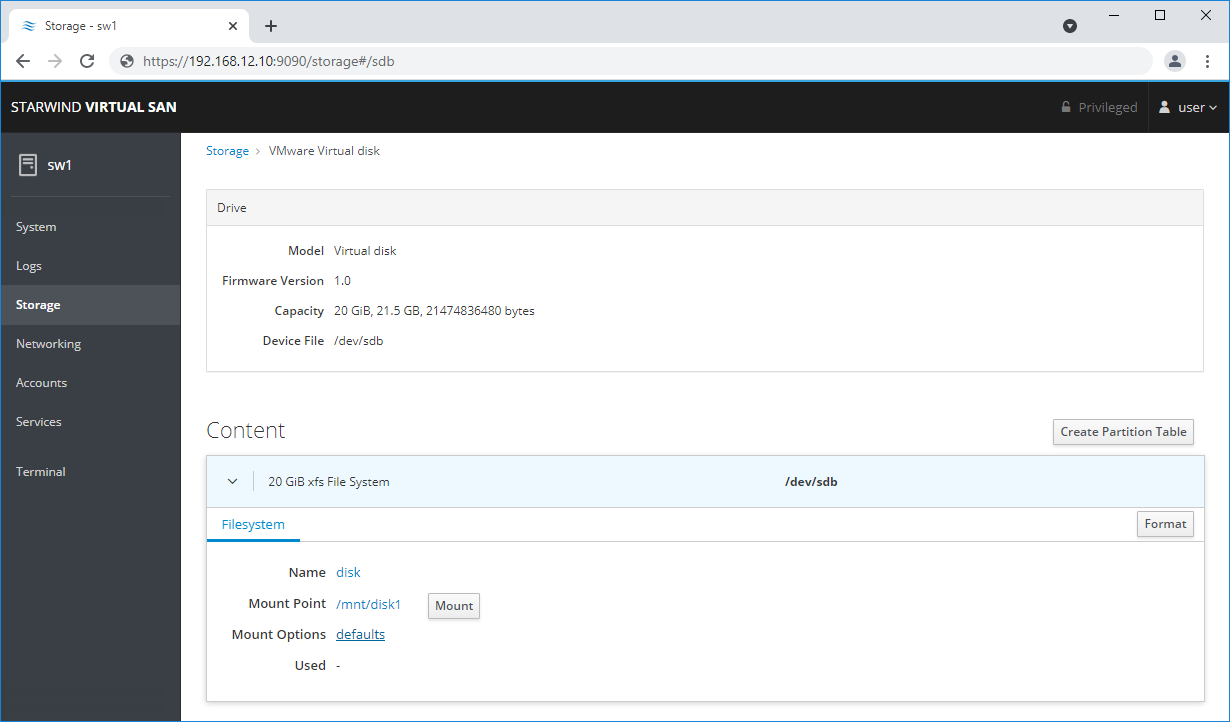

5. On the storage page of the disk, navigate to the Filesystem tab. Click Mount.

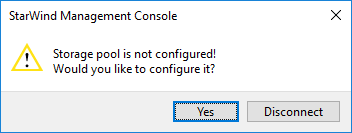

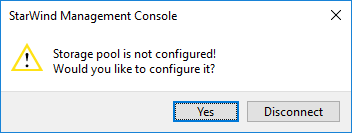

6. Using StarWind Management Console, connect to StarWind Virtual SAN VM and configure storage pool (default storage for StarWind devices) by clicking Yes.

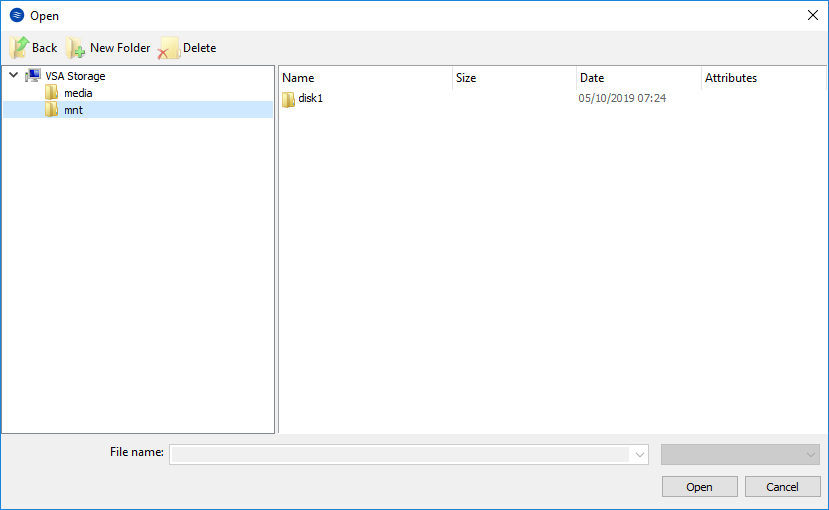

7. Select the disk which was recently mounted.

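To illustrate what the nofail option from step 4 amounts to, the sketch below prints an /etc/fstab-style line for an XFS file system. The web console manages the mount configuration itself, so this is only an approximation of the resulting entry; the device /dev/sdb1, the mount point /mnt/sdb1, and the function name fstab_entry are assumptions for illustration.

```shell
#!/bin/sh
# Print an /etc/fstab-style line for an XFS file system.
# Arguments: device, mount point, comma-separated mount options.
fstab_entry() {
    printf '%s %s xfs %s 0 0\n' "$1" "$2" "$3"
}

# nofail lets the OS boot even when the device is absent.
fstab_entry /dev/sdb1 /mnt/sdb1 defaults,nofail
```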

Configuring StarWind Storage on Top of Software RAID

Make sure that the prerequisites for deploying Software RAID with StarWind Virtual SAN are met:

- The ESXi hosts have all the drives connected through HBA or RAID controller in HBA mode

- StarWind Virtual SAN for vSphere VM is deployed on the ESXi server and turned off

- StarWind Virtual SAN VM is installed on a separate storage device available to the ESXi host (e.g. SSD, HDD etc.)

- HBA or RAID controller can be added via a DirectPath I/O passthrough device to a StarWind Virtual SAN VM without affecting ESXi host work

PCI Device Configuration

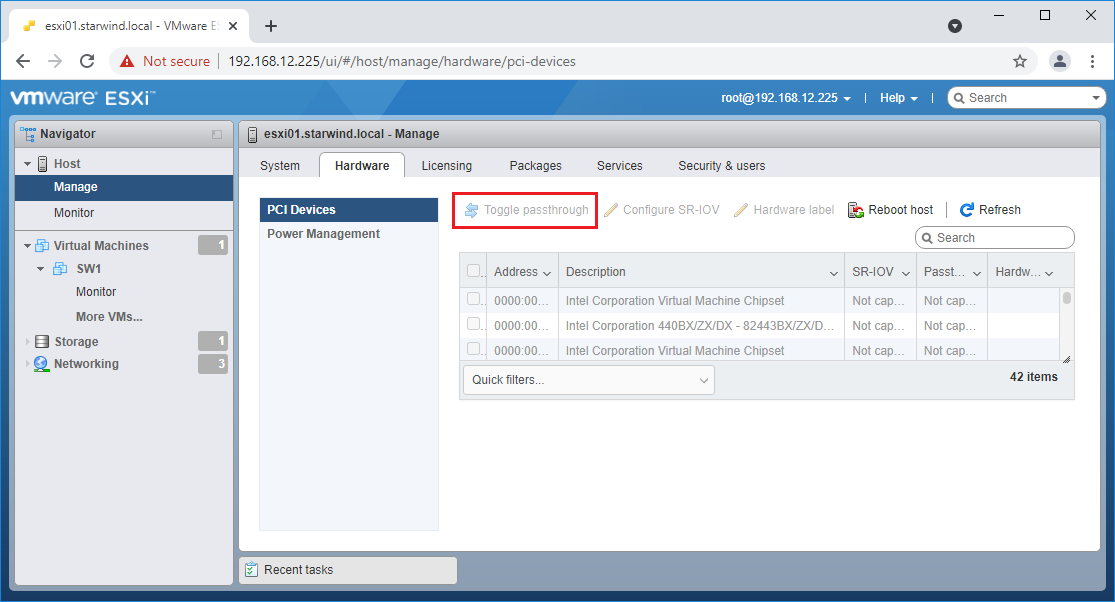

1. Log in to the ESXi host where StarWind Virtual SAN VM is installed.

2. In the Navigator, go to Manage, and in the Hardware tab, select PCI Devices.

3. Locate the HBA/RAID Controller of the ESXi host. Check the box on the appropriate PCI device. Click Toggle passthrough.

4. Restart ESXi host to make PCI device available to VMs.

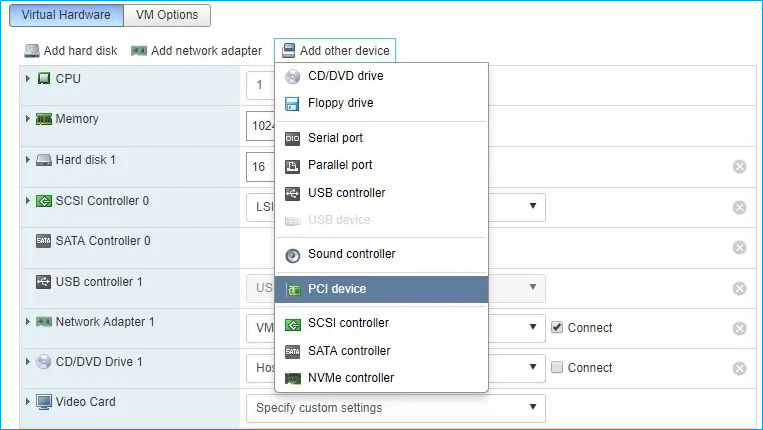

5. Right-click on the StarWind Virtual SAN VM to Edit Settings.

7. Click ADD NEW DEVICE. Select PCI Device.

8. Add HBA/RAID Controller to the VM. Reserve memory for the StarWind Virtual Machine. Click OK.

9. Boot StarWind Virtual SAN VM.

10. Repeat steps 1-8 for all hosts where StarWind Virtual SAN for vSphere is deployed.

11. Log in to the StarWind Virtual SAN VM via its IP address. The default credentials:

Login: user

Password: rds123RDS

NOTE: Please make sure that the default password is changed.

12. Go to the Storage page. The Drives section shows the drives connected to HBA/RAID Controller (if available). For each disk, create partition table.

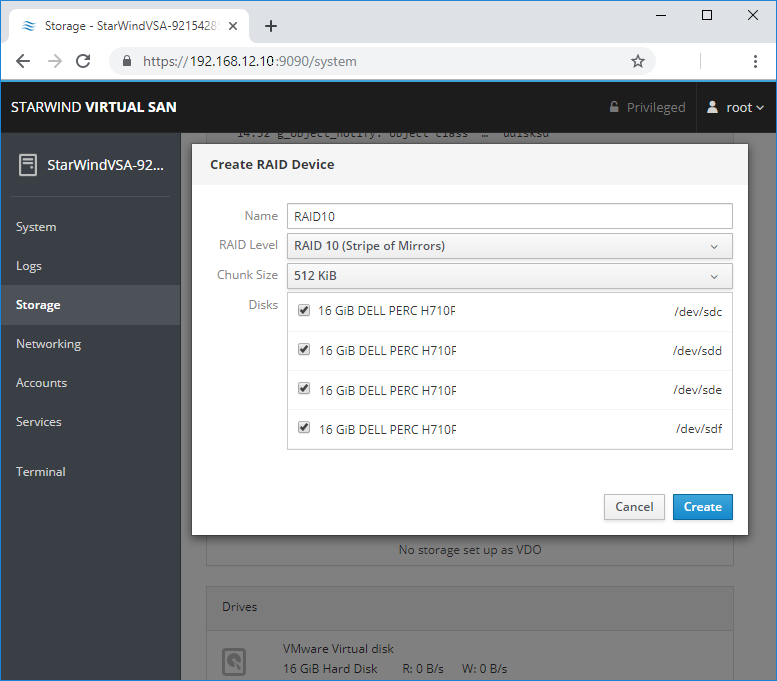

13. Click “+” in the RAID Devices section to create Software RAID. (In the current example, RAID 10 will be created with 4 HDD drives.) The recommended chunk size depends on the number of disks and the RAID level, as shown in the table below:

| RAID Level | Chunk size for HDD Arrays | Chunk size for SSD Arrays |
|---|---|---|
| 0 | Disk quantity * 4 KB | Disk quantity * 8 KB |
| 5 | (Disk quantity – 1) * 4 KB | (Disk quantity – 1) * 8 KB |
| 6 | (Disk quantity – 2) * 4 KB | (Disk quantity – 2) * 8 KB |
| 10 | (Disk quantity * 4 KB)/2 | (Disk quantity * 8 KB)/2 |

StarWind Software RAID recommended settings can be found here:

https://knowledgebase.starwindsoftware.com/guidance/recommended-raid-settings-for-hdd-and-ssd-disks/

14. Select the drives to add to the array.

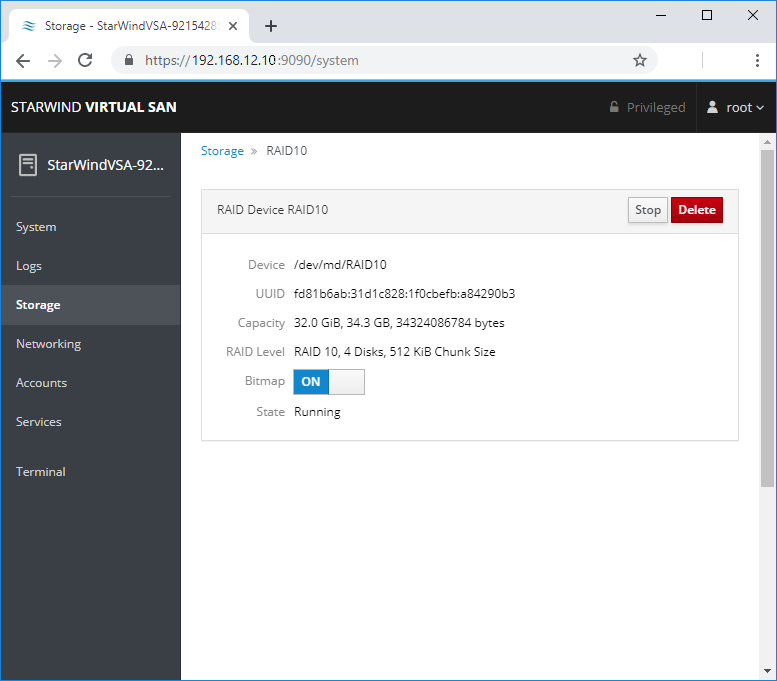

15. After the synchronization is finished, find the RAID array created. Press Create partition table and press Format afterward to create the partition and format it.

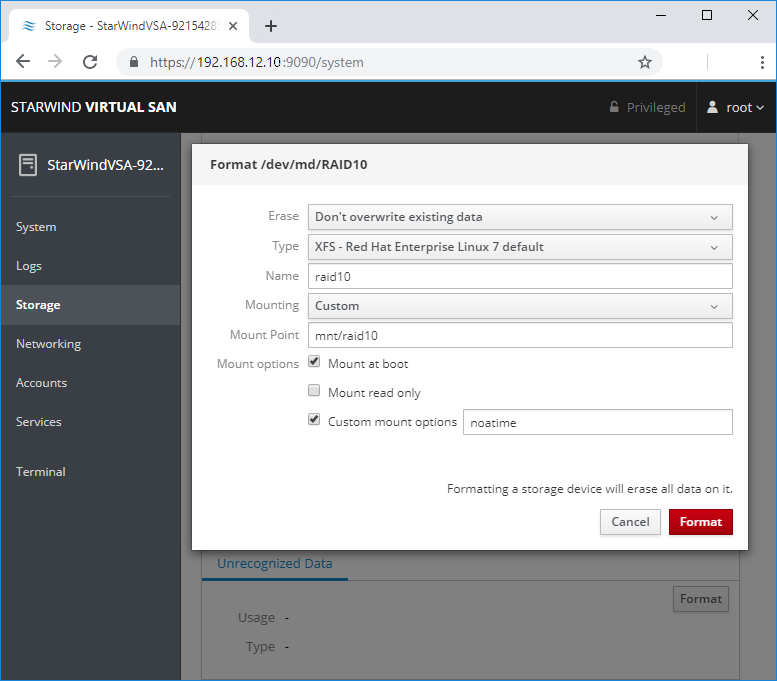

NOTE: It is not necessary to overwrite data while creating a partition.

16. Create the XFS partition. Mount point should be as follows: /mnt/%yourdiskname% . Select the Custom mounting option and type noatime. To enable OS boot when mount point is missing (e.g., hardware failure), add nofail as a boot option. Click Format.

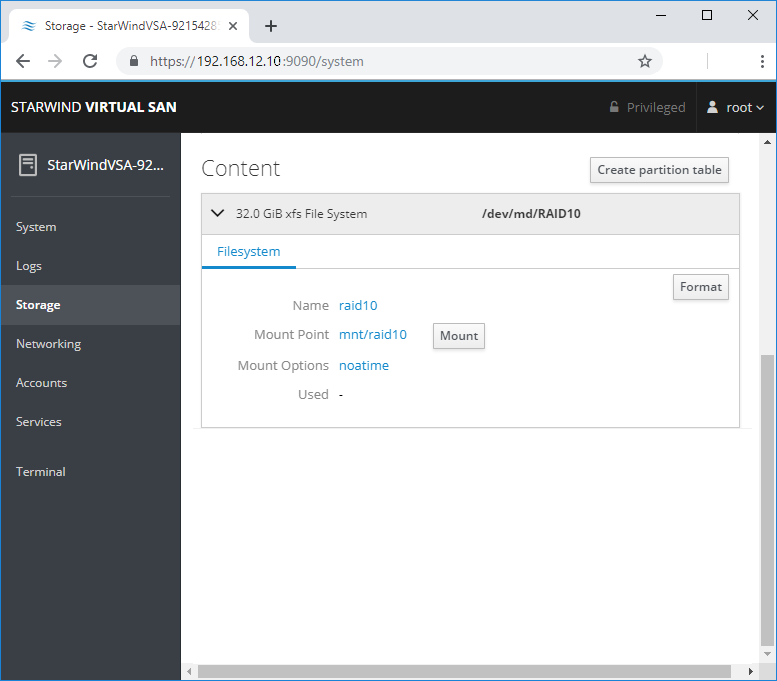

17. On the storage page of the disk, navigate to the Filesystem tab. Click Mount.

18. Connect to StarWind Virtual SAN from StarWind Management Console or from Web Console. Click Yes.

19. Select the disk recently mounted.
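The chunk-size table from step 13 can be expressed as a small calculator. This is a sketch of the table's arithmetic only, with a hypothetical function name chunk_kb; it does not create or modify any array.

```shell
#!/bin/sh
# Print the chunk size in KB according to the table in step 13.
# Arguments: RAID level (0, 5, 6, 10), disk count, media type (hdd or ssd).
chunk_kb() (
    level=$1
    disks=$2
    media=$3
    case $media in
        hdd) base=4 ;;
        ssd) base=8 ;;
        *) echo "unknown media type: $media" >&2; exit 1 ;;
    esac
    case $level in
        0)  echo $(( disks * base )) ;;
        5)  echo $(( (disks - 1) * base )) ;;
        6)  echo $(( (disks - 2) * base )) ;;
        10) echo $(( disks * base / 2 )) ;;
        *)  echo "unsupported RAID level: $level" >&2; exit 1 ;;
    esac
)

# The example from this guide: RAID 10 built from 4 HDD drives.
chunk_kb 10 4 hdd
```

For the RAID 10 example with 4 HDD drives, the table gives (4 * 4 KB)/2 = 8 KB.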

Creating StarWind HA LUNs using PowerShell

1. Open PowerShell ISE as Administrator.

2. Open StarWindX sample CreateHA_2.ps1 using PowerShell ISE. It can be found here: C:\Program Files\StarWind Software\StarWind\StarWindX\Samples\

NOTE: The script below creates a 1 TB, two-node HA device with the Heartbeat failover strategy on StarWind nodes with the management IP addresses 192.168.0.1 and 192.168.0.2, respectively.

The IP addresses 172.16.10.1 and 172.16.10.2 are used as heartbeat interfaces along with 192.168.0.1 and 192.168.0.2 for redundancy.

The IP addresses 172.16.20.1 and 172.16.20.2, as well as 172.16.21.1 and 172.16.21.2, are used on the respective nodes for device synchronization between the nodes.

The script does not create a directory. Make sure you create the directory listed as $imagePath value before running the script.

Make sure that ports 3261 and 3260 are open.

The same approach applies to CreateHA_3.ps1, which creates a 3-way replica HA device.

3. Configure script parameters according to the following example:

param($addr="192.168.0.1", $port=3261, $user="root", $password="starwind",

$addr2="192.168.0.2", $port2=$port, $user2=$user, $password2=$password,

#common

$initMethod="NotSynchronize",

$size=1048576,

$sectorSize=512,

$failover=0,

$bmpType=1,

$bmpStrategy=0,

#primary node

$imagePath="/mnt/sdb1/volume1",

$imageName="device1",

$createImage=$true,

$storageName="",

$targetAlias="target1",

$autoSynch=$true,

$poolName="pool1",

$syncSessionCount=1,

$aluaOptimized=$true,

$cacheMode="none",

$cacheSize=0,

$syncInterface="#p2=172.16.20.2:3260,172.16.21.2:3260",

$hbInterface="#p2=172.16.10.2:3260,192.168.0.2:3260",

$createTarget=$true,

$bmpFolderPath="",

#secondary node

$imagePath2="/mnt/sdb1/volume1",

$imageName2="device1",

$createImage2=$true,

$storageName2="",

$targetAlias2="target1",

$autoSynch2=$true,

$poolName2="pool1",

$syncSessionCount2=1,

$aluaOptimized2=$false,

$cacheMode2=$cacheMode,

$cacheSize2=$cacheSize,

$syncInterface2="#p1=172.16.20.1:3260,172.16.21.1:3260",

$hbInterface2="#p1=172.16.10.1:3260,192.168.0.1:3260",

$createTarget2=$true,

$bmpFolderPath2=""

)

Import-Module StarWindX

try

{

Enable-SWXLog -level SW_LOG_LEVEL_DEBUG

$server = New-SWServer -host $addr -port $port -user $user -password $password

$server.Connect()

$firstNode = new-Object Node

$firstNode.HostName = $addr

$firstNode.HostPort = $port

$firstNode.Login = $user

$firstNode.Password = $password

$firstNode.ImagePath = $imagePath

$firstNode.ImageName = $imageName

$firstNode.Size = $size

$firstNode.CreateImage = $createImage

$firstNode.StorageName = $storageName

$firstNode.TargetAlias = $targetAlias

$firstNode.AutoSynch = $autoSynch

$firstNode.SyncInterface = $syncInterface

$firstNode.HBInterface = $hbInterface

$firstNode.PoolName = $poolName

$firstNode.SyncSessionCount = $syncSessionCount

$firstNode.ALUAOptimized = $aluaOptimized

$firstNode.CacheMode = $cacheMode

$firstNode.CacheSize = $cacheSize

$firstNode.FailoverStrategy = $failover

$firstNode.CreateTarget = $createTarget

$firstNode.BitmapStoreType = $bmpType

$firstNode.BitmapStrategy = $bmpStrategy

$firstNode.BitmapFolderPath = $bmpFolderPath

#

# device sector size. Possible values: 512 or 4096(May be incompatible with some clients!) bytes.

#

$firstNode.SectorSize = $sectorSize

$secondNode = new-Object Node

$secondNode.HostName = $addr2

$secondNode.HostPort = $port2

$secondNode.Login = $user2

$secondNode.Password = $password2

$secondNode.ImagePath = $imagePath2

$secondNode.ImageName = $imageName2

$secondNode.CreateImage = $createImage2

$secondNode.StorageName = $storageName2

$secondNode.TargetAlias = $targetAlias2

$secondNode.AutoSynch = $autoSynch2

$secondNode.SyncInterface = $syncInterface2

$secondNode.HBInterface = $hbInterface2

$secondNode.SyncSessionCount = $syncSessionCount2

$secondNode.ALUAOptimized = $aluaOptimized2

$secondNode.CacheMode = $cacheMode2

$secondNode.CacheSize = $cacheSize2

$secondNode.FailoverStrategy = $failover

$secondNode.CreateTarget = $createTarget2

$secondNode.BitmapFolderPath = $bmpFolderPath2

$device = Add-HADevice -server $server -firstNode $firstNode -secondNode $secondNode -initMethod $initMethod

while ($device.SyncStatus -ne [SwHaSyncStatus]::SW_HA_SYNC_STATUS_SYNC)

{

$syncPercent = $device.GetPropertyValue("ha_synch_percent")

Write-Host "Synchronizing: $($syncPercent)%" -foreground yellow

Start-Sleep -m 2000

$device.Refresh()

}

}

catch

{

Write-Host $_ -foreground red

}

finally

{

$server.Disconnect()

}
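Two of the values in the parameter block are easy to get wrong by hand: the device size, which is given in MB, and the partner interface strings. The helpers below are a sketch with hypothetical names (tb_to_mb, partner_ifaces) that reproduce the values used in the example above; they do not call StarWindX and only print text to paste into the script.

```shell
#!/bin/sh
# Convert whole terabytes to megabytes for the $size parameter.
tb_to_mb() {
    echo $(( $1 * 1024 * 1024 ))
}

# Build a "#pN=ip:3260,ip:3260" string as used by $syncInterface and $hbInterface.
# Arguments: partner index, then one or more partner IPv4 addresses.
partner_ifaces() (
    idx=$1
    shift
    list=
    for ip in "$@"; do
        list="${list:+$list,}$ip:3260"
    done
    printf '#p%s=%s\n' "$idx" "$list"
)

tb_to_mb 1
partner_ifaces 2 172.16.20.2 172.16.21.2
partner_ifaces 2 172.16.10.2 192.168.0.2
```

The first call yields the 1048576 used for $size, and the other two yield the $syncInterface and $hbInterface values of the primary node.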

Detailed explanation of script parameters:

-addr, -addr2 — partner nodes IP address.

Format: string. Default value: 192.168.0.1, 192.168.0.2

allowed values: localhost, IP-address

-port, -port2 — local and partner node port.

Format: string. Default value: 3261

-user, -user2 — local and partner node user name.

Format: string. Default value: root

-password, -password2 — local and partner node user password.

Format: string. Default value: starwind

#common

-initMethod – set the initial synchronization option.

Format: string.

Values:

Clear – default

NotSynchronize – skips the initial synchronization (use ONLY if the device contains no data that would need to be synchronized).

SyncFromFirst or SyncFromSecond or SyncFromThird – runs full synchronization from the specific node. Use it for recreating replicas.

-size – set size for HA-device (in MB)

Format: integer. Default value: 12

-sectorSize – set sector size for HA-device

Format: integer. Default value: 512

allowed values: 512, 4096

-failover – set type failover strategy

Format: integer. Default value: 0 (Heartbeat)

allowed values: 0, 1 (Node Majority)

-bmpType – set bitmap type, is set for both partners at once

Format: integer. Default value: 1 (RAM)

allowed values: 1, 2 (DISK)

-bmpStrategy – set journal strategy, is set for both partners at once

Format: integer. Default value: 0

allowed values: 0, 1 – Best Performance (Failure), 2 – Fast Recovery (Continuous)

-storageName is used only if you plan to add the partner to the existing device. For CreateHA_2.ps1 use, leave it as is.

#primary node

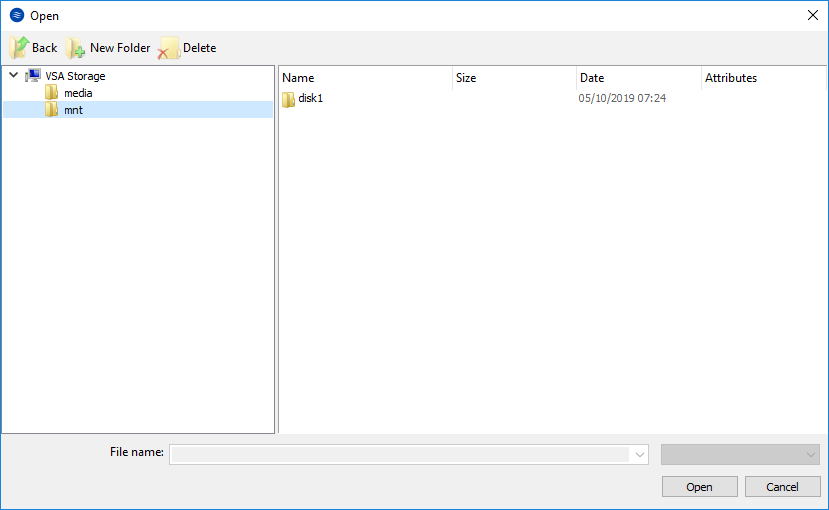

-imagePath – set path to store the device file

Format: string. Default value: “My computer\C\starwind”. For Linux the following format should be used: “VSA Storage\mnt\mount_point”

-imageName – set name device

Format: string. Default value: masterImg21

-createImage – set create image file

Format: boolean. Default value: true

-targetAlias – set alias for target

Format: string. Default value: targetha21

-poolName – set storage pool. Do not change it and keep default value.

Format: string. Default value: pool1

-aluaOptimized – set Alua Optimized

Format: boolean. Default value: true

-cacheMode – set type L1 cache (optional parameter)

Format: string. Default value: wb

allowed values: none, wb, wt

-cacheSize – set size for L1 cache in MB (optional parameter)

Format: integer. Default value: 128

allowed values: 1 and more

-syncInterface – set sync channel IP-address from partner node

Format: string. Default value: “#p2={0}:3260”

-hbInterface – set heartbeat channel IP-address from partner node

Format: string. Default value: “”

-createTarget – set creating target

Format: string. Default value: true

Even if you do not specify the parameter -createTarget, the target will be created automatically.

If the parameter is set as -createTarget $false, then an attempt will be made to create the device with existing targets, the names of which are specified in the -targetAlias (targets must already be created)

-bmpFolderPath – set path to save bitmap file

Format: string.

#secondary node

-imagePath2 – set path to store the device file

Format: string. Default value: “My computer\C\starwind”. For Linux the following format should be used: “VSA Storage\mnt\mount_point”

-imageName2 – set name device

Format: string. Default value: masterImg21

-createImage2 – set create image file

Format: boolean. Default value: true

-targetAlias2 – set alias for target

Format: string. Default value: targetha22

-poolName2 – set storage pool. Do not change it and keep default value.

Format: string. Default value: pool1

-aluaOptimized2 – set Alua Optimized

Format: boolean. Default value: true

-cacheMode2 – set type L1 cache (optional parameter)

Format: string. Default value: wb

allowed values: wb, wt

-cacheSize2 – set size for L1 cache in MB (optional parameter)

Format: integer. Default value: 128

allowed values: 1 and more

-syncInterface2 – set sync channel IP-address from partner node

Format: string. Default value: “#p1={0}:3260”

-hbInterface2 – set heartbeat channel IP-address from partner node

Format: string. Default value: “”

-createTarget2 – set creating target

Format: string. Default value: true

Even if you do not specify the parameter -createTarget2, the target will be created automatically.

If the parameter is set as -createTarget2 $false, then an attempt will be made to create the device with existing targets, the names of which are specified in the -targetAlias2 (targets must already be created)

-bmpFolderPath2 – set path to save bitmap file

Format: string.

IMPORTANT: If the script has to be executed again with the same parameters (for example, because the first execution failed), make sure to do the following on one node at a time before the next attempt:

1. Stop StarWind Service:

sudo systemctl stop starwind-virtual-san

2. Go to /opt/starwind/starwind-virtual-san/drive_c/starwind/headers and delete the headers you do not need.

3. Go to the underlying storage specified as $imagePath and delete the header and imagefile there.

4. Go to the folder with StarWind.cfg (/opt/starwind/starwind-virtual-san/drive_c/starwind/StarWind.cfg) and make a backup copy of it.

5. Edit StarWind.cfg:

sudo nano /opt/starwind/starwind-virtual-san/drive_c/starwind/StarWind.cfg

6. Navigate under <targets> and remove the target you do not need.

7. Navigate under <devices>, and remove the device entry you do not need.

8. Start the service:

sudo systemctl start starwind-virtual-san

9. Wait for the devices to synchronize.

10. Repeat for the remaining StarWind VSAN instance.

Selecting the Failover Strategy

StarWind provides 2 options for configuring a failover strategy:

Heartbeat

The Heartbeat failover strategy helps avoid the “split-brain” scenario, in which the HA cluster nodes are unable to synchronize but continue to accept write commands from the initiators independently. Split-brain can occur when all synchronization and heartbeat channels disconnect simultaneously and the partner nodes do not respond to the node’s requests. As a result, the StarWind service assumes the partner nodes are offline and continues operating in single-node mode using the data written to it.

If at least one heartbeat link is online, StarWind services can communicate with each other via this link. The device with the lowest priority will be marked as not synchronized and subsequently blocked for further read and write operations until the synchronization channel is restored. At the same time, the partner device on the synchronized node flushes data from the cache to the disk to preserve data integrity in case the node goes down unexpectedly. It is recommended to assign more independent heartbeat channels during the replica creation to improve system stability and avoid the “split-brain” issue.

With the heartbeat failover strategy, the storage cluster will continue working with only one StarWind node available.

Node Majority

The Node Majority failover strategy ensures the synchronization connection without any additional heartbeat links. The failure-handling process occurs when the node has detected the absence of the connection with the partner.

The main requirement for keeping the node operational is an active connection with more than half of the HA device’s nodes. Calculation of the available partners is based on their “votes”.

In case of a two-node HA storage, all nodes will be disconnected if there is a problem on the node itself, or in communication between them. Therefore, the Node Majority failover strategy requires the addition of the third Witness node or file share (SMB) which participates in the nodes count for the majority, but neither contains data on it nor is involved in processing clients’ requests. In case an HA device is replicated between 3 nodes, no Witness node is required.

With Node Majority failover strategy, failure of only one node can be tolerated. If two nodes fail, the third node will also become unavailable to clients’ requests.
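The majority rule can be illustrated with a few lines of shell. This sketch assumes one vote per participant (data nodes and witness alike) and that a node counts its own vote among the reachable ones; both are simplifying assumptions, and the function names are hypothetical rather than taken from StarWind.

```shell
#!/bin/sh
# Succeed when the reachable votes form a strict majority of all votes.
# Arguments: reachable votes (own vote included), total votes.
has_majority() {
    [ "$(( $1 * 2 ))" -gt "$2" ]
}

# Print the outcome for a given vote count.
report() {
    if has_majority "$1" "$2"; then
        echo "$1 of $2 votes: node stays operational"
    else
        echo "$1 of $2 votes: node stops serving clients"
    fi
}

report 1 2   # two nodes without witness, partner unreachable
report 2 3   # two nodes plus witness, partner unreachable
report 1 3   # three voters, both others unreachable
```

The first case shows why a two-node device needs a witness: a single remaining vote is exactly half, not more than half.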


Preparing Datastores

Adding Discover Portals

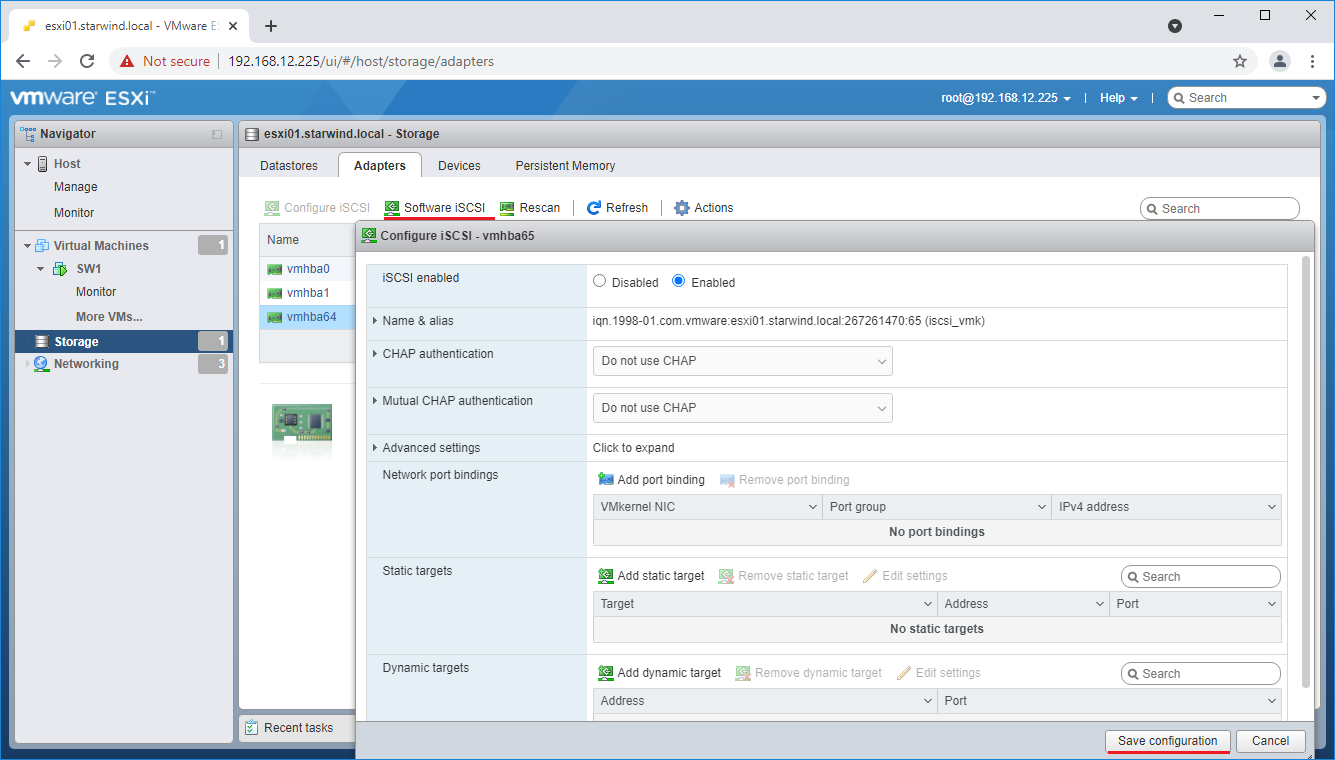

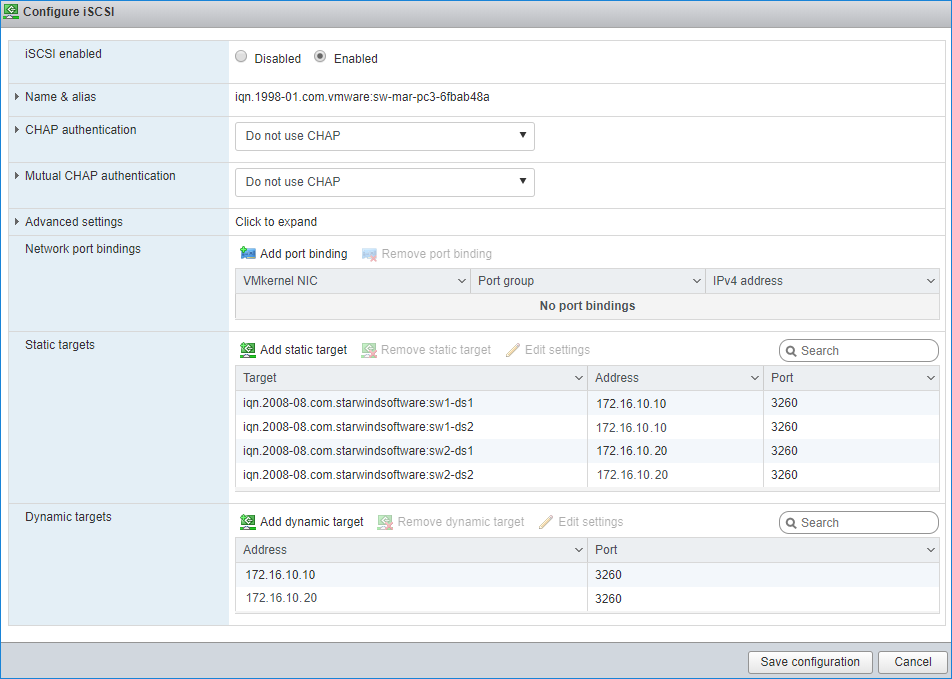

1. To connect the previously created devices to the ESXi host, click Storage -> Adapters -> Software iSCSI and, in the window that appears, choose the Enabled option to enable the Software iSCSI storage adapter. Click the Save configuration button.

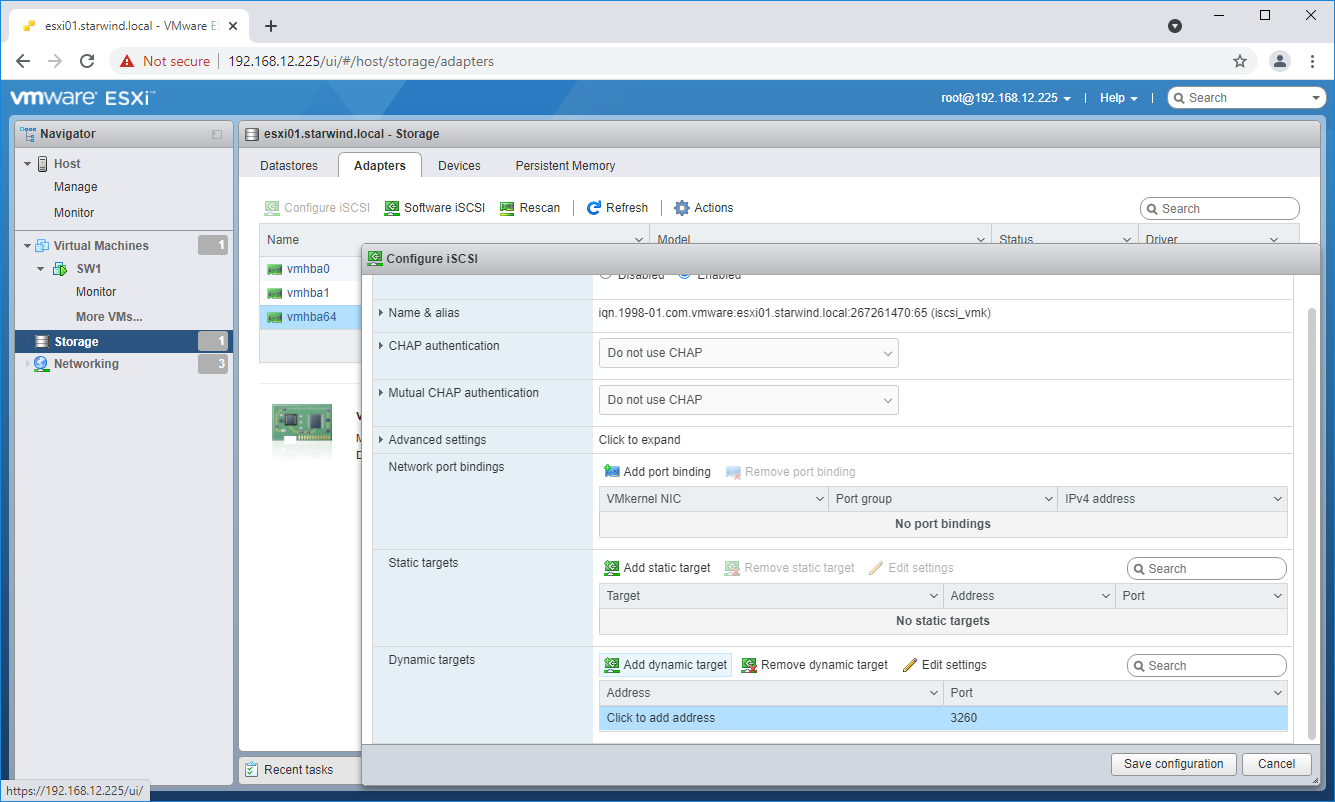

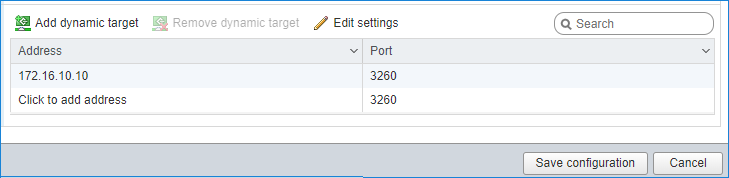

2. In the Configure iSCSI window, under Dynamic Targets, click on the Add dynamic target button to specify iSCSI interfaces.

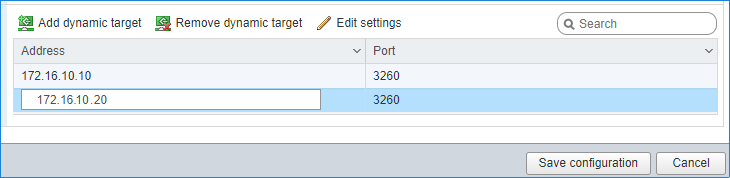

3. Enter the iSCSI IP addresses of all StarWind nodes for the iSCSI traffic.

Confirm the actions by pressing Save configuration.

4. The result should look like the image below.

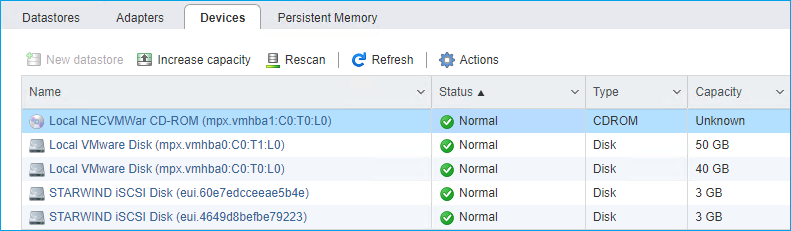

5. Click on the Rescan button to rescan storage.

6. Now, the previously created StarWind devices are visible to the system.

7. Repeat all the steps from this section on the other ESXi host, specifying corresponding IP addresses for the iSCSI subnet.
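For hosts managed over SSH, the steps above have esxcli counterparts. The sketch below prints the commands for review instead of running them. The adapter name vmhba64 is an assumption (the actual name can be looked up with esxcli iscsi adapter list), and the addresses are the iSCSI IPs used in this guide.

```shell
#!/bin/sh
# Dry run: print esxcli commands that enable software iSCSI,
# add dynamic (SendTargets) discovery addresses, and rescan.
# Arguments: iSCSI adapter name, then one or more target IPv4 addresses.
iscsi_cmds() (
    adapter=$1
    shift
    echo 'esxcli iscsi software set --enabled=true'
    for ip in "$@"; do
        printf 'esxcli iscsi adapter discovery sendtarget add --adapter=%s --address=%s:3260\n' "$adapter" "$ip"
    done
    printf 'esxcli storage core adapter rescan --adapter=%s\n' "$adapter"
)

# Hypothetical adapter name; iSCSI addresses of both StarWind nodes.
iscsi_cmds vmhba64 172.16.10.1 172.16.10.2
```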

Creating Datastores

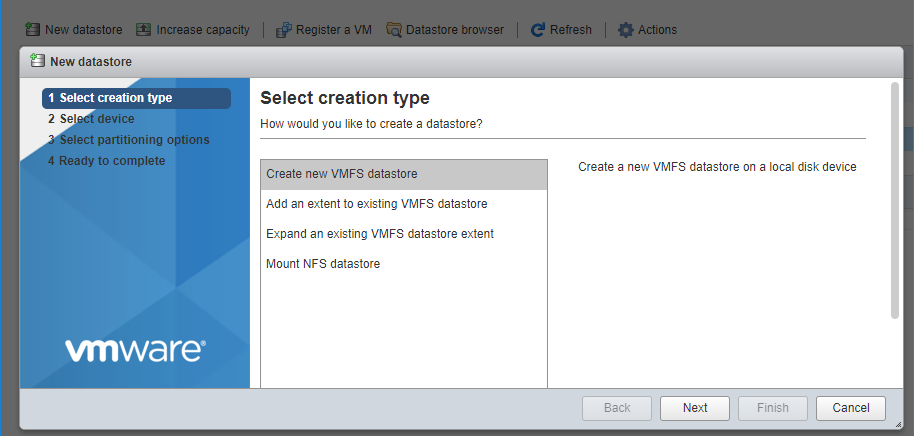

1. Open the Storage tab on one of your hosts and click on New Datastore.

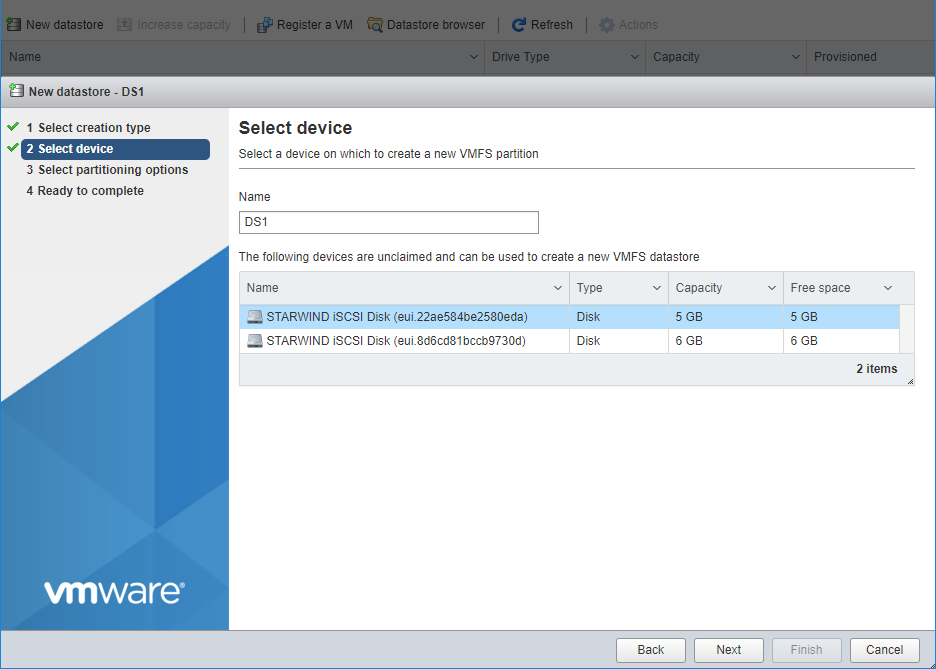

2. Specify the Datastore name, select the previously discovered StarWind device, and click Next.

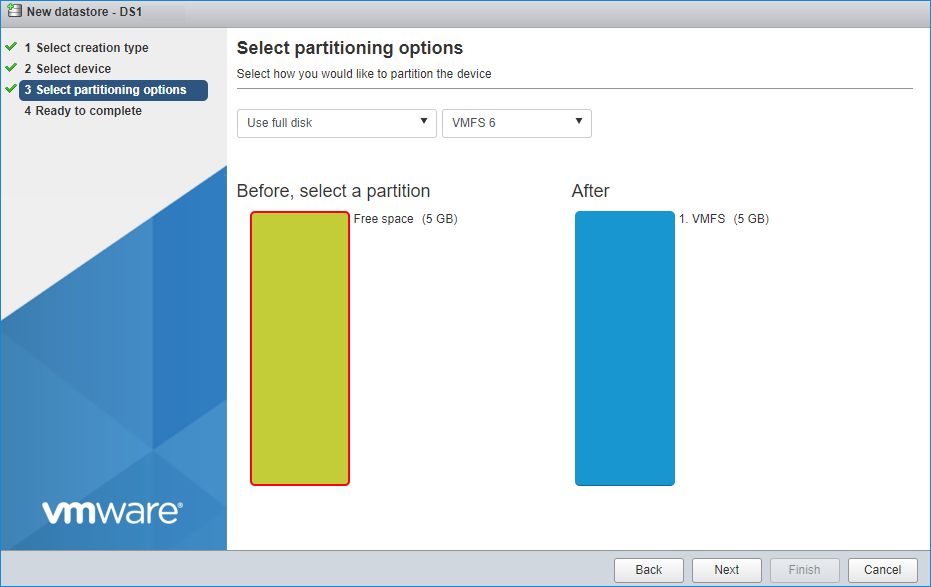

3. Enter datastore size and click Next.

4. Verify the settings and click Finish.

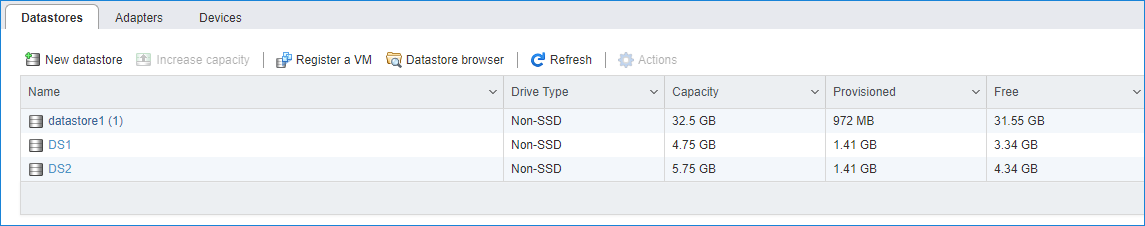

5. Add another Datastore (DS2) in the same way but select the second device for the second datastore.

6. Verify that your datastores (DS1, DS2) are connected to both hosts. Otherwise, rescan the storage adapter.

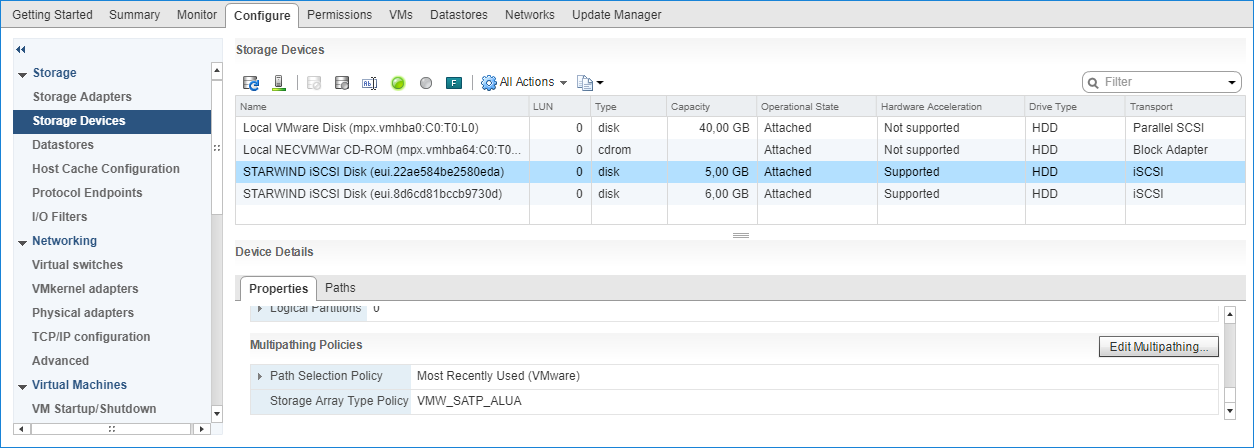

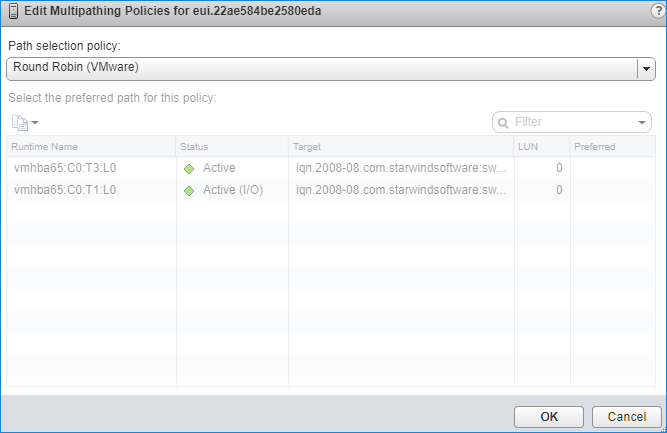

NOTE: The Rescan Script automatically changes the Path Selection Policy for datastores from Most Recently Used (VMware) to Round Robin (VMware). To check or change this parameter manually, the hosts should be connected to vCenter.

Multipathing configuration can be checked only from vCenter. To check it, click the Configure button, choose the Storage Devices tab, select the device, and click the Edit Multipathing button.

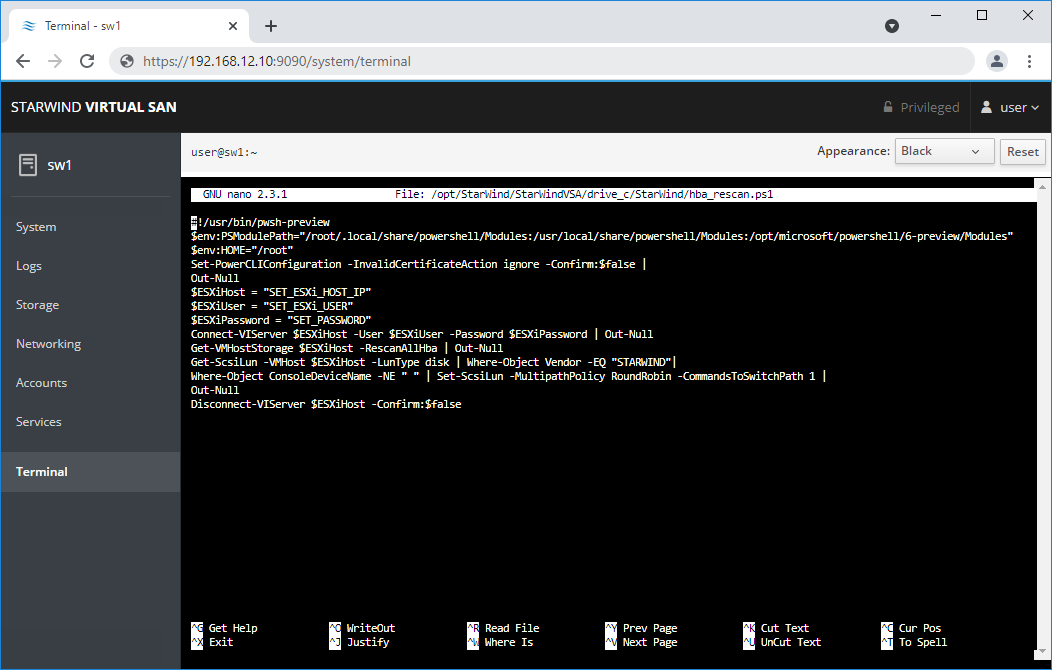
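Outside the graphical clients, the path selection policy of a device can also be inspected and set from the ESXi shell. The sketch below prints the two esxcli commands for a given device; the identifier naa.0000000000000000 is a placeholder, not a real device, and the function name psp_cmds is hypothetical.

```shell
#!/bin/sh
# Dry run: print esxcli commands that show the current path selection
# policy of a device and switch it to Round Robin (VMW_PSP_RR).
# Argument: device identifier (naa.*).
psp_cmds() {
    printf 'esxcli storage nmp device list --device=%s\n' "$1"
    printf 'esxcli storage nmp device set --device=%s --psp=VMW_PSP_RR\n' "$1"
}

# Placeholder identifier; replace with the ID of the StarWind device.
psp_cmds naa.0000000000000000
```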

Configuring an Automatic Storage Rescan

1. Open the Terminal page.

2. Edit file /opt/StarWind/StarWindVSA/drive_c/StarWind/hba_rescan.ps1 with the following command:

sudo nano /opt/StarWind/StarWindVSA/drive_c/StarWind/hba_rescan.ps1

3. In the appropriate lines, specify the IP address and login credentials of the ESXi host (see NOTE below) on which the current StarWind VM is stored and running:

$ESXiHost = "IP address"

$ESXiUser = "Login"

$ESXiPassword = "Password"

NOTE: In some cases the rescan script can be changed and storage rescan added for another ESXi host. Appropriate lines should be duplicated and changed with properly edited variables if required.

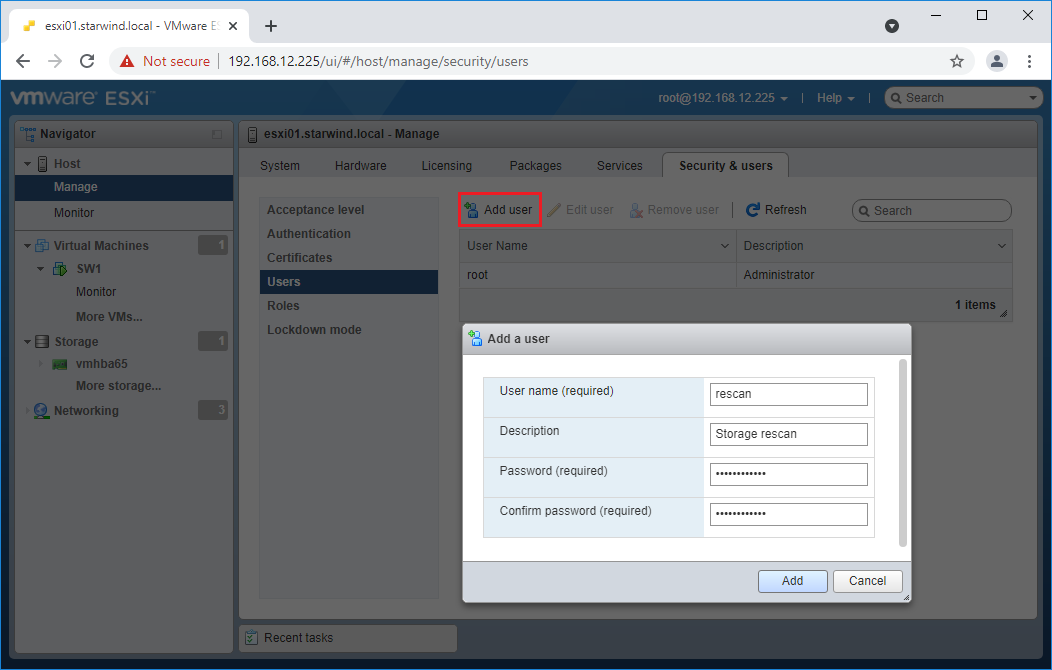

NOTE: In some cases, it makes sense to create a separate ESXi user for storage rescans. To create the user, please follow the steps below:

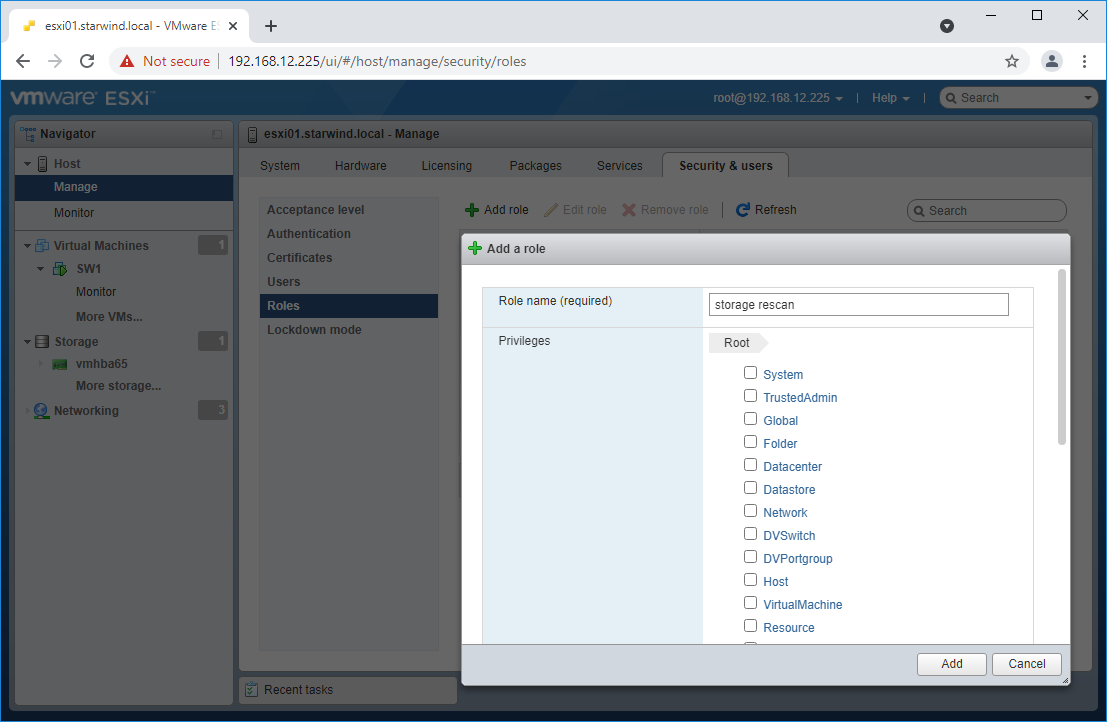

Log in to ESXi with the VMware Host Client. Click Manage, and under the Security & users tab, in the Users section, click the Add user button. In the window that appears, enter a user name and a password.

To create a new role, click the New Role button under the Roles section. Type a name for the new role, select privileges for it, and click OK.

The following privileges might be assigned: Host – Inventory, Config, Local Cim, and Global – Settings.

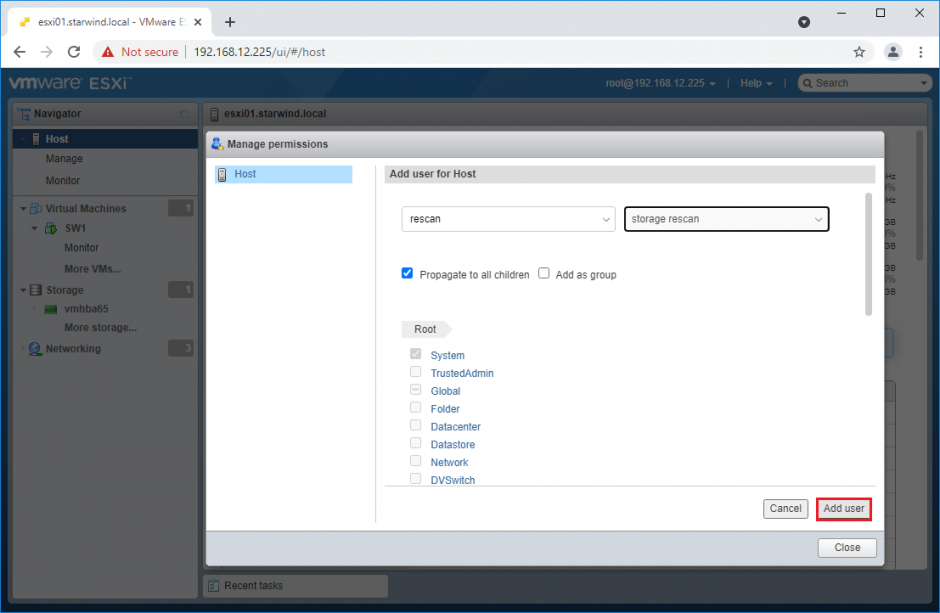

Assign permission to the storage rescan user for an ESXi host: right-click Host in the VMware Host Client inventory and click Permissions. In the window that appears, click Add user.

Click the arrow next to the Select a user text box and select the user that you want to assign a role to. Click the arrow next to the Select a role text box and select a role from the list.

(Optional) Select Propagate to all children or Add as group. Click Add user and click Close.

Make sure that the rescan script works by executing it from the VM: sudo /opt/StarWind/StarWindVSA/drive_c/StarWind/hba_rescan.ps1

4. Repeat all steps from this section on the other ESXi hosts.

Performance Tweaks

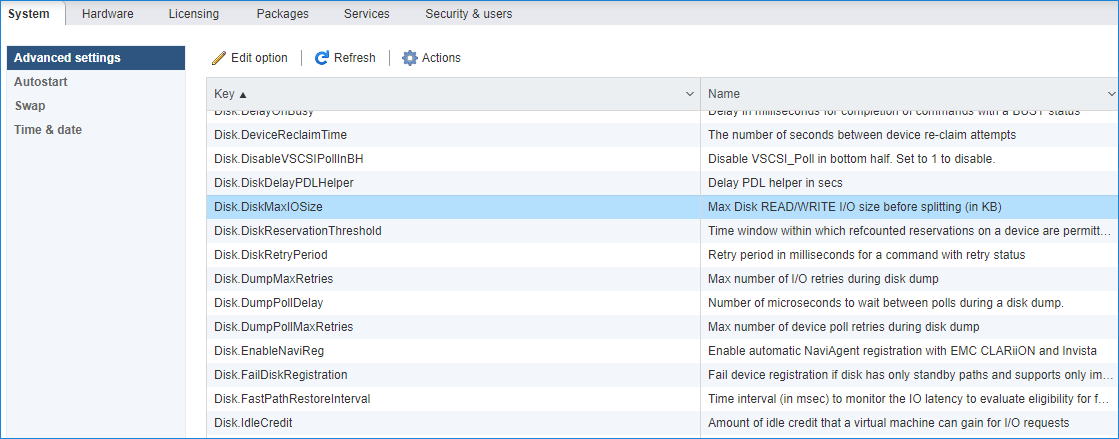

1. Click on the Configuration tab on all of the ESXi hosts and choose Advanced Settings.

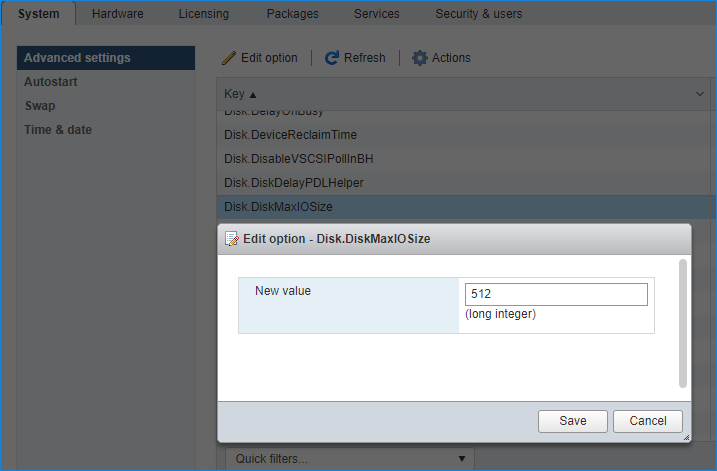

2. Select Disk and change the Disk.DiskMaxIOSize parameter to 512.

3. To optimize performance, change the I/O scheduler options according to the article below:

https://knowledgebase.starwindsoftware.com/guidance/starwind-vsan-for-vsphere-changing-linux-i-o-scheduler-to-optimize-storage-performance/

NOTE: On specific ESXi builds, changing Disk.DiskMaxIOSize to 512 might cause startup issues with Windows-based VMs located on the datastore. If VMs fail to start, leave this parameter at its default value or update the ESXi host to the next available build.
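The Disk.DiskMaxIOSize setting from step 2 is also reachable from the ESXi shell as the advanced option /Disk/DiskMaxIOSize. The sketch below prints the commands to inspect and change it, so that the current value can be noted before anything is modified; the function name max_io_cmds is hypothetical.

```shell
#!/bin/sh
# Dry run: print esxcli commands that show the current Disk.DiskMaxIOSize
# value and set it to a new one.
# Argument: new value.
max_io_cmds() {
    printf 'esxcli system settings advanced list --option=/Disk/DiskMaxIOSize\n'
    printf 'esxcli system settings advanced set --option=/Disk/DiskMaxIOSize --int-value=%s\n' "$1"
}

max_io_cmds 512
```

Running the list command first records the previous value in case the change has to be reverted.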

NOTE: To provide high availability for clustered VMs, deploy vCenter and add ESXi hosts to the cluster.

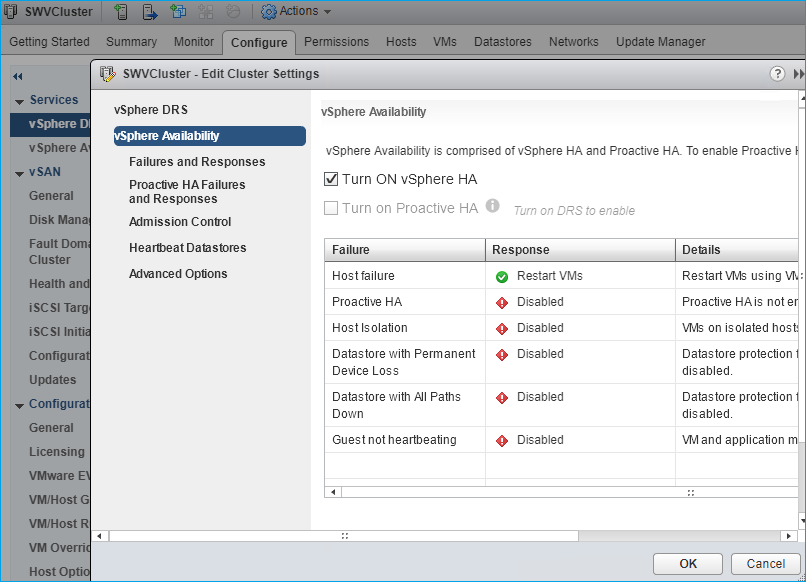

Click on Cluster -> Configure -> Edit and check the Turn on vSphere HA option if it is licensed.

Conclusion

By following this guide the end-user can get a StarWind Virtual SAN deployed on VMware vSphere, with VSAN set up as a Controller Virtual Machine (CVM). The guide offers key insights and steps to ensure a seamless deployment.