INTRODUCTION

Whether your IT infrastructure is big or small, it uses the Server Message Block (SMB) protocol in one form or another to access files, printers, and other resources. However, SMB has traditionally not been used to connect to storage for ‘enterprise’ workloads. This prompted Microsoft to upgrade SMB so that it could provide file-based access to application data. The change made the protocol suitable for enterprise use cases such as Microsoft Hyper-V and SQL Server. As a result, SMB3 became an integral part of scalable failover clustered file servers, better known as Scale-Out File Servers (SOFS). Nevertheless, traditional SOFS implementations pose some new challenges.

PROBLEM

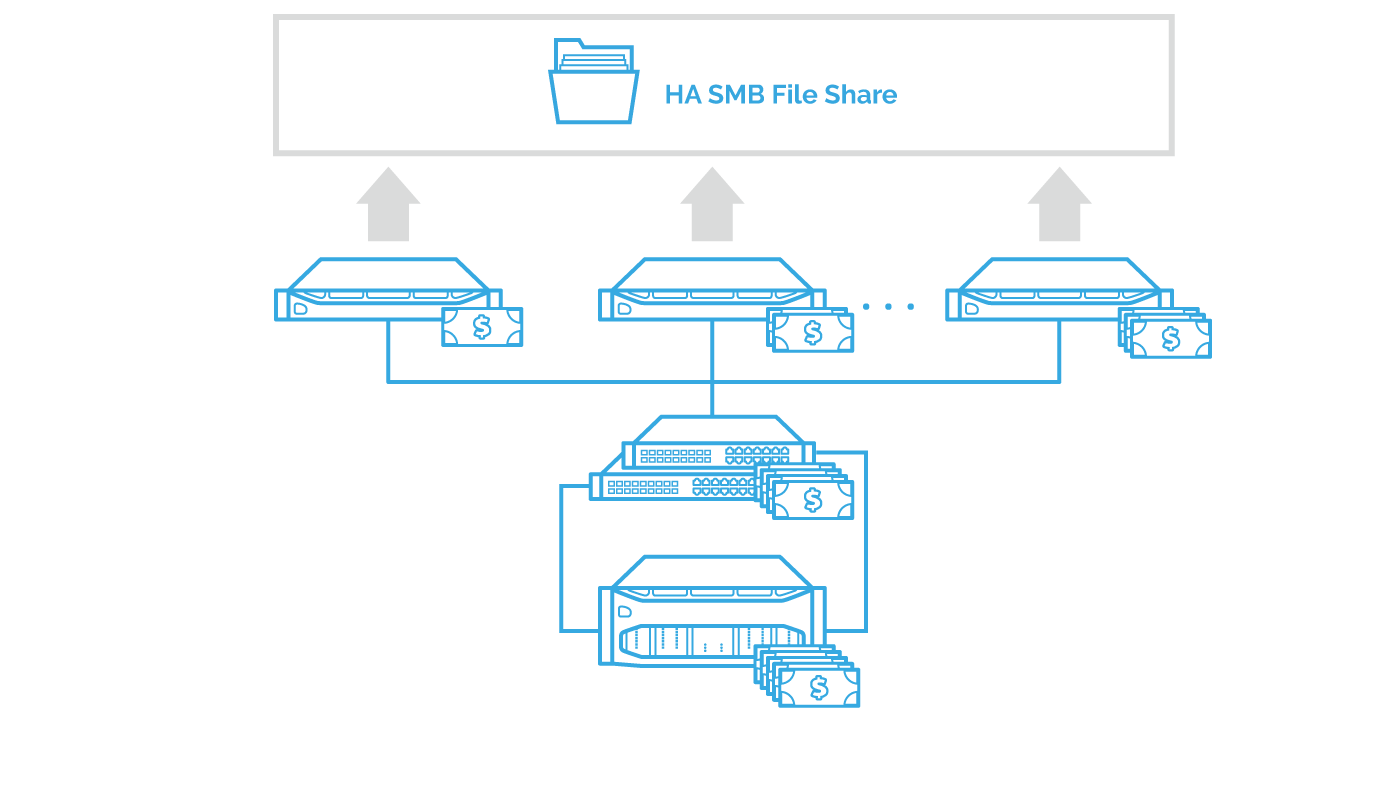

The first issue is the solution’s complexity and cost. SOFS requires a SAN (iSCSI/FC) or DAS. With Windows Server 2016 and Storage Spaces Direct, local storage can be used instead, which solves the complexity issue. Yet it doesn’t help with CAPEX, as Storage Spaces Direct is only available with the expensive Datacenter OS licensing.

The second issue, alongside the high CAPEX, is low ROI. With Storage Spaces Direct (S2D), this is due to an expensive OS license being used for a simple role. Dedicated storage with a decent level of redundancy and performance, on the other hand, doesn’t improve ROI if it’s only used for file services. Finally, there aren’t many storage solutions that provide a unified platform together with an essential set of data services such as caching, snapshots, QoS, and data optimization.

SOLUTION

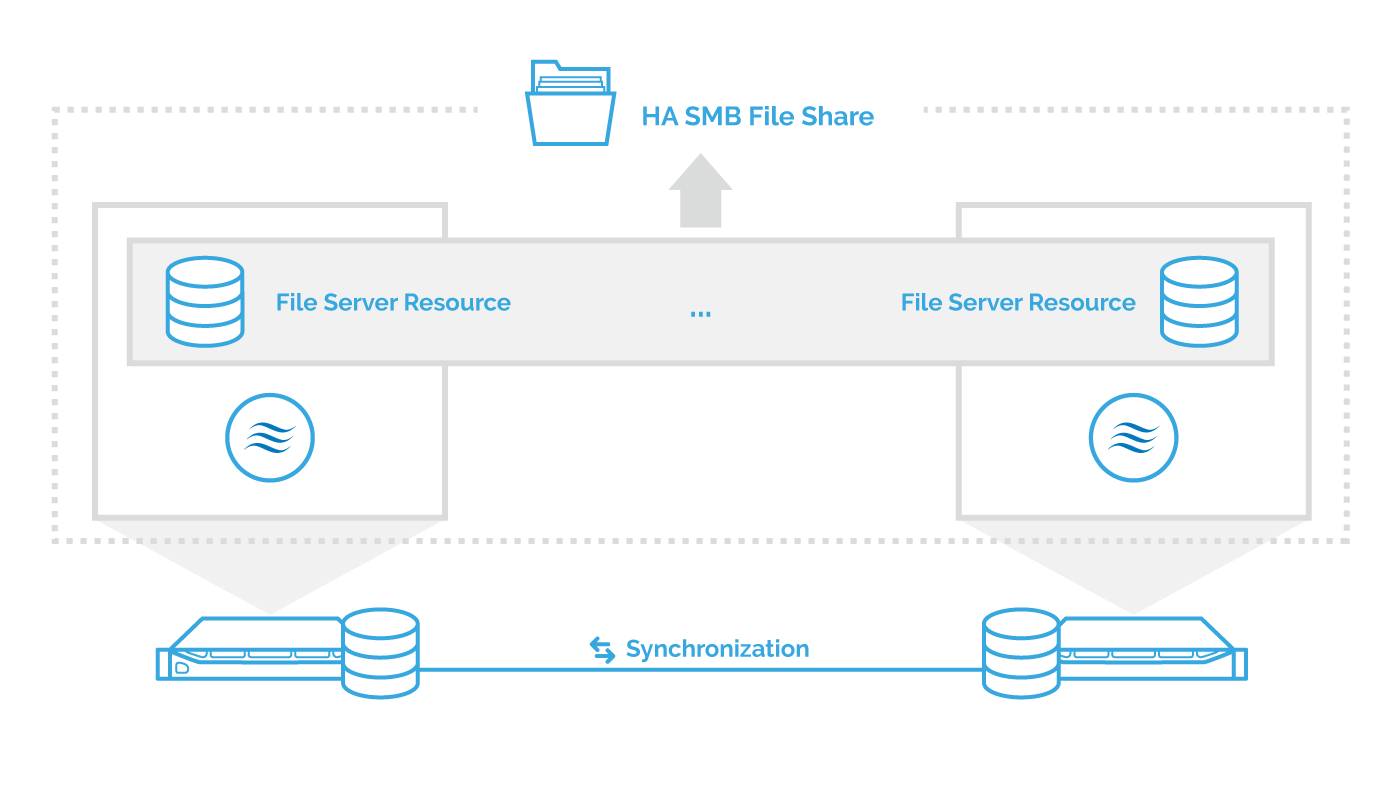

StarWind VSAN enhances SMB services and brings the implementation of clustered file servers to a qualitatively new level. First, StarWind addresses the CAPEX and complexity issues by maximizing hardware use. It implements a shared-nothing architecture, so all you need to provide SMB access is a pair of commodity servers: the hardware footprint can be as low as 2 nodes. This decreases CAPEX and OPEX and improves the ROI of the solution. The multilevel redundancy and high-performance architecture of StarWind VSAN also contribute to this, as it is built on current storage industry technologies such as the RDMA protocol suite and DRAM/Flash caching.

Apart from this, StarWind helps organizations save budget by lowering the OS license requirement (there is no need for the Datacenter edition). Finally, customer lifetime value increases, since StarWind provides data services such as write caching, snapshots, QoS, and data optimization.

CONCLUSION

StarWind leverages SMB3 to provide high performance and high availability for mission-critical applications. What you get is a scalable and continuously available storage platform that improves ROI and customer lifetime value (CLV) by maximizing hardware utilization and providing richer data services than those available with the stock SOFS implementation.