VMware ESXi has not changed much over the last several years. The latest release, VMware ESXi 8.0, brings some new features and UI changes that we’ll discuss in this post.

The ESXi installer has been around for over a decade. Over the years, however, VMware has improved access to the hypervisor.

First, we had the old, fat Windows client that everybody got hooked on. Then came the nicer ESXi host client, previously a Fling, which was productized and integrated into ESXi itself.

Now that ESXi 8.0 has been released, let’s have a look at what it looks like and what has changed. We’ll go through the installation and configuration to see whether anything is different. Then we’ll have a look at some cool new themes that are now bundled with the ESXi 8.0 host client.

Better support for home lab hardware

Are you planning to run ESXi 8.0 on (unsupported) home lab hardware? The good news is that since ESXi 7.0 U3f, VMware has integrated the Intel i219 device driver from the Community Networking Driver Fling into the default ESXi ISO, and the ESXi 8.0 release includes this driver too. This greatly simplifies the installation. The following PCIe-based network devices should work: i219, i225 and i226.

This is very good news, as you no longer need to install the VMware Fling vSphere Installation Bundle (VIB) or create a custom ESXi image. The network devices will be detected automatically and be ready for use. All the devices listed for the Community Networking Driver for ESXi Fling should be integrated into ESXi 8.0.

Which devices are deprecated and unsupported in ESXi 8.0?

A number of devices and drivers will not make it into ESXi 8.0. As with every major vSphere release, some hardware (CPUs, PCIe devices and so on) drops off the supported list. So, if you force an upgrade on those hosts without checking first, you might lose access to storage or networks.

You might be using some Mellanox or Emulex HBA hardware that you will want to check for support first.

VMware KB article KB88172 lists the outdated hardware that won’t be supported in ESXi 8.0.

So far, the list includes:

- All devices previously supported by the nmlx4_en driver. The driver does not exist in ESXi 8.0.

- A portion of devices previously supported by the lpfc driver. These devices have been removed from the driver.
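The two entries above can be turned into a quick pre-upgrade check. The following is a minimal sketch, assuming you have already collected a device-to-driver mapping for each host by whatever means you prefer. The inventory format, the function names and the status labels are hypothetical and only for illustration. KB88172 remains the authoritative list.

```python
from typing import Dict

# Drivers called out in this post. KB88172 is the authoritative source;
# these two sets only mirror the two bullet points above.
REMOVED_DRIVERS = {"nmlx4_en"}          # driver does not exist in ESXi 8.0
PARTIALLY_AFFECTED_DRIVERS = {"lpfc"}   # some devices removed from the driver


def classify_driver(driver: str) -> str:
    """Return a coarse upgrade status for a single driver name."""
    name = driver.strip().lower()
    if name in REMOVED_DRIVERS:
        return "unsupported"
    if name in PARTIALLY_AFFECTED_DRIVERS:
        return "check-kb"
    return "not-flagged"


def review_inventory(inventory: Dict[str, str]) -> Dict[str, str]:
    """Map each device in a (hypothetical) device -> driver inventory to a status."""
    return {device: classify_driver(driver) for device, driver in inventory.items()}


if __name__ == "__main__":
    # Hypothetical sample data, not taken from a real host.
    sample = {"vmnic0": "nmlx4_en", "vmhba2": "lpfc", "vmnic1": "example_driver"}
    for device, status in review_inventory(sample).items():
        print(f"{device}: {status}")
```

A "not-flagged" result only means the driver is not one of the two mentioned here. It is not a statement of support, so the KB and the compatibility guide still need to be checked.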

Visible changes during and after Installation of ESXi 8.0

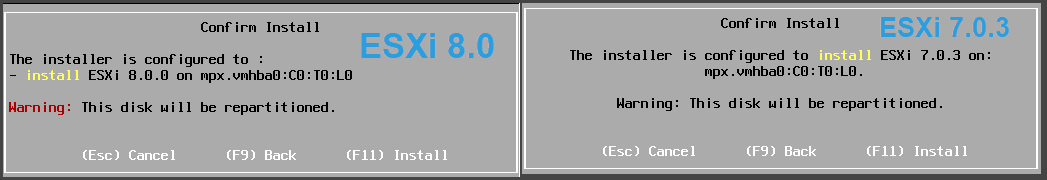

So far, there is not much. We have a new red warning just before the disk repartitioning. We’ll see more changes after installation, within the UI, where we’ll have new and redesigned themes with great look and feel.

ESXi 8.0 Installation

Can I still use a USB stick to install ESXi 8.0?

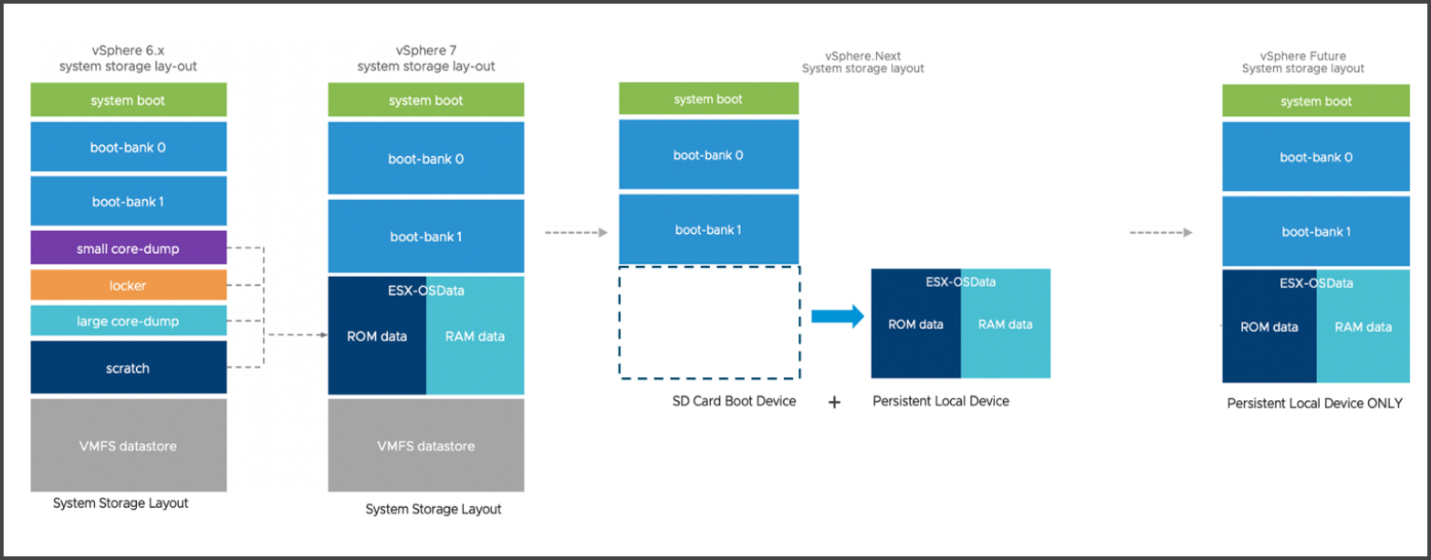

Yes, but VMware does not recommend it. vSphere 7 changed the boot partition and introduced a new partition layout on the boot device, so the layout differs across vSphere releases.

VMware still supports USB/SD cards, but for any new hardware procurement, VMware strongly recommends high-quality media, preferably a SATA/SAS/NVMe SSD that meets the VMware-specified endurance requirements, or an HDD.

PCIe NVMe, SAS, SATA/SATADOM SSD, Industrial grade flash/SSDs, SLC or pSLC.

Endurance requirements:

128 TBW (over 5 years)
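To put that endurance figure into perspective, it helps to convert it into an average daily write volume. The snippet below is a small worked calculation, assuming decimal units (1 TB = 1000 GB) and 365-day years. The function name is made up for this example.

```python
def average_daily_writes_gb(tbw: float, years: float) -> float:
    """Average writes per day, in GB, that would use up `tbw` terabytes over `years`."""
    days = years * 365           # leap days ignored for simplicity
    return tbw * 1000 / days     # decimal units: 1 TB = 1000 GB


if __name__ == "__main__":
    # 128 TBW spread evenly over 5 years
    print(f"{average_daily_writes_gb(128, 5):.1f} GB/day")  # prints "70.1 GB/day"
```

In other words, the requirement corresponds to a device that can sustain roughly 70 GB of writes every day for five years, which is far beyond what a typical consumer USB stick is built for.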

From my own experience, I would simply create a RAID 1 with two SATA/SAS SSDs (they’re quite cheap these days) and use it as the volume for the ESXi install. You don’t need a lot of capacity, as it will hold only the ESXi boot files.

If anything goes wrong with one SSD, the other one in the mirror will still be there. Hardware-level monitoring for SSD failures should be set up during the deployment phase.

VMware ESXi and transition of boot partition layouts used on the boot devices across the different vSphere releases

VMware has stopped certifying flash devices as boot devices, and will stop listing them as approved devices starting in vSphere. This applies to home labs too. You certainly don’t want to waste your money on USB sticks if they are not going to last under the heavy reads and writes that ESXi generates, right?

A few interesting FAQs from VMware KB85685

Based on the boot device used, could an install or upgrade to 7.x or vSphere.Next be blocked?

VMware will not block ESXi installation or upgrade. It will, however, try to relocate critical regions of the boot device into persistent storage.

How is the VMTools location stored and controlled?

VMTools region is a part of OSData and will be moved to any place where OSData is stored. However, it can be controlled by the ProductLocker advanced setting. At install/upgrade time, it is in the boot device VMFS-L partition (either the LOCKER partition on USB/SDCard, or the OSDATA partition if the OSDATA is installed to non-USB). When the system boots up, the system-storage jumpstart will check if it is in LOCKER on USB/SD device, and if there is a local OSDATA partition, it will be moved there. The customer can change the location anywhere else by setting the ProductLocker value. (Also see https://kb.vmware.com/s/article/2129825)
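The relocation logic described in that answer can be summarized as a small decision function. This is only a simplified model of the FAQ text, not VMware code: the function name, parameters and returned labels are assumptions made for illustration, and the override value stands in for whatever path is set in the ProductLocker advanced setting.

```python
from typing import Optional


def vmtools_location(boot_is_usb_or_sd: bool,
                     has_local_osdata: bool,
                     product_locker_override: Optional[str] = None) -> str:
    """Model where the VMTools region ends up after boot, per the FAQ answer above."""
    # An explicit ProductLocker value takes precedence over the defaults.
    if product_locker_override:
        return product_locker_override
    # Non-USB boot device: OSDATA lives on the boot device, and so do the tools.
    if not boot_is_usb_or_sd:
        return "OSDATA on boot device"
    # USB/SD boot device with a local OSDATA partition: tools are moved there.
    if has_local_osdata:
        return "OSDATA on local persistent storage"
    # USB/SD boot device and nowhere else to go: tools stay in LOCKER.
    return "LOCKER on USB/SD boot device"


if __name__ == "__main__":
    print(vmtools_location(boot_is_usb_or_sd=True, has_local_osdata=True))
    print(vmtools_location(boot_is_usb_or_sd=True, has_local_osdata=False))
    # Hypothetical datastore path used only as an example override value.
    print(vmtools_location(True, False, "/vmfs/volumes/example-datastore/tools"))
```

The last case is the one to avoid on a USB/SD boot device, since the tools stay on the low-endurance media.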

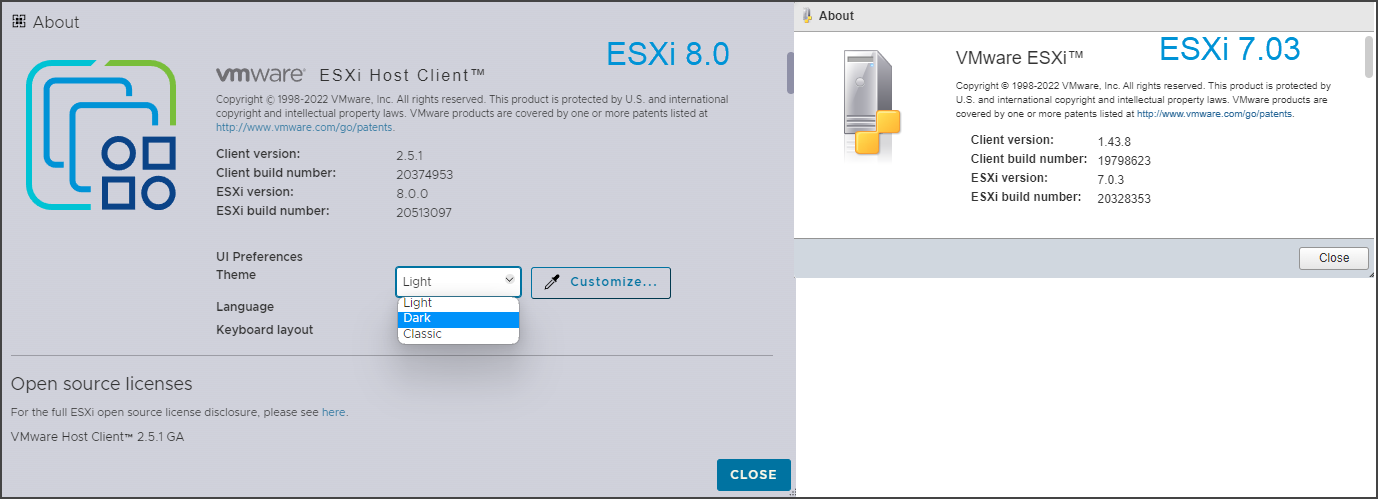

ESXi 8.0 vs 7.0.3 Host Client version comparison

As you can see, you can now change the theme. There are 3 themes bundled:

- Light (light blue with dark blue)

- Dark (darkest one)

- Classic (the brightest one, white and blue colors)

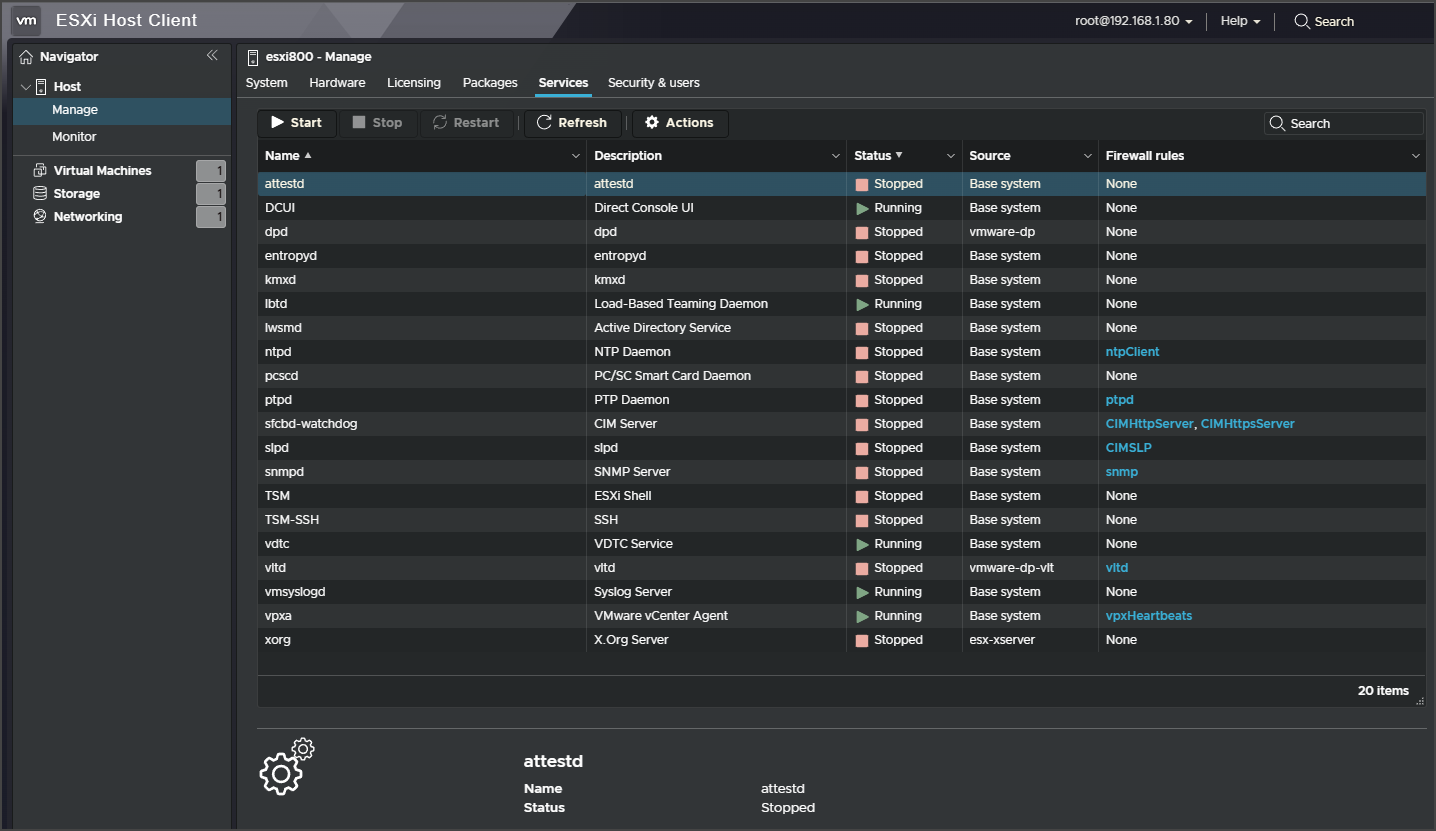

The host client looks pretty slick with the dark theme. Here is a view of the host client with the dark theme active.

ESXi 8.0 host client New Theme

vCenter Server’s themes did not evolve in this release, and we find the same set of themes as before. There are only the default and dark themes for the vSphere web client.

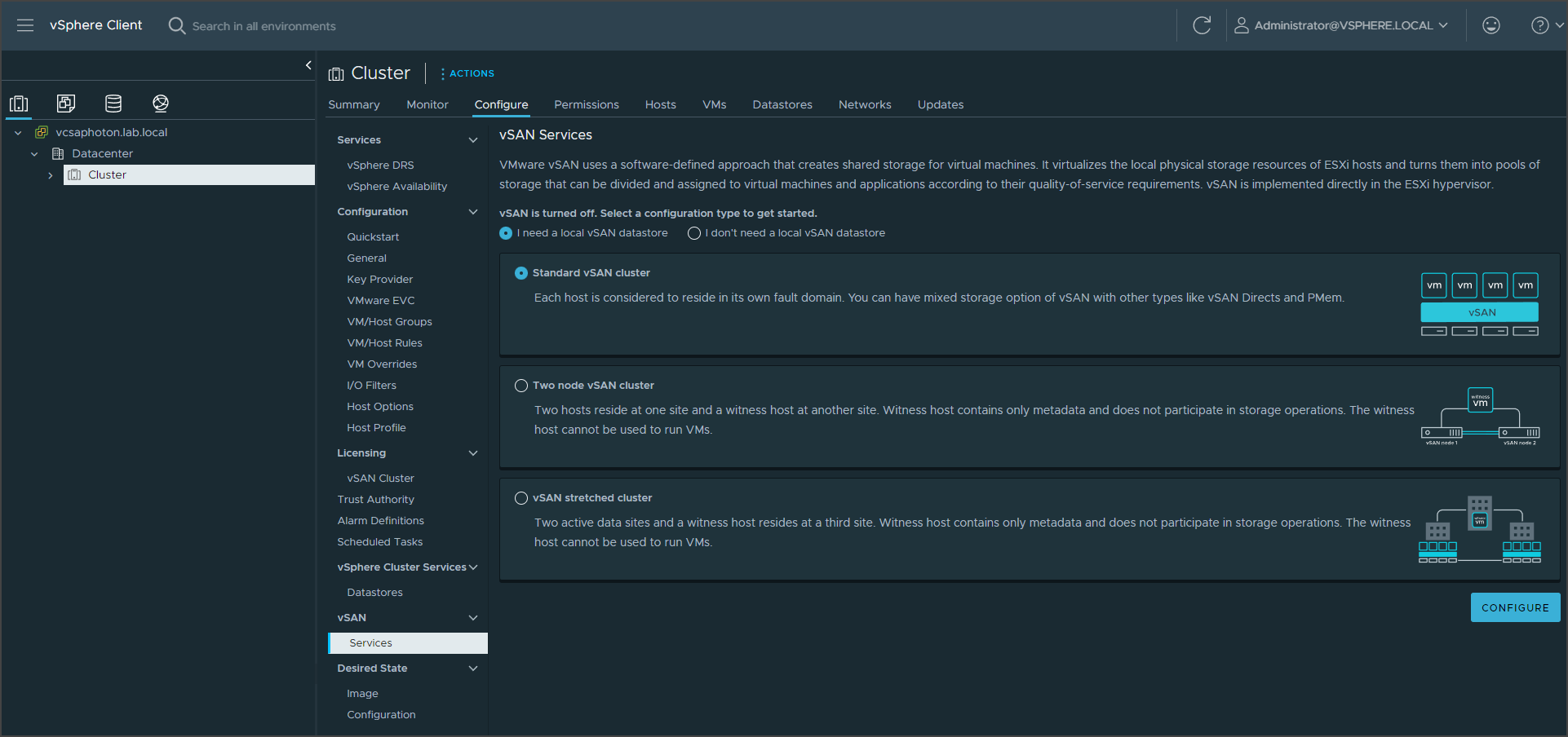

vCenter Server has brought some new features, but we’ll go into more detail in another post. What I can show here is the new vSAN configuration screen, where you can choose from 3 different options at the beginning of the vSAN configuration.

vSphere 8.0 vSAN services screen

Final Words

VMware ESXi 8.0, and vSphere 8.0 in general, is a major VMware release that everybody was waiting for. The release was expected even before VMware Explore Europe, because VMware announced the end of support for some older versions of vSphere a long time ago.

The End of General Support for vSphere 6.5 and vSphere 6.7 is October 15, 2022. To maintain your full level of Support and Subscription Services, VMware recommends upgrading to vSphere 7. The End of General Support for vSphere 7 will be on April 2, 2025. So, vSphere 8.0 was released just a couple of days before the end of support for the 6.7 release. This will force a lot of clients to finally migrate to vSphere 7.0.