DISCLAIMER: The only supported way to use VMDKs as shared disks for clustered Windows VMs in a vSphere environment is to store the VMDKs in a Clustered VMDK-enabled datastore, as described in Clustered VMDK support for WSFC or in the “Storage Configuration” section of this article.

Introduction

If you’re a VMware vSphere admin in a large enterprise, sooner or later you’re going to have to configure VM clusters. The need can arise for various reasons, including using Oracle Real Application Clusters (RAC) for clustering and high availability in database environments, or building continuously available systems with the VMware vSphere Fault Tolerance (FT) feature.

In cluster solutions, the VM disks must be in multi-writer mode so that a VMDK file can be accessed simultaneously from several ESXi hosts (it also works for writes from several VMs on the same host). VMware supports the multi-writer option only for third-party cluster-aware applications and for Fault Tolerance, which uses vLockstep technology to establish and maintain simultaneous access to the disk from both ESXi hosts. Let’s take a look at the primary aspects of the multi-writer option.

First of all, we need to clarify that right now VMware officially supports Oracle RAC, Veritas InfoScale, Microsoft Windows Server Failover Clustering (WSFC, earlier known as Microsoft Cluster Service, MSCS), and some other clusters (for example, Red Hat).

All you need to know about the Oracle clusters is in these three articles. If you’re interested in VMFS support, look it up here; the information on Veritas InfoScale support is there.

What Do You Need to Know?

The details depend on your ESXi hypervisor version, but VMware generally provides all the documentation required for configuring MSCS/WSFC clusters:

| ESX Release | MSCS Supported? | Documentation |
| --- | --- | --- |
| vSphere 5.5 | | Setup for Failover Clustering and Microsoft Cluster Service (PDF) |
| vSphere 5.5 Update 2 | | Setup for Failover Clustering and Microsoft Cluster Service (PDF) |
| vSphere 5.5 Update 3 | | Setup for Failover Clustering and Microsoft Cluster Service (PDF) |
| vSphere 6.0 | | Setup for Failover Clustering and Microsoft Cluster Service (PDF) |
| vSphere 6.0 Update 1 | | Setup for Failover Clustering and Microsoft Cluster Service (PDF) |
| vSphere 6.0 Update 2 | | Setup for Failover Clustering and Microsoft Cluster Service (PDF) |
| vSphere 6.5 Update 1 | | Setup for Failover Clustering and Microsoft Cluster Service (PDF) |
| vSphere 6.7 | | Setup for Failover Clustering and Microsoft Cluster Service (PDF) |
| vSphere 7.0 | | Setup for Windows Server Failover Clustering (PDF) |

Additionally, check out these materials.

Of course, using virtual disks in multi-writer mode within the VMware vSphere environment comes with a number of limitations:

- You need to know that the system and apps meant to work with such disks must support simultaneous access from several hosts. Either that or your data is under a constant threat of damage.

- By default, virtual disk sharing in multi-writer mode does not extend to more than 8 hosts, and the limit can be lower depending on the technology you use. For example, a WSFC cluster supports no more than 5 hosts. From vSphere 6.7 Update 1 onwards, multi-writer sharing can be extended beyond 8 hosts, up to 64, but this requires additional configuration (more info here).

- By default, the VMFS file system prevents more than one virtual machine from inadvertently opening the same VMDK disk. Multi-writer mode lifts this protection for the disk, so remember that a misconfiguration can cause data loss.

- When using multi-writer mode, the virtual disk must be eager zeroed thick; it cannot be lazy zeroed thick or thin provisioned. However, if you’re using vSAN, then from vSAN 6.7 Patch 01 onwards you can use thin disks (see the article here).

- Virtual disks with the multi-writer flag cannot be attached to a virtual NVMe controller.

- Hot adding a virtual disk removes the multi-writer flag.
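The static limitations above can be turned into a quick pre-flight check. The following is only a minimal sketch: the `SharedDisk` model, its field names, and the `multi_writer_problems` helper are hypothetical and exist purely for illustration; they are not part of any VMware API. The default limit of 8 hosts is the one quoted in the list.

```python
from dataclasses import dataclass
from typing import List

DEFAULT_MAX_HOSTS = 8  # default multi-writer host limit quoted in the list above


@dataclass
class SharedDisk:
    """Hypothetical description of a disk you plan to share (illustrative only)."""
    provisioning: str      # "eager_zeroed_thick", "lazy_zeroed_thick" or "thin"
    controller_type: str   # "scsi" or "nvme"
    host_count: int        # number of ESXi hosts that will open the disk
    on_vsan: bool = False  # assumption: True means vSAN 6.7 Patch 01 or later


def multi_writer_problems(disk: SharedDisk,
                          max_hosts: int = DEFAULT_MAX_HOSTS) -> List[str]:
    """Return the limitations from the list that this disk would violate."""
    problems = []
    # Thin disks are acceptable only on a recent vSAN, per the list above.
    thin_allowed = disk.on_vsan and disk.provisioning == "thin"
    if disk.provisioning != "eager_zeroed_thick" and not thin_allowed:
        problems.append("disk must be eager zeroed thick")
    if disk.controller_type == "nvme":
        problems.append("multi-writer disks cannot use a virtual NVMe controller")
    if disk.host_count > max_hosts:
        problems.append(
            f"{disk.host_count} hosts exceeds the limit of {max_hosts}")
    return problems
```

For example, `multi_writer_problems(SharedDisk("thin", "nvme", 9))` reports three problems, while an eager zeroed thick disk on a SCSI controller shared by two hosts returns an empty list. Pass a lower `max_hosts` (such as 5 for WSFC) when your clustering technology requires it.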

Starting with vSphere 7.0 Update 1, NVMe over Fibre Channel and NVMe over RDMA (RoCE v2) datastores support the multi-writer option, which gives in-guest systems that use cluster-aware file systems distributed write capability. If you’re looking for more information, look it up here.

Well, for now, let’s find out what VMware vSphere platform features are supported for VMs with virtual disks in multi-writer mode:

| Actions or Features | Supported | Unsupported | Note |
| --- | --- | --- | --- |
| Power on, off, restart virtual machine | | | |
| Suspend VM | | | |
| Hot add virtual disks | | | Only to existing adapters |
| Hot remove devices | | | |
| Hot extend virtual disk | | | |
| Connect and disconnect devices | | | |
| Snapshots | | | Virtual backup solutions leverage snapshots through the vStorage APIs; for example, VMware Data Recovery, vSphere Data Protection. These are also not supported. |
| Snapshots of VMs with independent-persistent disks | | | Supported in vSphere 5.1 Update 2 and later versions |
| Cloning | | | |
| Storage vMotion | | | Neither shared nor non-shared disks can be migrated using Storage vMotion due to the virtual machine stun required to initiate the storage migration. |
| Changed Block Tracking (CBT) | | | |
| vSphere Flash Read Cache (vFRC) | | | Stale writes can lead to data loss and/or corruption |
| vMotion | | | Supported for ORAC only and limited to 8 ESX/ESXi hosts |

By the way, when you turn on VMware Fault Tolerance for a VM, multi-writer mode is enabled for its VMDK disks automatically.

Time to Work!

So, let’s move on to configuring the Multi-writer mode:

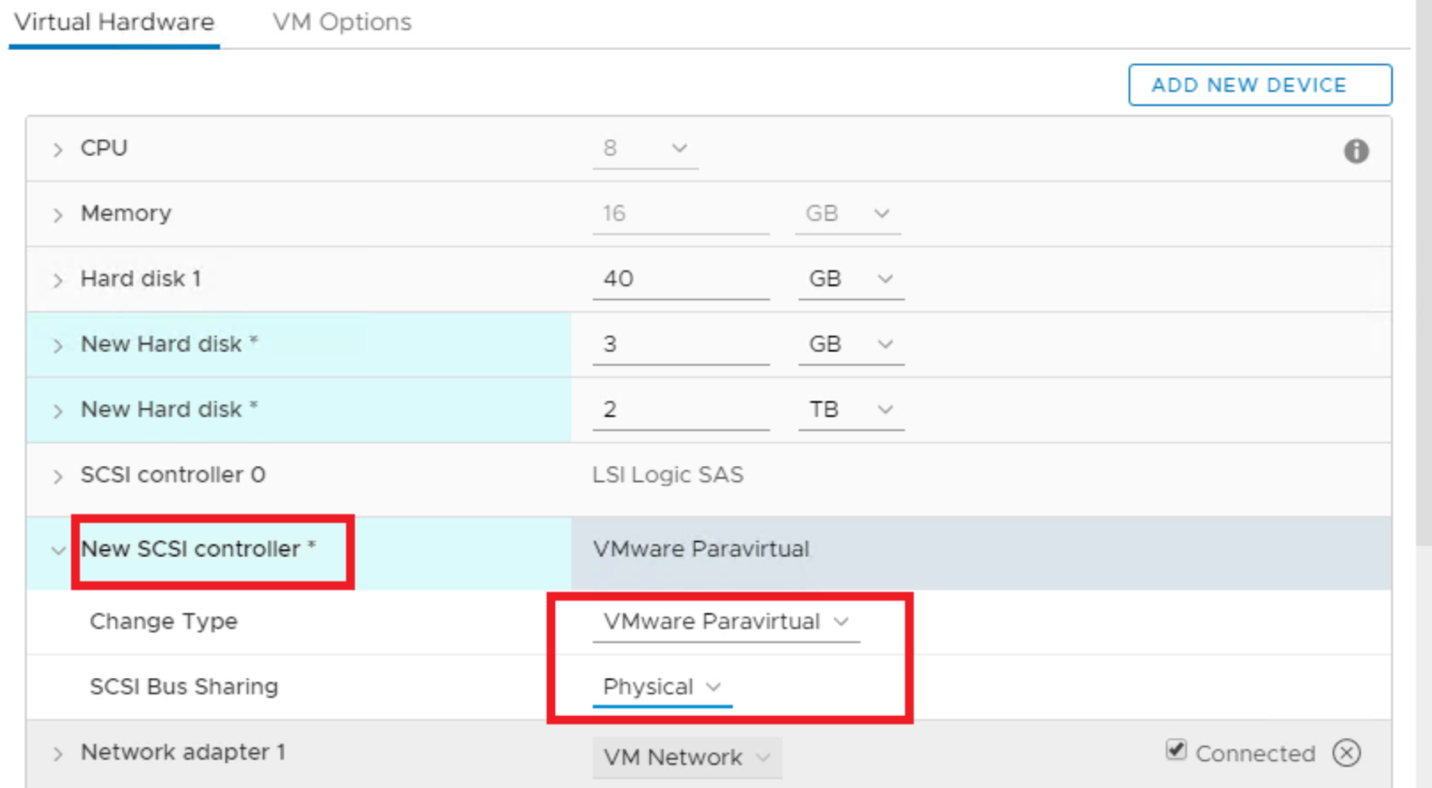

1. In the VM settings, set the SCSI Bus Sharing mode of your SCSI controller to Virtual or Physical:

- Physical – access for the machines from different ESXi hosts

- Virtual – access for the machines from this particular ESXi host exclusively

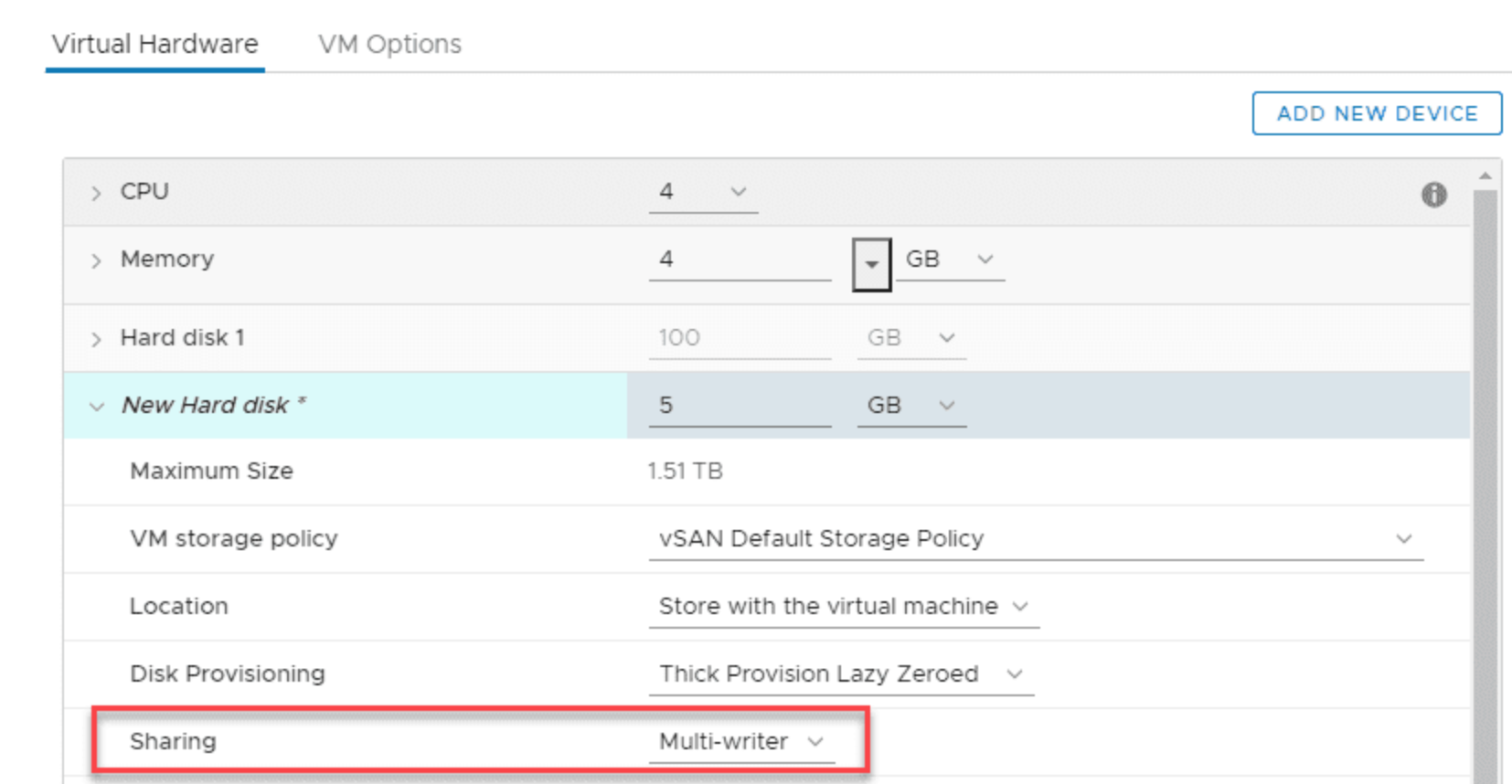

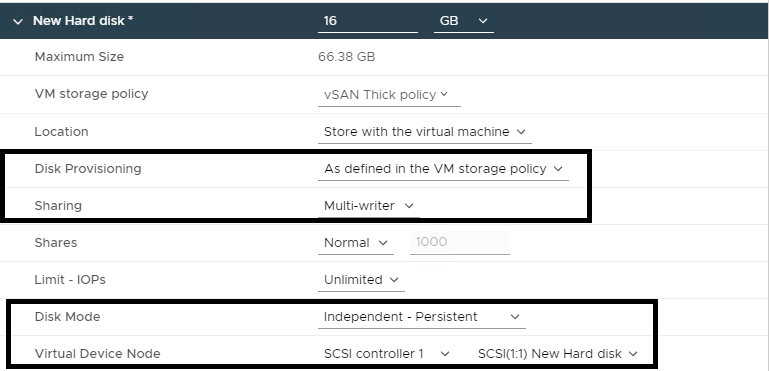
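The choice between the two bus sharing modes in step 1 follows directly from where the cluster VMs run. Here is a minimal sketch of that rule; the `bus_sharing_mode` helper and the VM and host names are hypothetical, used only to illustrate the decision.

```python
from typing import Dict


def bus_sharing_mode(vm_to_host: Dict[str, str]) -> str:
    """Pick the SCSI bus sharing mode from a VM name -> ESXi host name mapping.

    "virtual"  : all VMs that share the disk run on the same ESXi host
    "physical" : the VMs are spread across different ESXi hosts
    """
    hosts = set(vm_to_host.values())
    if not hosts:
        raise ValueError("at least one VM is required")
    return "virtual" if len(hosts) == 1 else "physical"


# Hypothetical two-node cluster placed on two different hosts.
mode = bus_sharing_mode({"node1": "esxi-01", "node2": "esxi-02"})
print(mode)  # physical
```

If the VMs can later be moved to different hosts, it is safer to plan for Physical from the start.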

2. Next, in the VM settings, set the Sharing option of the disk to Multi-writer:

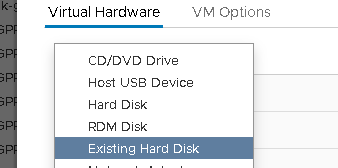

3. For the second VM, on the secondary ESXi host, configure or create a SCSI controller in the same fashion, then add the shared disk by selecting Existing Hard Disk.

You’ll also need to set the multi-writer option for this disk.

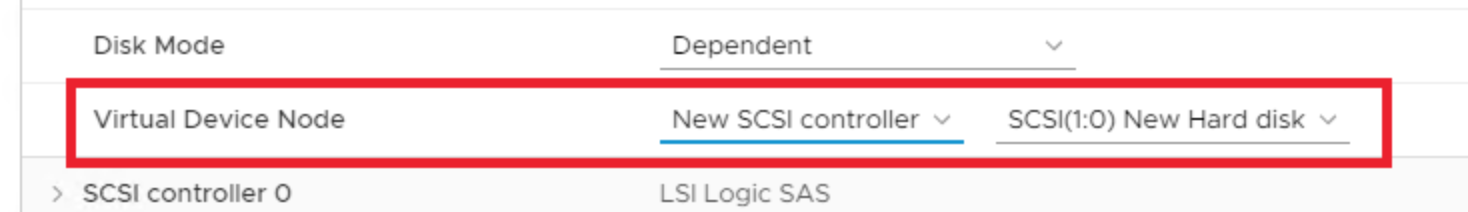

Important: the SCSI device address must be the same on both machines! That means that if you’ve picked “SCSI controller 1” for the first VM and SCSI(1:0) for its disk, the second machine must have the same configuration.
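The address rule is easy to verify before powering anything on. The sketch below compares the address of the shared disk across cluster nodes; the `ScsiAddress` type and the `nodes_with_mismatched_address` helper are hypothetical, and the addresses would have to be collected from your own inventory.

```python
from typing import Dict, List, NamedTuple


class ScsiAddress(NamedTuple):
    controller: int  # SCSI controller number, e.g. 1 for "SCSI controller 1"
    unit: int        # unit number on that controller, e.g. 0 in SCSI(1:0)

    def __str__(self) -> str:
        return f"SCSI({self.controller}:{self.unit})"


def nodes_with_mismatched_address(
        shared_disk: Dict[str, ScsiAddress]) -> List[str]:
    """Return the VMs whose shared-disk address differs from the first VM's."""
    if not shared_disk:
        return []
    names = list(shared_disk)
    reference = shared_disk[names[0]]
    return [name for name in names[1:] if shared_disk[name] != reference]


# Hypothetical two-node cluster where the second node was attached incorrectly.
cluster = {"node1": ScsiAddress(1, 0), "node2": ScsiAddress(1, 1)}
for name in nodes_with_mismatched_address(cluster):
    print(f"{name}: expected {cluster['node1']}, found {cluster[name]}")
```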

Remember that the multi-writer option is available in the graphical interface only from vSphere 6.0 Update 1 onwards. If you have an earlier version of the vSphere platform, you have to add the following line to the .vmx file or to the VM advanced settings (VM properties: Options > General > Configuration Parameters):

```
SCSI1:0.sharing = "multi-writer"
```
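If you have several shared disks, typing these lines by hand invites typos. As a rough sketch, the entry can be generated from the controller and unit numbers. The `sharing_entry` helper is hypothetical; it simply reproduces the format of the line above and rejects unit 7, which is reserved for the SCSI controller itself.

```python
def sharing_entry(controller: int, unit: int) -> str:
    """Build the multi-writer configuration line for the disk at SCSI(controller:unit)."""
    if controller < 0 or unit < 0:
        raise ValueError("controller and unit numbers must not be negative")
    if unit == 7:
        # Unit 7 on a SCSI bus belongs to the controller, so no disk can use it.
        raise ValueError("unit 7 is reserved for the SCSI controller")
    return f'SCSI{controller}:{unit}.sharing = "multi-writer"'


# Two hypothetical shared disks on SCSI controller 1.
for unit in (0, 1):
    print(sharing_entry(1, unit))
```

The first printed line matches the entry shown above for the disk at SCSI(1:0).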

4. Finally, bring up the disk in the guest OS of both VMs and set its access mode according to the requirements of your cluster software.

I have to say that, efficient as it is, the multi-writer option is supported only by clustering solutions that know how to work with such a resource, and it is useful only for them. Some users may assume that this is a way to set up some kind of general-purpose shared storage, but I hate to disappoint you: without cluster-aware software, the data is refreshed only when you bring the disk offline and then back online again. Until then, neither machine sees the changes made by the other.

Now, let’s get back to the limitations table. As you saw earlier, snapshots are not supported, which means that traditional snapshot-based backup is useless in this case. If you keep the default configuration, the backup job will simply end with an error (the exact error depends entirely on your backup software).

However, some backup solutions, for example Veeam Backup and Replication, can back up VMs with Fault Tolerance enabled (please see KB 1178).

Luckily, there’s a very simple trick to keep backing the machine up while excluding multi-writer disks from the process. You just need to change their mode from Dependent (disks that take part in snapshots) to Independent Persistent (no snapshots are taken of these disks, and changes are written to them immediately and permanently). You can change this in the VM settings.
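To see what this trick means for a backup job, here is a small sketch that splits the disks of a VM into those a snapshot would capture and those it would skip. The `split_by_snapshot` helper and the disk names are hypothetical; the mode strings follow the disk mode names used by the vSphere API (`persistent` for dependent disks, `independent_persistent`, `independent_nonpersistent`).

```python
from typing import Dict, List, Tuple

# Independent disks are left out of VM snapshots.
SNAPSHOT_EXCLUDED_MODES = {"independent_persistent", "independent_nonpersistent"}


def split_by_snapshot(disk_modes: Dict[str, str]) -> Tuple[List[str], List[str]]:
    """Split a disk name -> disk mode mapping into (captured, skipped) lists."""
    captured = [name for name, mode in disk_modes.items()
                if mode not in SNAPSHOT_EXCLUDED_MODES]
    skipped = [name for name, mode in disk_modes.items()
               if mode in SNAPSHOT_EXCLUDED_MODES]
    return captured, skipped


# Hypothetical cluster node: one OS disk plus one shared multi-writer disk.
captured, skipped = split_by_snapshot({
    "Hard disk 1": "persistent",              # OS disk, still backed up
    "Hard disk 2": "independent_persistent",  # shared disk, excluded
})
print("captured by snapshot:", captured)
print("needs another backup method:", skipped)
```

Anything that ends up in the second list has to be protected some other way.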

You may also wonder how you would back up multi-writer disks in Independent operations mode. In that case, use the backup solutions made specifically for the guest OS level (Veeam Agent for Windows, for example) or solutions specifically designed for particular applications (Veeam Plug-in for Oracle).

Conclusions

To sum up, I have to say one important thing. When using the multi-writer option, make sure you have carefully read all the relevant technical documentation from VMware and from your clustering software vendor. There is always a risk of data loss from a wrong configuration or a backup error, which is one more reason to think your backup plan through thoroughly. I hope this information was useful, and good luck!