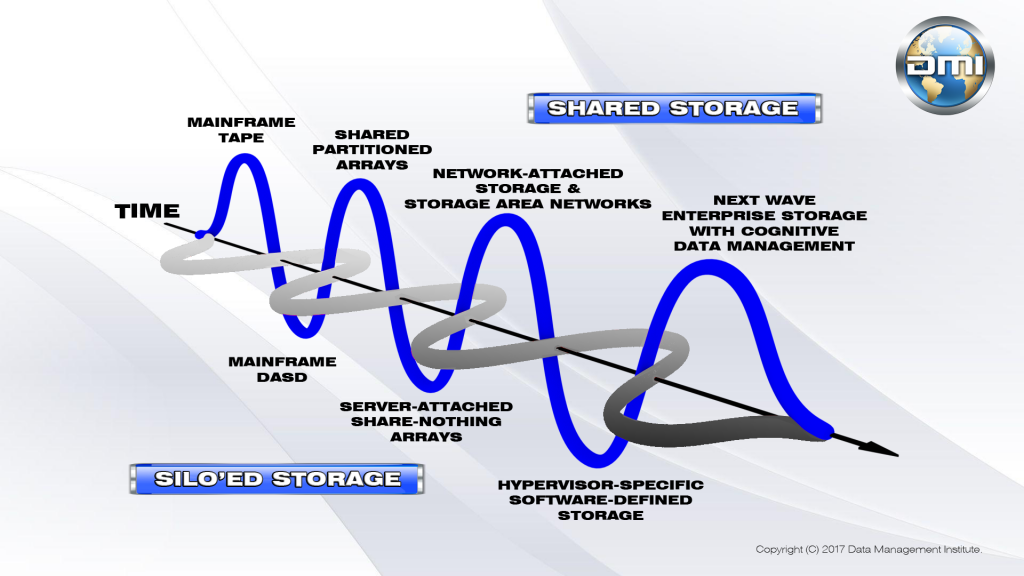

An under-reported trend in storage these days is the mounting dissatisfaction with server-centric storage infrastructure as conceived by proprietary server hypervisor vendors and implemented as exclusive software-defined storage stacks. A few years ago, the hypervisor vendors seized on consumer anger around overpriced “value-add” storage arrays to insert a “new” modality of storage, so-called software-defined storage (SDS), into the IT lexicon. Touted as a solution for everything that ailed storage – and as a way to improve virtual machine performance in the process – SDS and hyper-converged infrastructure did rather well in the market. However, the downside of creating siloed storage behind server hosts was that storage efficiency declined by 10 percent or more on an enterprise-wide basis; companies were realizing less bang for the buck with software-defined storage than with the enterprise storage platforms they were replacing.

So, on many fronts, storage companies are endeavoring to make a comeback with platforms that feature centralized management across far-flung installations. In this zettabyte era, storage infrastructure will likely be spread over client devices, branch offices, corporate data centers and multiple clouds. The pieces of this infrastructure will need to interact transparently and in a user-friendly way. Data will need to move effortlessly across many formats, from traditional file systems to the proprietary object storage models embraced by gear peddlers and cloudies. And storage gear will need to behave itself and adhere to at least minimal standards of interoperability and common status monitoring and management.

There will be no single storage component in this infrastructure and no storage media type can afford to be excluded. Tiering will be essential to cost containment, with data placed in an optimal way on the storage kit that supports its access and update performance requirements. Storage services will need to be provided to data and storage containers per policy and in a manner that supports regulatory and legal mandates. And overall costs for storage will need to guide the choices of which cloud to use for storing archival and backup data.

Against this backdrop, many observers of the storage market are scratching their heads as they try to reconcile the coming renaissance in enterprise storage with the apparent interest gap that storage currently confronts. If you are a consumer, you might have read about a storage vendor that recently went belly-up, or about the many others that are seeing steep revenue declines this year. Overall, the industry is down about $1.6 billion in sales revenue in 2017.

If you are a storage insider, you might have heard that storage start-ups – even the software-defined storage vendors – are having a hard time finding venture capital investments, even if they are mildly profitable and are pursuing C or D rounds. Whether you are chatting at a trade show booth or discussing the situation over a nice Ukrainian vodka, the lack of investment in the next wave of storage is on everyone’s mind.

I have been asking folks who claim to be “in the know” what is going on with storage, and here are some of the explanations they have advanced.

- Storage is no longer relevant. It is now just a part of the server. This is an interesting idea advanced by many up-and-coming storage geeks. In most cases, it is a self-serving comment from those who seek to substitute Flash memory for disk drives, optical disc, and tape to create the all-silicon data center. They are conflating Flash memory with DRAM to reduce the components of the classical von Neumann computer from three to just two. (Von Neumann saw the computer as a combination of a CPU and memory scratchpad, an I/O bus, and some sort of storage kit. If the storage element is absorbed into the memory scratchpad, so that flash or DRAM becomes the storage of the machine and eliminates the other storage kit, then the computer as defined by von Neumann is kaput.)

This is interesting stuff, but I kind of doubt that most firms see the world in this way. Storage remains relevant today, even if the next explanation, that clouds are getting all the data, is valid.

- Storage sales are down because clouds are getting all the data. I hear this one a lot. Companies aren’t buying as much storage because they lease capacity from a cloud instead. Certainly, we have seen a shift to cloud-based storage for certain kinds of data, such as backups and archives. But there has been tremendous reluctance among larger shops to place their active data in a cloud – whether for reasons of access speed, relative security, or cost. Besides, this idea cannot be a valid explanation of the decline in storage spending, because cloud service providers use the same storage as enterprise data centers. If the latter are not buying as much storage and are instead storing data in the cloud, then the cloud data center needs to buy the storage that the enterprise guys used to buy. Clouds are not a new form of storage; they are just a service delivery model and an outsourced data center.

- Clouds use their storage more efficiently. There may be some truth to this statement. The industrial cloudies (AWS, Azure, Google, IBM, etc.) have deployed their own storage with some pretty cutting-edge innovations in some cases. These innovations include object models for unstructured data, the use of (older) technologies for erasure coding to eliminate drive mirroring and RAID schemes that consume a lot of capacity, and the application of a lot of deduplication and compression technologies to squeeze more data into the same amount of fixed space. However, with data growth rates in the tens – or hundreds – of zettabytes per year, even these space savers are unlikely to forestall burgeoning capacity requirements. Most clouds (and many large enterprises) are now adopting tape storage to augment capacity against shortfalls that are believed to be coming in disk and flash memory.

- The storage market isn’t offering anything that consumers feel like buying. Of all the explanations, this one makes the most sense. Storage industry sales revenues are down because consumers aren’t buying as much gear or media. True enough. What isn’t clear is why.

Until about 2005, consumers bought storage based largely on the brand. That was the only way to explain why EMC sold so much stuff year over year despite offering, arguably, overpriced and not very well engineered product. Their success echoed the old expression, “Nobody gets fired for buying IBM.” Everyone’s fortunes began to fall, however, when shared storage began to replace monolithic, proprietary arrays. Then, when the hype around SANs and NAS died down – due to their cost and complexity, and because of misinformation about the relationship between “legacy” shared storage and poor virtual machine performance – the hype around software-defined storage rose. SDS was the name of the game for the past couple of years, though many companies simply repurposed older servers and internal storage to “roll their own” storage infrastructure, depressing sales of new gear.

However, as indicated at the outset, it is becoming clear that SDS and hyper-converged aren’t necessarily delivering “enterprise class” storage infrastructure. Many customers seem to be waiting for the market to decide what the next big thing will be and are forestalling purchases. This seems like a pretty sensible description of the current situation.

So, what will it take to put the storage industry back on a paying basis? IBM has been enjoying year over year growth in their storage sales, but that may be a reflection of their extremely deep product bench. They have specialized gear for everything and they are among the last of the tenured vendor club, the three-letter acronyms.

What is needed are some new ideas. Symbolic IO has a few tricks they are pursuing, and DataCore Software still has a compelling storage virtualization play that they have de-emphasized recently to capitalize on the SDS craze. There are also some cool architectural ideas coming out of the developers at StarWind Software, including a de-constructionist approach to deploying a software-defined, array-based hybrid.

Will these and other developments be enough to awaken the investment community? It remains to be seen. Right now, investors seem more inclined to spend money on the next big computer game.