Amazon recently released Amazon Aurora, a MySQL-compatible relational database service, and is strongly encouraging customers to migrate from Oracle or Microsoft SQL Server to this new cloud platform. Amazon promises up to five times better performance than MySQL, with the security, availability, and reliability of a commercial database, at about 10% of what organizations are currently paying. PostgreSQL compatibility was also announced a short time ago and is available as a preview.

The Storage Approach for Databases

As any IT person knows, the storage design behind an enterprise-scale database platform is the backbone of the service it provides. Some of these platforms insert hundreds of millions of new rows within a few hours, so it is easy to understand why the storage layer needs to deliver high performance.

Cloud providers saw this as an opportunity: customers can avoid large investments and complex platforms by migrating these databases to the cloud. Instead of talking about SAN, DAS, Fibre Channel connections, and so many other variables, Amazon just wants you to think about AWS and now Amazon Aurora.

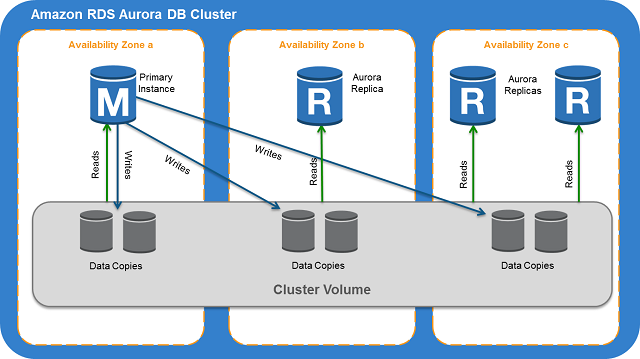

The Amazon Aurora storage engine is a distributed SAN that spans multiple AWS Availability Zones (AZs) in a region. Each AZ is isolated from the others, except for a low-latency link that allows rapid communication with the other AZs in the region. The distributed, low-latency storage engine at the center of Amazon Aurora relies on this combination of isolation and fast links between AZs.

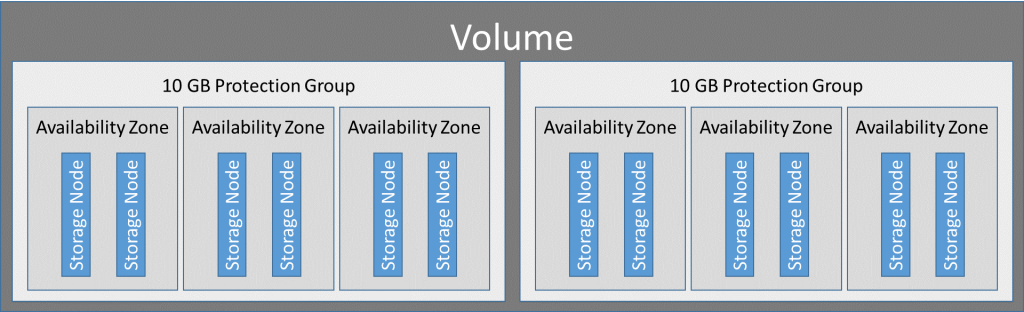

Amazon Aurora handles its storage volumes in 10 GB logical blocks called “protection groups”. The data in each protection group is replicated across six storage nodes. Those storage nodes are then allocated across three AZs in the region in which the Amazon Aurora cluster resides.
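The layout described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not Aurora's actual allocation logic: the function names and AZ labels are hypothetical, and the even, round-robin spread of the six copies over the three AZs is an assumption.

```python
import math
from typing import Dict, List

SEGMENT_SIZE_GB = 10     # size of one protection group (from the text)
REPLICAS_PER_GROUP = 6   # storage nodes holding each group (from the text)


def protection_group_count(volume_gb: float) -> int:
    """Number of 10 GB protection groups needed to hold volume_gb of data."""
    return math.ceil(volume_gb / SEGMENT_SIZE_GB)


def place_replicas(az_names: List[str]) -> Dict[str, int]:
    """Count copies per AZ, assuming a simple round-robin placement."""
    placement = {az: 0 for az in az_names}
    for i in range(REPLICAS_PER_GROUP):
        placement[az_names[i % len(az_names)]] += 1
    return placement
```

Under these assumptions, `protection_group_count(64)` returns 7, and `place_replicas(["az-a", "az-b", "az-c"])` puts two copies in each AZ, so losing a whole AZ would still leave four copies of every protection group.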

So, How Fast, Reliable, and Secure Is Aurora Actually?

Amazon promises that Aurora provides 5X the throughput of standard MySQL, or 2X the throughput of standard PostgreSQL, running on the same hardware. AWS also states that on the largest Amazon Aurora instance you can achieve up to 500,000 reads and 100,000 writes per second. You can scale read operations further using read replicas, which have a very low latency of about 10 ms.
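To put the write figure in perspective, here is a rough back-of-the-envelope calculation. It assumes, purely for illustration, that each inserted row costs exactly one write and that the quoted peak rate could be sustained; real workloads will differ, and the function name is hypothetical.

```python
PEAK_WRITES_PER_SECOND = 100_000  # figure quoted by AWS for the largest instance


def ingest_seconds(row_count: int, writes_per_second: int = PEAK_WRITES_PER_SECOND) -> float:
    """Seconds needed to insert row_count rows, assuming one write per row."""
    return row_count / writes_per_second
```

With these assumptions, `ingest_seconds(300_000_000)` gives 3000.0 seconds, or about 50 minutes for 300 million rows, which is in the range of the "hundreds of millions of rows within a few hours" workloads mentioned earlier.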

If you are concerned about security, Amazon Aurora allows users to encrypt all data at rest using industry-standard AES-256 encryption. Keys and security assets can be managed through AWS Key Management Service (AWS KMS). Data in transit can be secured through a TLS connection to the Amazon Aurora cluster. Amazon Aurora also holds multiple certifications and attestations, including SOC 1, SOC 2, SOC 3, ISO 27001/9001, ISO 27017/27018, and PCI.

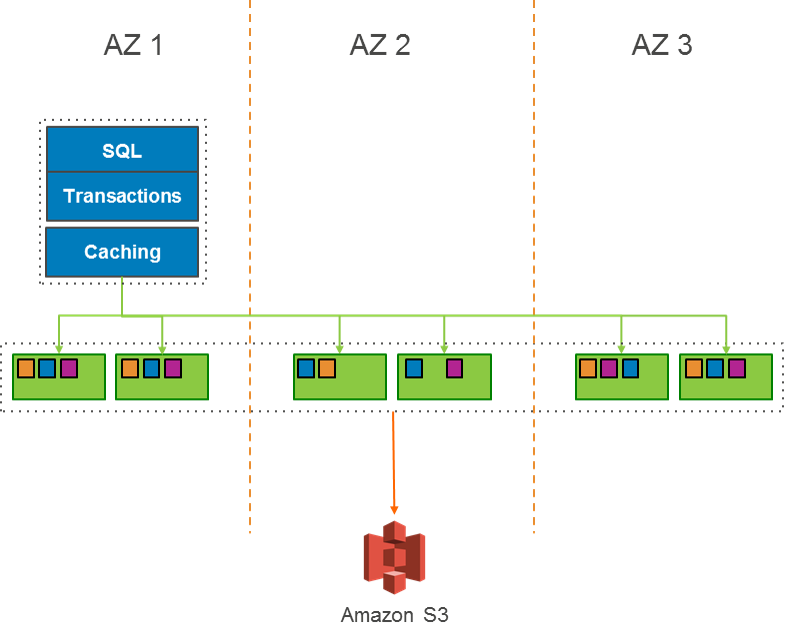

Aurora is continuously backed up to Simple Storage Service (S3) in real time, with no performance impact. Customers can therefore forget about backup windows and complex, interconnected backup scripts. Users can also restore to any point within the user-defined backup retention period. In addition, all backups are stored in S3, which is replicated across multiple data centers and is designed for 99.999999999% durability.
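The eleven-nines durability figure is easier to grasp with a little arithmetic. The sketch below assumes the percentage is an annual, per-object figure and uses exact fractions to avoid floating-point rounding; the function names are hypothetical.

```python
from fractions import Fraction

# 99.999999999% expressed as an exact fraction
DURABILITY = Fraction(99_999_999_999, 100_000_000_000)


def expected_annual_losses(object_count: int) -> Fraction:
    """Expected number of objects lost per year for object_count stored objects."""
    return object_count * (1 - DURABILITY)


def years_per_lost_object(object_count: int) -> Fraction:
    """Average number of years between single-object losses."""
    return 1 / expected_annual_losses(object_count)
```

For example, `years_per_lost_object(10_000_000)` evaluates to 10000: with ten million stored objects, you would expect to lose one object roughly every 10,000 years on average.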